What is a Lakehouse?

By Ben Lorica, Michael Armbrust, Reynold Xin, Matei Zaharia, Ali Ghodsi

Over the past few years at Databricks, we've seen a new data management architecture that emerged independently across many customers and use cases: the lakehouse. In this post we describe this new architecture and its advantages over previous approaches.

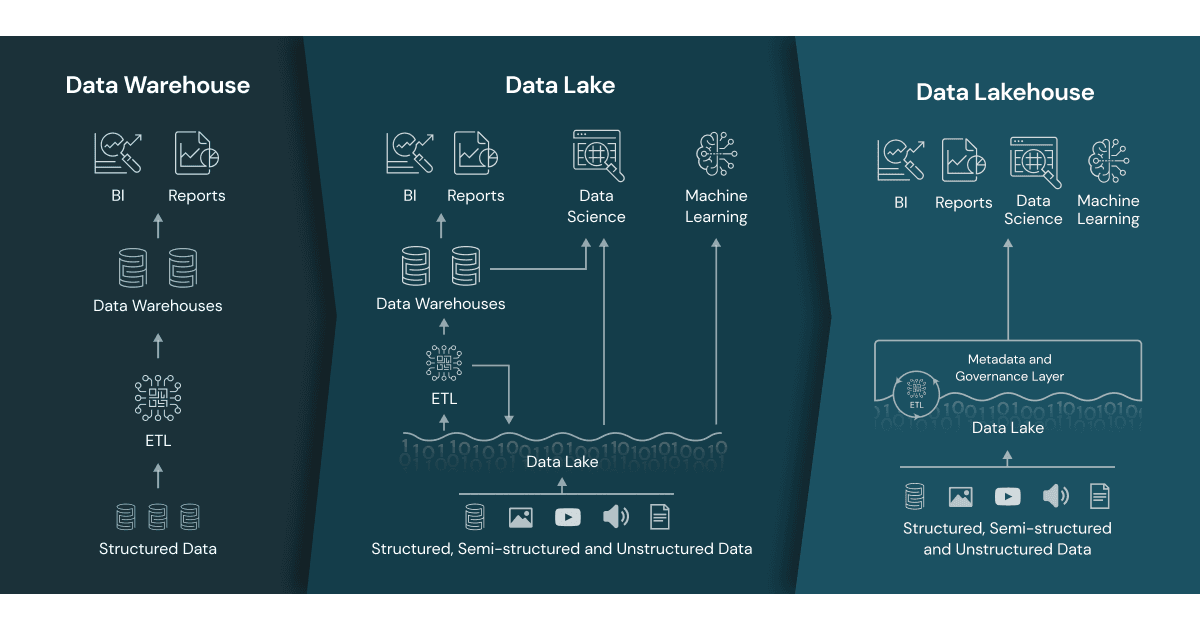

Data warehouses have a long history in decision support and business intelligence applications. Since its inception in the late 1980s, data warehouse technology has continued to evolve, and massively parallel processing (MPP) architectures led to systems that could handle larger data sizes. But while warehouses are great for structured data, many modern enterprises have to deal with unstructured data, semi-structured data, and data with high variety, velocity, and volume. Data warehouses are not suited for many of these use cases, and they are certainly not the most cost efficient.

As companies began to collect large amounts of data from many different sources, architects began envisioning a single system to house data for many different analytic products and workloads. About a decade ago companies began building data lakes - repositories for raw data in a variety of formats. While suitable for storing data, data lakes lack some critical features: they do not support transactions, they do not enforce data quality, and their lack of consistency and isolation makes it almost impossible to mix appends and reads, or batch and streaming jobs. For these reasons, many of the promises of data lakes have not materialized, and in many cases adopting one has meant losing many of the benefits of data warehouses.

The need for a flexible, high-performance system hasn't abated. Companies require systems for diverse data applications including SQL analytics, real-time monitoring, data science, and machine learning. Most of the recent advances in AI have been in better models to process unstructured data (text, images, video, audio), but these are precisely the types of data that a data warehouse is not optimized for. A common approach is to use multiple systems - a data lake, several data warehouses, and other specialized systems such as streaming, time-series, graph, and image databases. Having a multitude of systems introduces complexity and, more importantly, delay, as data professionals invariably need to move or copy data between different systems.

What is a lakehouse?

New systems are beginning to emerge that address the limitations of data lakes. A lakehouse is a new, open architecture that combines the best elements of data lakes and data warehouses. Lakehouses are enabled by a new system design: implementing data structures and data management features similar to those in a data warehouse directly on top of low cost cloud storage in open formats. They are what you would get if you had to redesign data warehouses in the modern world, now that cheap and highly reliable storage (in the form of object stores) is available.
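
To make "data management features on top of low cost storage" a little more concrete, here is a minimal sketch of one such feature: atomic, versioned appends built from nothing but files. It is a toy under stated assumptions, not the implementation of any particular lakehouse system. The `ToyTable` class, the `_log` directory layout, and the use of JSON instead of a columnar format such as Parquet are all hypothetical choices made so that the example runs with the Python standard library, with a local directory standing in for an object store.

```python
import json
import os
import tempfile
import uuid


class ToyTable:
    """A toy table: immutable data files plus an ordered commit log (hypothetical design)."""

    def __init__(self, root):
        self.data_dir = os.path.join(root, "data")
        self.log_dir = os.path.join(root, "_log")
        os.makedirs(self.data_dir, exist_ok=True)
        os.makedirs(self.log_dir, exist_ok=True)

    def _versions(self):
        # Commit files are named by zero-padded version number, e.g. 00000003.json.
        return sorted(int(name.split(".")[0]) for name in os.listdir(self.log_dir))

    def latest_version(self):
        versions = self._versions()
        return versions[-1] if versions else -1

    def append(self, rows, max_retries=5):
        """Write rows to a new data file, then try to commit it to the log."""
        # The data file is invisible to readers until a commit references it.
        data_name = f"part-{uuid.uuid4().hex}.json"
        with open(os.path.join(self.data_dir, data_name), "w") as f:
            json.dump(rows, f)
        for _ in range(max_retries):
            version = self.latest_version() + 1
            commit_path = os.path.join(self.log_dir, f"{version:08d}.json")
            try:
                # Mode "x" fails if the file already exists, so only one writer
                # can claim a given version; a losing writer retries on the next one.
                with open(commit_path, "x") as f:
                    json.dump({"add": [data_name]}, f)
                return version
            except FileExistsError:
                continue
        raise RuntimeError("could not commit after retries")

    def read(self, version=None):
        """Return all rows visible at a version (default: the latest snapshot)."""
        if version is None:
            version = self.latest_version()
        rows = []
        for v in self._versions():
            if v > version:
                break
            with open(os.path.join(self.log_dir, f"{v:08d}.json")) as f:
                commit = json.load(f)
            for data_name in commit["add"]:
                with open(os.path.join(self.data_dir, data_name)) as f:
                    rows.extend(json.load(f))
        return rows


table = ToyTable(tempfile.mkdtemp())
v0 = table.append([{"id": 1}])
v1 = table.append([{"id": 2}, {"id": 3}])
print(table.read())            # all three rows
print(table.read(version=v0))  # only the first commit's row
```

Readers only ever see data files that a commit references, so a failed or in-flight write leaves no partial results behind, and reading at an older version gives a simple form of data versioning. A production system would also need an atomic "put if absent" guarantee from the storage layer, conflict detection for updates and deletes, and log compaction, all of which this sketch omits.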

A lakehouse has the following key features:

- Transaction support: In an enterprise lakehouse many data pipelines will often be reading and writing data concurrently. Support for ACID transactions ensures consistency as multiple parties concurrently read or write data, typically using SQL.

- Schema enforcement and governance: The lakehouse should have a way to support schema enforcement and evolution, supporting data warehouse schema architectures such as star and snowflake schemas. The system should be able to reason about data integrity, and it should have robust governance and auditing mechanisms.

- BI support: Lakehouses enable using BI tools directly on the source data. This reduces staleness and latency, and lowers the cost of having to operationalize two copies of the data in both a data lake and a warehouse.

- Storage is decoupled from compute: In practice this means storage and compute use separate clusters, thus these systems are able to scale to many more concurrent users and larger data sizes. Some modern data warehouses also have this property.

- Openness: The storage formats they use are open and standardized, such as Parquet, and they provide an API so a variety of tools and engines, including machine learning and Python/R libraries, can efficiently access the data directly.

- Support for diverse data types ranging from unstructured to structured data: The lakehouse can be used to store, refine, analyze, and access data types needed for many new data applications, including images, video, audio, semi-structured data, and text.

- Support for diverse workloads: including data science, machine learning, and SQL and analytics. Multiple tools might be needed to support all these workloads but they all rely on the same data repository.

- End-to-end streaming: Real-time reports are the norm in many enterprises. Support for streaming eliminates the need for separate systems dedicated to serving real-time data applications.

These are the key attributes of lakehouses. Enterprise grade systems require additional features. Tools for security and access control are basic requirements. Data governance capabilities including auditing, retention, and lineage have become essential particularly in light of recent privacy regulations. Tools that enable data discovery such as data catalogs and data usage metrics are also needed. With a lakehouse, such enterprise features only need to be implemented, tested, and administered for a single system.
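
As an illustration of the schema enforcement and evolution feature listed above, the following sketch rejects any batch containing a row that does not match the declared schema, and supports adding a new nullable column. It is a simplified in-memory model, not the API of any real lakehouse engine; `SchemaEnforcedTable`, `SchemaError`, and the column names are hypothetical.

```python
class SchemaError(ValueError):
    """Raised when a row does not conform to the table schema."""


class SchemaEnforcedTable:
    """In-memory toy table that validates rows on write (hypothetical design)."""

    def __init__(self, schema):
        # schema maps column name -> expected Python type, e.g. {"id": int}
        self.schema = dict(schema)
        self.rows = []

    def _validate(self, row):
        unknown = set(row) - set(self.schema)
        if unknown:
            raise SchemaError(f"unknown columns: {sorted(unknown)}")
        for column, expected in self.schema.items():
            value = row.get(column)  # a missing column is treated as null
            if value is not None and not isinstance(value, expected):
                raise SchemaError(
                    f"column {column!r} expects {expected.__name__}, "
                    f"got {type(value).__name__}"
                )

    def append(self, batch):
        # Validate the whole batch first, so one bad row rejects the entire
        # batch and the table never holds a partially applied write.
        for row in batch:
            self._validate(row)
        self.rows.extend({c: row.get(c) for c in self.schema} for row in batch)

    def add_column(self, name, column_type):
        """Schema evolution: add a nullable column; existing rows get None."""
        if name in self.schema:
            raise SchemaError(f"column {name!r} already exists")
        self.schema[name] = column_type
        for row in self.rows:
            row[name] = None


sales = SchemaEnforcedTable({"id": int, "amount": float})
sales.append([{"id": 1, "amount": 9.5}])
try:
    sales.append([{"id": 2, "amount": 1.0}, {"id": "3", "amount": 2.0}])
except SchemaError as err:
    print("rejected batch:", err)
sales.add_column("region", str)
print(sales.rows)
```

Validating before writing is what lets downstream BI and machine learning consumers trust the table's shape. Real systems enforce this at the storage and transaction layer rather than in application code.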

Read the full research paper on the inner workings of the Lakehouse.

Some early examples

The Databricks Lakehouse Platform has the architectural features of a lakehouse. Microsoft's Azure Synapse Analytics service, which integrates with Azure Databricks, enables a similar lakehouse pattern. Other managed services such as BigQuery and Redshift Spectrum have some of the lakehouse features listed above, but they focus primarily on BI and other SQL applications. Companies that want to build and implement their own systems have access to open source table formats (Delta Lake, Apache Iceberg, Apache Hudi) that are suitable for building a lakehouse.

Merging data lakes and data warehouses into a single system means that data teams can move faster as they are able to use data without needing to access multiple systems. The level of SQL support and integration with BI tools among these early lakehouses is generally sufficient for most enterprise data warehouses. Materialized views and stored procedures are available, but users may need to employ other mechanisms that aren't equivalent to those found in traditional data warehouses. This gap matters most in "lift and shift" scenarios, which require systems whose semantics are almost identical to those of older, commercial data warehouses.

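What about support for other types of data applications? Users of a lakehouse have access to a variety of standard tools (Spark, Python, R, machine learning libraries) for non-BI workloads like data science and machine learning. Data exploration and refinement are standard for many analytic and data science applications. Delta Lake is designed to let users incrementally improve the quality of data in their lakehouse until it is ready for consumption.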
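
The idea of incrementally improving data quality can be sketched as a chain of small refinement steps, each producing a cleaner table from the previous one. The example below uses plain Python lists rather than Delta Lake or Spark. The stage names (`raw_events`, `clean`, `aggregate`), the sample records, and the deduplication rule are all assumptions made for illustration.

```python
from collections import defaultdict

# Stage 1: raw data as it arrives, with duplicates and malformed records.
raw_events = [
    {"user": "a", "amount": "10.5"},
    {"user": "a", "amount": "10.5"},  # exact duplicate
    {"user": "b", "amount": "oops"},  # malformed amount
    {"user": None, "amount": "3"},    # missing user
    {"user": "b", "amount": "4"},
]


def clean(rows):
    """Stage 2: drop invalid rows, cast types, and remove duplicates."""
    seen = set()
    cleaned = []
    for row in rows:
        if row.get("user") is None:
            continue
        try:
            amount = float(row["amount"])
        except (KeyError, TypeError, ValueError):
            continue
        # Assumption: identical (user, amount) pairs are duplicates. A real
        # pipeline would deduplicate on a stable event identifier instead.
        key = (row["user"], amount)
        if key in seen:
            continue
        seen.add(key)
        cleaned.append({"user": row["user"], "amount": amount})
    return cleaned


def aggregate(rows):
    """Stage 3: a consumption-ready summary, here total amount per user."""
    totals = defaultdict(float)
    for row in rows:
        totals[row["user"]] += row["amount"]
    return dict(totals)


cleaned_events = clean(raw_events)
print(cleaned_events)             # [{'user': 'a', 'amount': 10.5}, {'user': 'b', 'amount': 4.0}]
print(aggregate(cleaned_events))  # {'a': 10.5, 'b': 4.0}
```

Keeping every stage in the same repository means data scientists can explore the raw records while BI users query the refined summary, without copying data between systems.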

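A note about technical building blocks. While distributed file systems can be used for the storage layer, object stores are more commonly used in lakehouses. Object stores provide low cost, highly available storage that excels at massively parallel reads - an essential requirement for modern data warehouses.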
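
The parallel-read pattern can be illustrated with a small sketch in which many independent objects are fetched concurrently and then combined. A local temporary directory stands in for an object store, JSON files stand in for columnar data files, and the helper names (`write_partitions`, `read_partition`, `parallel_scan`) are hypothetical. A real engine would issue ranged requests against a cloud storage API across many machines.

```python
import json
import os
import tempfile
from concurrent.futures import ThreadPoolExecutor


def write_partitions(root, partitions):
    """Write each partition as its own object (file) and return the paths."""
    paths = []
    for i, rows in enumerate(partitions):
        path = os.path.join(root, f"part-{i:05d}.json")
        with open(path, "w") as f:
            json.dump(rows, f)
        paths.append(path)
    return paths


def read_partition(path):
    """Read one object; each read is independent of all the others."""
    with open(path) as f:
        return json.load(f)


def parallel_scan(paths, max_workers=8):
    """Read all partitions concurrently and concatenate their rows."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map returns results in input order, so the scan is
        # deterministic even though the reads overlap in time.
        chunks = list(pool.map(read_partition, paths))
    return [row for chunk in chunks for row in chunk]


root = tempfile.mkdtemp()
paths = write_partitions(root, [[{"id": 1}], [{"id": 2}, {"id": 3}], [{"id": 4}]])
print(parallel_scan(paths))
```

Because each object is immutable and read independently, adding more readers increases scan throughput without any coordination between them.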

From BI to AI

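The lakehouse is a new data management architecture that radically simplifies enterprise data infrastructure and accelerates innovation in an age when machine learning is poised to disrupt every industry. In the past, most of the data that went into a company's products or decision making was structured data from operational systems, whereas today many products incorporate AI in the form of computer vision and speech models, text mining, and others. Why use a lakehouse instead of a data lake for AI? A lakehouse gives you data versioning, governance, security, and ACID properties that are needed even for unstructured data.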

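Current lakehouses reduce cost, but their performance can still lag behind specialized systems (such as data warehouses) that have years of investment and real-world deployments behind them. Users may favor certain tools (BI tools, IDEs, notebooks) over others, so lakehouses will also need to improve their UX and their connectors to popular tools in order to appeal to a variety of users. These and other issues will be addressed as the technology continues to mature and develop. Over time, lakehouses will close these gaps while retaining their core properties of being simpler, more cost efficient, and more capable of serving diverse data applications.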

Read the FAQ on the data lakehouse for more details.

