What is Managed MLflow?

Managed MLflow extends the functionality of MLflow, an open source platform developed by Databricks for building better models and generative AI apps, with a focus on enterprise reliability, security, and scalability. The latest update to MLflow introduces new GenAI and LLMOps features that improve its ability to manage and deploy large language models (LLMs). This expanded LLM support comes from new integrations with the industry-standard LLM tools OpenAI and Hugging Face Transformers, as well as with the MLflow Deployments Server. In addition, MLflow's integration with LLM frameworks (for example, LangChain) simplifies model development for generative AI applications across a variety of use cases, including chatbots, document summarization, text classification, sentiment analysis, and more.

Benefits

Model development

Improve and accelerate machine learning lifecycle management with a standardized framework for production-ready models. Managed MLflow Recipes enable seamless ML project bootstrapping, rapid iteration, and large-scale model deployment. Build applications such as chatbots, document summarization, sentiment analysis, and classification with little effort. Develop generative AI apps (for example, chatbots and document summarization) with MLflow's LLM offerings, which integrate with LangChain, Hugging Face, and OpenAI.

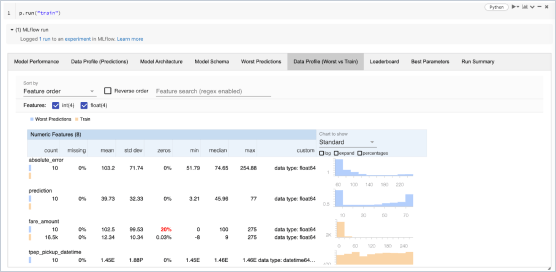

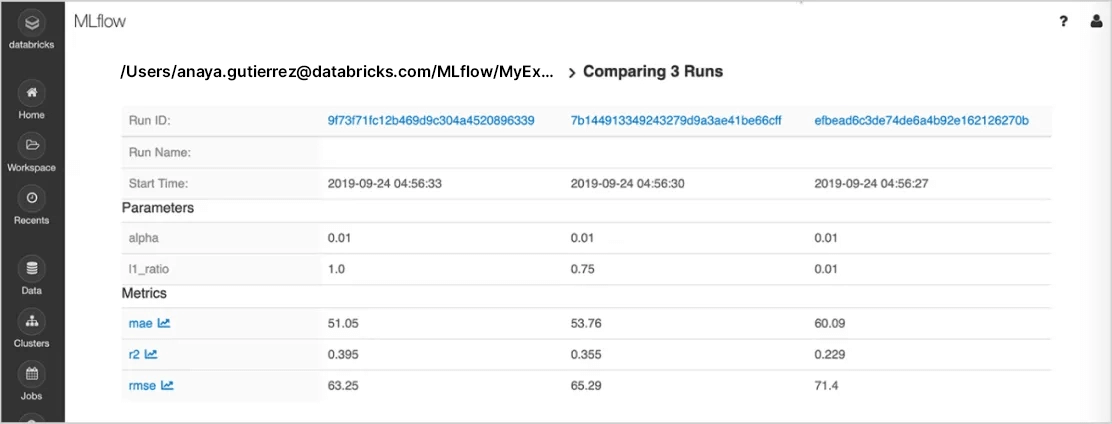

Experiment tracking

Run experiments with any ML library, framework, or language, and automatically keep track of the parameters, metrics, code, and models from each experiment. With MLflow on Databricks, you can securely share, manage, and compare experiment results along with the corresponding artifacts and code versions, thanks to built-in integrations with the Databricks workspace and notebooks. You can also evaluate the results of GenAI experiments and improve their quality with MLflow's evaluation functionality.

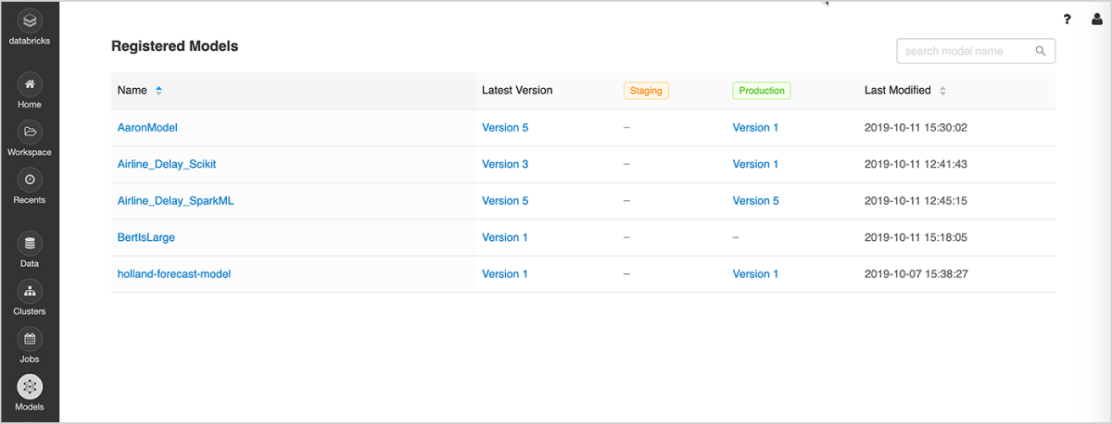

Model management

Use one central place to discover and share ML models, collaborate on moving them from experimentation to online testing and production, integrate with approval and governance workflows and CI/CD pipelines, and monitor ML deployments and their performance. The MLflow Model Registry facilitates the sharing of expertise and knowledge and helps you stay in control.

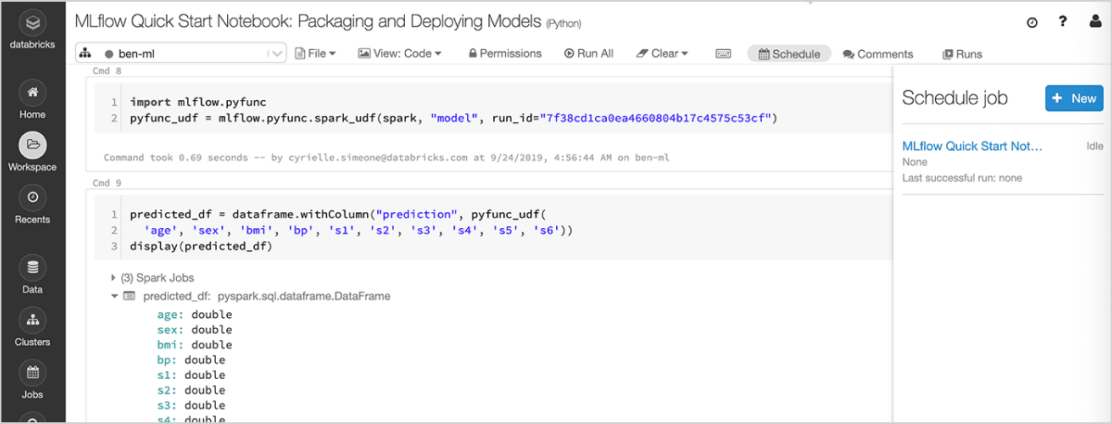

Model deployment

Quickly deploy production models for batch inference on Apache Spark™ or as REST APIs, using built-in integration with Docker containers, Azure ML, or Amazon SageMaker. With Managed MLflow on Databricks, you can operationalize and monitor production models using the Databricks Jobs Scheduler and auto-managed clusters that scale with business needs.

The latest updates to MLflow package GenAI applications for deployment. You can now deploy chatbots and other GenAI applications, such as document summarization, sentiment analysis, and classification, at scale using Databricks Model Serving.
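To make the REST deployment path more concrete, here is a minimal sketch of how a client might prepare a scoring request for a model served over HTTP. It assumes the endpoint accepts the `dataframe_split` JSON format on an `/invocations` path, as MLflow's scoring server does. The `build_scoring_request` helper, the local URL, the column name, and the input text are hypothetical. The request is only built, not sent, so the sketch runs without a live endpoint.

```python
import json
import urllib.request
from typing import Optional


def build_scoring_request(
    endpoint_url: str,
    columns: list,
    rows: list,
    token: Optional[str] = None,
) -> urllib.request.Request:
    """Build (but do not send) a POST request for an MLflow-style scoring endpoint."""
    # Assumption: the server accepts pandas "split"-oriented input.
    payload = {"dataframe_split": {"columns": columns, "data": rows}}
    headers = {"Content-Type": "application/json"}
    if token:
        # Hosted endpoints usually require a bearer token; local ones may not.
        headers["Authorization"] = f"Bearer {token}"
    return urllib.request.Request(
        endpoint_url,
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


if __name__ == "__main__":
    request = build_scoring_request(
        "http://127.0.0.1:5000/invocations",  # hypothetical local model server
        columns=["text"],
        rows=[["Summarize this document."]],
    )
    print(request.get_method(), request.full_url)
    print(request.data.decode("utf-8"))
    # Sending is left out on purpose:
    # urllib.request.urlopen(request) would return the predictions as JSON.
```

For a Databricks Model Serving endpoint, the URL would instead point at the workspace's serving endpoint and a token would be supplied. The exact URL and authentication setup depend on your workspace.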

Features

See the Azure Databricks and AWS product news to learn about our latest features.

Comparing MLflow offerings

| | Open Source MLflow | Managed MLflow on Databricks |
|---|---|---|
| Experiment tracking | | |
| MLflow Tracking API | | |
| MLflow Tracking Server | Self-hosted | Fully managed |
| Notebooks integration | | |
| Workflows integration | | |
| Reproducible projects | | |
| MLflow Projects | | |
| Git and Conda integration | | |
| Scalable cloud/clusters for project runs | | |
| Model management | | |
| MLflow Model Registry | | |
| Model versioning | | |
| ACL-based stage transition | | |
| CI/CD workflow integrations | | |
| Flexible deployment | | |
| Built-in batch inference | | |
| MLflow Models | | |
| Built-in streaming analytics | | |
| Security and management | | |
| High availability | | |
| Automated updates | | |
| Role-based access control | | |

Resources

Blog

Video

Tutorial

Webinar

Frequently Asked Questions
