10 Data Warehouse Migration Myths Blocking AI-readiness (and Your Blueprint for Seamless Modernization)

Best practices for seamlessly migrating your data warehouse to an open data lakehouse

by Olga Romanova and George Komninos

- Migrate your data warehouse for ROI, not just cost. Consolidate platforms, unlock AI on governed data, and decommission legacy systems faster.

- Go beyond code conversion. Use automated discovery, value-based descoping, and rigorous validation to reduce risk and technical debt.

- Modernize pragmatically. Combine automation with lift-and-shift to accelerate timelines and achieve predictable ROI.

Moving to a modern data warehouse is a critical part of any enterprise AI-readiness strategy. But without the right approach, data warehouse migrations are often perceived as high-risk, resource-intensive initiatives. The primary challenges (managing technical debt, ensuring data integrity, and minimizing downtime) can feel overwhelming without a structured framework.

At Databricks, seamless migrations follow a proven, repeatable approach: discovery and rationalization, automated conversion, rigorous validation, optimization for the lakehouse architecture, and early decommissioning of legacy systems. However, certain misconceptions about the complexity and cost of the transition persist.

This blog covers common myths that often derail the process and the Databricks framework for efficient and seamless migrations.

Myth 1: Companies should focus only on costs when planning a data warehouse migration

Reality: Value is driven by AI enablement, operational agility, and platform consolidation

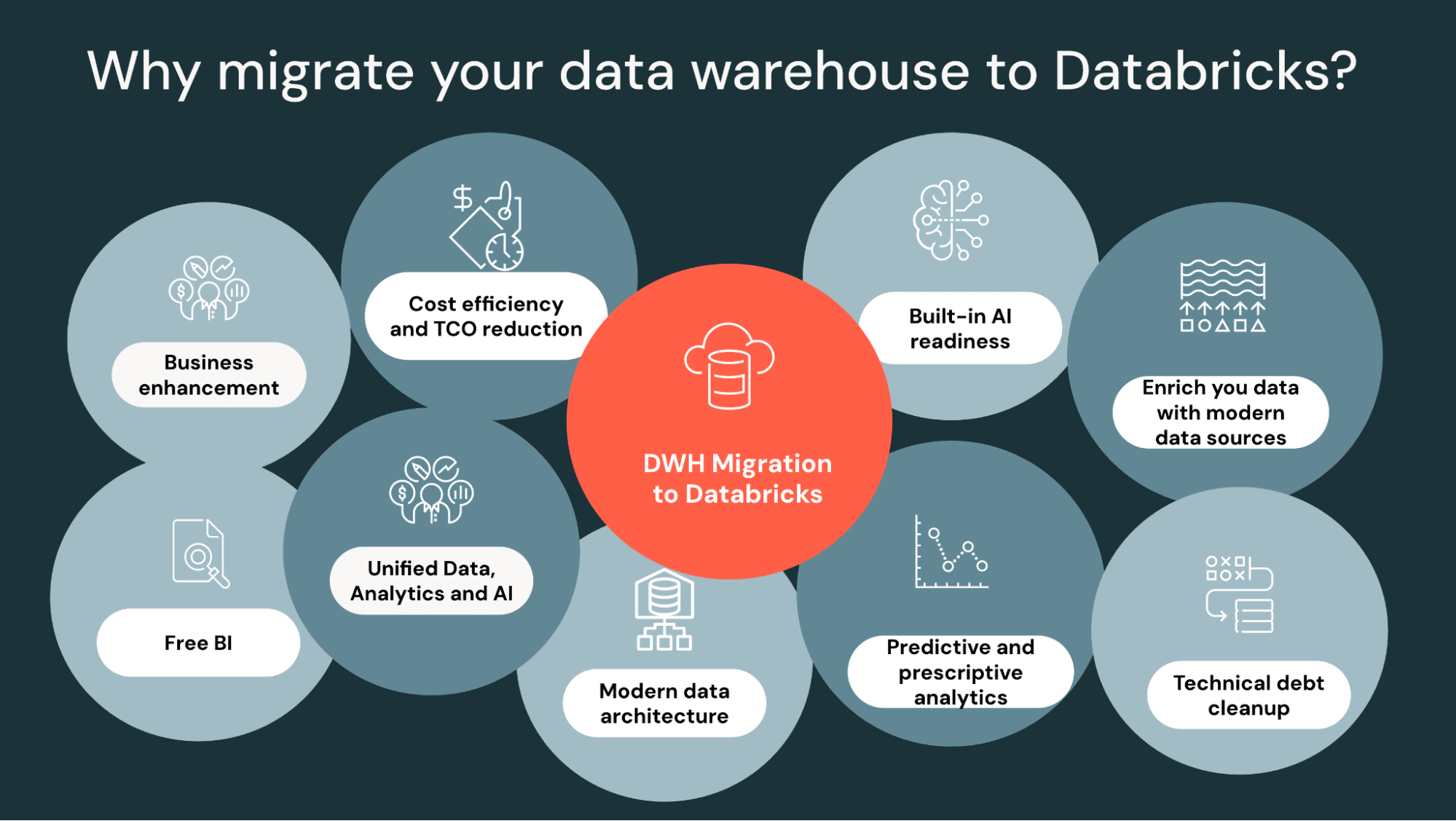

While Databricks consistently delivers superior TCO validated by industry-standard TPC-DS benchmarks, cost reduction is only one component of the value proposition. Companies should focus on the migration ROI to the business, including new value drivers that legacy environments cannot support:

Platform consolidation and operational efficiencies

Migrating consolidates fragmented data warehousing platforms, simplifying the data estate. By moving to Databricks, Williams, for example, achieved a 40% reduction in TCO while revolutionizing decision-making capabilities.

Enabling AI and intelligence

Migration is the catalyst for your data, agents, and AI apps. Once their data is consolidated and governed, enterprises can use it for AI use cases and build data products tailored to the business. For example, Insulet achieved a 97% reduction in processing costs, but more importantly, unlocked the ability to process data for advanced analytics and AI that legacy systems could not scale to handle. DXC achieved a 30% reduction in TCO by unifying its global data estate, but the primary gain was the ability to reduce time to insights from months to days.

Platform EOL and datacenter exits

Many migrations are driven by the urgency of legacy platform End-of-Life (EOL) cycles or strategic datacenter exits, pushing organizations toward cloud-native reliability.

Unlocking free BI

Databricks Lakehouse unifies both AI and BI workloads, empowering self-service analytics through natural language using AI/BI Genie. Democratize data access without the "user tax" of traditional BI tools. By migrating to Databricks Lakehouse, companies like Novade (60% TCO reduction) are not just saving money; they are reducing operational overhead and improving reliability. This shift unlocks use cases that are feasible only with modern platforms' advanced analytics, real-time insights, and AI-driven capabilities, creating opportunities for innovation and differentiated business outcomes.

Myth 2: Data warehouse migration is just about SQL code conversion

Reality: Successful migration requires architectural realignment, governance, and deep business engagement

A common mistake is viewing migration through the narrow lens of SQL translation. A successful migration requires a broader lens that includes design, governance, validation, orchestration, change management, and business alignment.

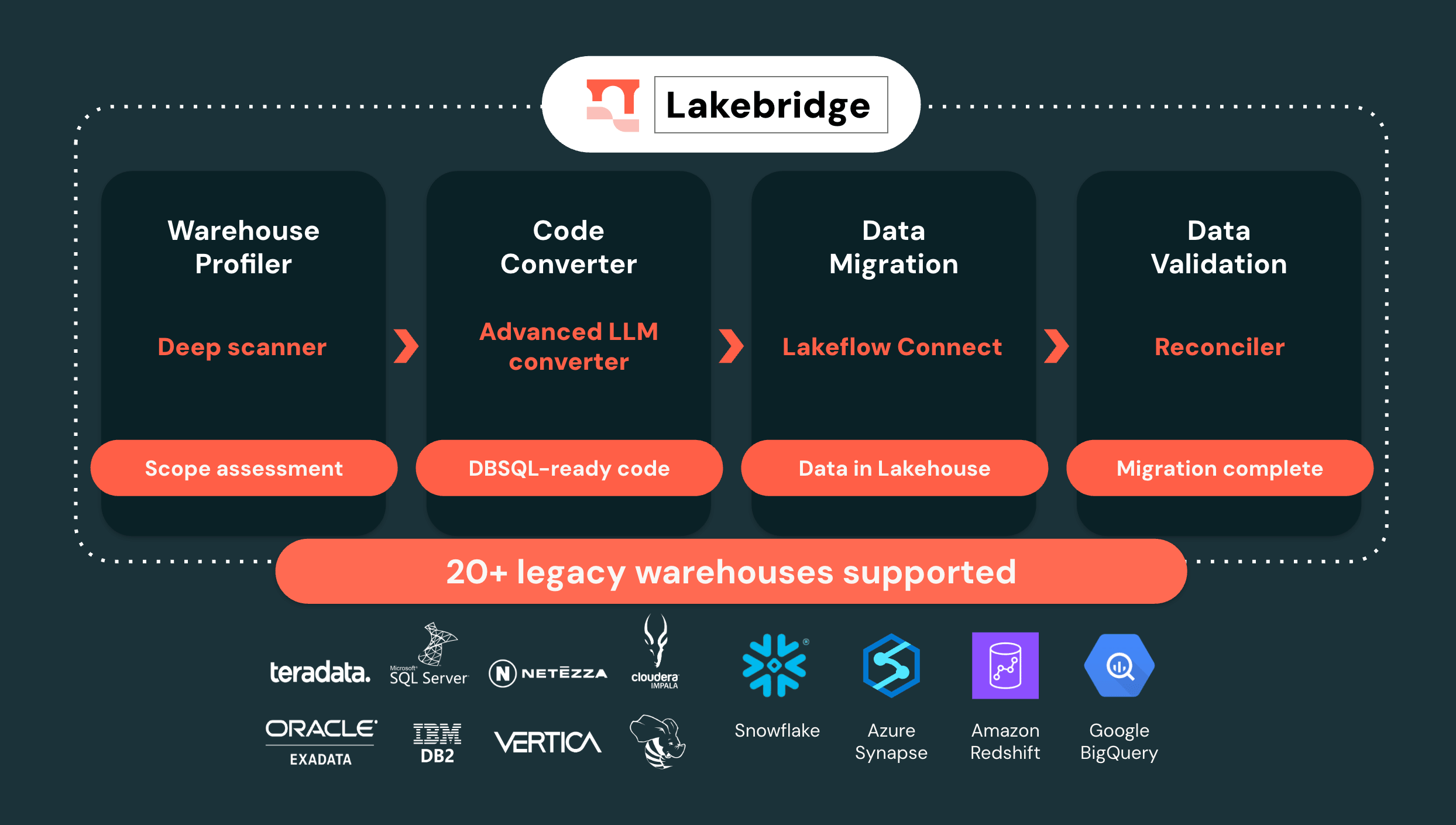

During the assessment phase, the migration plan and architectural design are vital. Databricks leverages Lakebridge as a key accelerator in this phase to automate discovery and object usage analysis, ensuring you understand the full scope of your estate before moving a single table and eliminating the guesswork. Internal know-how helps automate the effort and timeline estimates during planning.

During migration, organizations often overlook the "validation gap". While validation can consume 50-60% of the total migration effort, this is not something to be feared. Databricks’ migration framework explicitly accounts for validation as a first-class phase, with automated reconciliation and lineage tooling built into the process.

When migrating to a new orchestration framework, existing logic often needs repointing, redesigning, or reimplementation due to differences in triggers, error handling, and scalability concerns on the new platform.

A successful migration hinges on more than technical expertise; it requires alignment with the business, governance, and change management. That’s why at Databricks, we collaborate with the business teams during the validation phase to ensure we are meeting their SLAs. Business stakeholders’ domain expertise is crucial for interpreting results, spotting discrepancies, and certifying that the modernized system supports downstream reporting and analytics needs.

Myth 3: All legacy objects need to be migrated

Reality: A "value-first" audit reveals massive redundancy

Attempting to migrate every legacy object - including deprecated tables and obsolete stored procedures - introduces technical debt, extended timelines, and unnecessary costs. Industry benchmarks suggest that a significant share of legacy data warehouse objects is redundant or unused. By assessing business use cases and identifying critical workloads first, organizations achieve a significantly faster return on investment.

The Databricks migration framework recommends a thorough discovery process that enables descoping of unnecessary assets. A successful migration design then ensures that the remaining assets are merged or modernized appropriately, using automation.
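As a rough illustration of value-based descoping, the sketch below classifies objects from a usage inventory into "migrate", "review", or "descope". It is not tied to Lakebridge or any other tool: the `LegacyObject` fields, the 180-day staleness window, and the sample object names are all assumptions made for the example, and a real audit would draw on query logs and lineage metadata.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass(frozen=True)
class LegacyObject:
    """One table, view, or stored procedure from a hypothetical usage inventory."""
    name: str
    last_accessed: Optional[date]  # None: no access recorded in the audit window
    downstream_dependents: int     # jobs or reports that still read this object


def classify(obj: LegacyObject, today: date, stale_after_days: int = 180) -> str:
    """Return 'migrate', 'review', or 'descope' for a single object."""
    cutoff = today - timedelta(days=stale_after_days)
    is_stale = obj.last_accessed is None or obj.last_accessed < cutoff
    if not is_stale:
        return "migrate"
    # Stale objects that still have dependents need a human decision.
    return "review" if obj.downstream_dependents > 0 else "descope"


# Hypothetical inventory, for illustration only.
today = date(2025, 1, 1)
inventory = [
    LegacyObject("sales_fact", today - timedelta(days=3), 12),
    LegacyObject("legacy_rollup", today - timedelta(days=400), 2),
    LegacyObject("tmp_backup_2019", None, 0),
]
plan = {obj.name: classify(obj, today) for obj in inventory}
print(plan)
```

The "review" bucket is the important design choice here: an object that looks unused but still has dependents is routed to business SMEs rather than dropped automatically.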

Myth 4: Automation ensures successful migration

Reality: Successful automation requires a pragmatic balance and specialized tools

Relying solely on tool-based migration shifts the legacy system's technical debt to the modern platform, yet one of the goals of a migration is to retire that debt.

At Databricks, we view migrations holistically, with Lakebridge and accelerators playing a significant part in the migration journey. It is essential to evaluate how automation could accelerate the migration. Quantifying acceleration levels informs migration process decisions and optimizes migration outcomes while ensuring the elimination of technical debt.

In practice, any well-executed migration is a fine balance between modernizing high-impact components like architecture, frameworks, and old code base and "lifting and shifting" modern code and reporting assets. Of course, some non-performant code needs refactoring, but the goal is to allocate modernization effort to high-yield, long-term investments with strong returns, such as the most resource-intensive queries, while leveraging automation to handle the bulk of standard transformation logic. This approach, combined with professional services know-how, yields up to 90% automation.

Myth 5: Technical migration’s success depends purely on technical expertise and tooling conversion rates

Reality: Success requires subject matter expert (SME) alignment, a Center of Excellence (CoE), and the right tooling

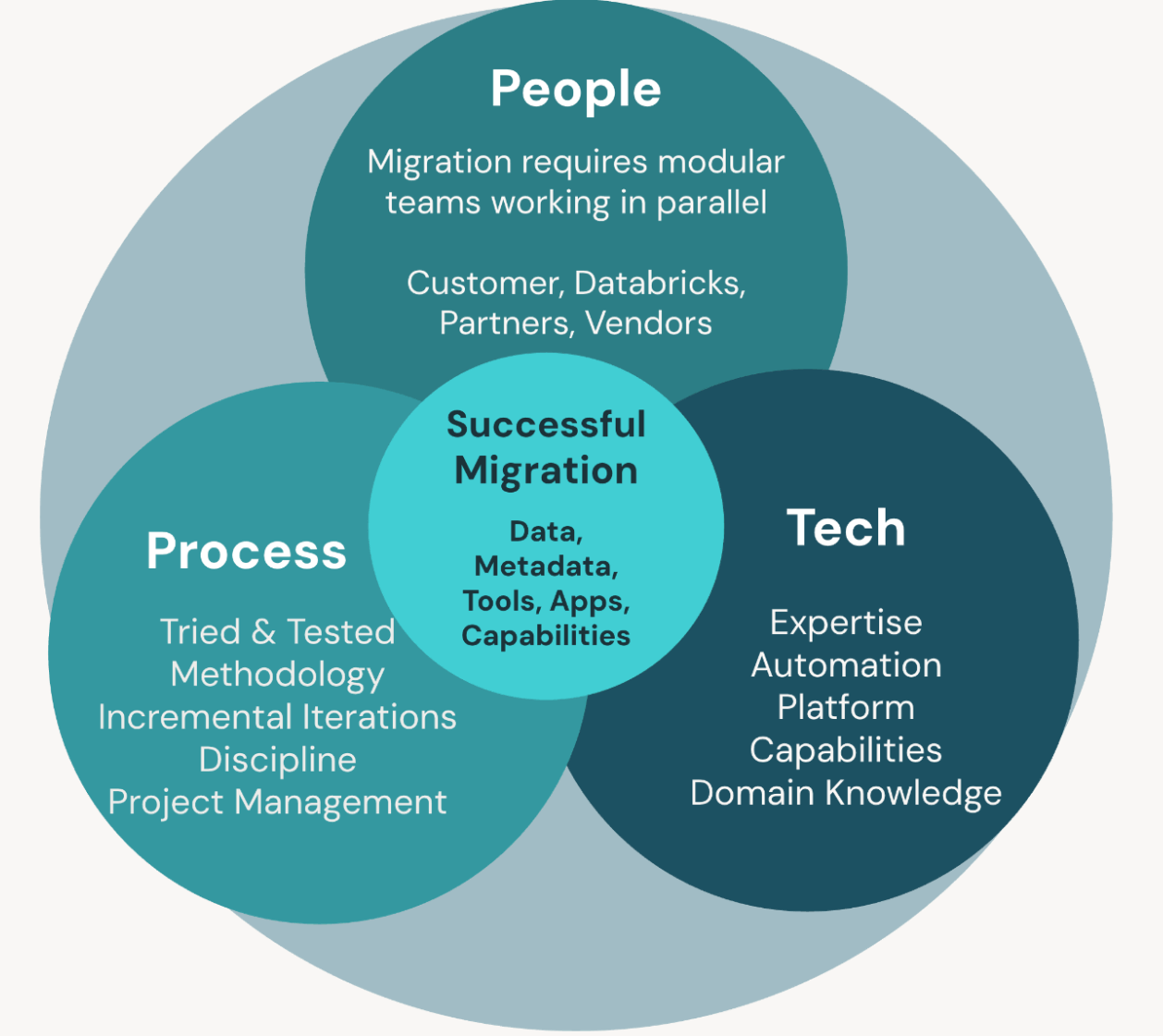

Technical teams often assume legacy requirements are accurately documented. In practice, engaging Business SMEs is vital for validating the underlying logic and prioritizing high-value use cases. Beyond technical considerations, Databricks embraces a holistic “people, process, platform” mentality when driving Lakehouse adoption.

- People are at the center - we empower cross-functional teams, fostering collaboration between technical and business stakeholders to ensure alignment and knowledge transfer throughout migration.

- Process is critical for sustainable change. Our standard delivery incorporates structured methodologies, accelerators, and robust change management, enabling organizations to embed best practices and adapt workflows for modern data environments.

- The platform dimension leverages Databricks’ flexible capabilities, deploying a mix of LLMs, rule-based, and deterministic engines tailored to the complexity of the code being converted and the customer’s unique environment.

To sustain this value at scale, we champion the creation of a CoE as a hub for innovation and governance, reinforcing continuous improvement and operational excellence. This integrated approach ensures organizations not only migrate their data but also build the skills, processes, and technology foundation required to fully realize the benefits of a Lakehouse platform.

Myth 6: Data validation is trivial

Reality: Precision and reconciliation are highly complex and require defined SLAs

Validating complex data types in legacy systems (e.g., Oracle or Teradata) and reconciling with Lakehouse formats requires more than simple row counts. When validating data and logic, it is important to recognize two types of logic:

- Deterministic logic: Produces the same output for the same input every time, allowing for straightforward, repeatable validation.

- Non-deterministic logic: Can yield slightly different outputs across runs. Validating these artifacts requires a strong business context to define acceptable ranges or patterns rather than relying on exact matches.
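The distinction between the two types of logic can be sketched in a few lines. This is a minimal illustration, not the validation tooling described in this post: the function names are hypothetical, rows are assumed to be plain tuples already normalized to comparable types, and the relative tolerance is an arbitrary placeholder that the business would need to define.

```python
import hashlib
import math


def row_fingerprint(row: tuple) -> str:
    """Stable hash of one row; assumes values are already type-normalized."""
    return hashlib.sha256(repr(tuple(row)).encode("utf-8")).hexdigest()


def deterministic_match(legacy_rows, target_rows) -> bool:
    """Exact, order-independent comparison for deterministic logic."""
    legacy = sorted(row_fingerprint(r) for r in legacy_rows)
    target = sorted(row_fingerprint(r) for r in target_rows)
    return legacy == target


def within_tolerance(legacy_value: float, target_value: float,
                     rel_tol: float = 1e-6) -> bool:
    """Range-based comparison for non-deterministic logic."""
    return math.isclose(legacy_value, target_value, rel_tol=rel_tol)


# Deterministic output: same rows in a different order still match.
legacy_rows = [(1, "a", None), (2, "b", 3.5)]
target_rows = [(2, "b", 3.5), (1, "a", None)]
print(deterministic_match(legacy_rows, target_rows))

# Non-deterministic output: compare against an agreed tolerance instead.
print(within_tolerance(100.0, 100.00001))
```

Fingerprinting goes beyond row counts because two tables with the same number of rows can still differ in content. In practice, numeric precision and timestamp formats differ between engines, so values usually need to be normalized before hashing.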

This complexity is relevant not only to legacy systems but also to Change Data Capture (CDC), snapshotting, and in-place automation for incremental loads and streaming. Because production data is dynamic, defining an SLA (e.g., requiring validation to be 99.x% accurate) is key for reconciliation success. Working with Professional Services helps ensure that a detailed validation plan is in place and followed, and that rigorous reconciliation and lineage-tracking tools are used to maintain data integrity throughout the migration lifecycle.
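An SLA-based reconciliation check might look like the sketch below. It assumes both systems can be snapshotted as dictionaries keyed by primary key, which is a simplification for moving production data. The `reconcile` and `meets_sla` names are hypothetical, and the 0.99 threshold in the usage is an arbitrary stand-in, since the actual figure has to be agreed with the business.

```python
def reconcile(legacy: dict, target: dict) -> dict:
    """Compare two snapshots keyed by primary key and summarize differences."""
    all_keys = legacy.keys() | target.keys()
    missing = [k for k in legacy if k not in target]
    unexpected = [k for k in target if k not in legacy]
    mismatched = [k for k in legacy.keys() & target.keys()
                  if legacy[k] != target[k]]
    matched = len(all_keys) - len(missing) - len(unexpected) - len(mismatched)
    return {
        "match_rate": matched / len(all_keys) if all_keys else 1.0,
        "missing_in_target": missing,
        "unexpected_in_target": unexpected,
        "mismatched": mismatched,
    }


def meets_sla(report: dict, threshold: float) -> bool:
    """True when the match rate reaches the agreed threshold."""
    return report["match_rate"] >= threshold


# Hypothetical snapshots: 1000 legacy rows, 3 changed and 2 lost in the target.
legacy = {i: (i, i * 2) for i in range(1000)}
target = dict(legacy)
for key in (0, 1, 2):
    target[key] = (key, -1)
for key in (998, 999):
    del target[key]

report = reconcile(legacy, target)
print(report["match_rate"], meets_sla(report, threshold=0.99))
```

Returning the offending keys alongside the rate keeps the report actionable: a failed SLA points directly at the rows that SMEs need to inspect.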

Myth 7: Modernization is inherently more expensive and time-consuming than legacy maintenance

Reality: Early decommissioning yields rapid ROI

While migrations require an initial capital and time investment, the "operational tax" of legacy systems is often the largest drain on IT budgets. By utilizing acceleration frameworks and planning for the rapid decommissioning of legacy licenses, organizations often achieve a positive ROI within the first 12 months.
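To see why the decommissioning date matters so much, consider a simple payback model. The sketch below is an assumption-laden illustration, not a Databricks pricing tool: it compares a migration against the baseline of staying on the legacy platform, assumes flat monthly costs, and uses made-up figures (in arbitrary currency units).

```python
from typing import Optional


def payback_month(migration_cost: int, legacy_monthly_cost: int,
                  new_monthly_cost: int, decommission_month: int,
                  horizon_months: int = 36) -> Optional[int]:
    """First month (1-based) in which cumulative savings cover the migration
    cost, or None if that does not happen within the horizon."""
    cumulative = -migration_cost
    for month in range(1, horizon_months + 1):
        if month < decommission_month:
            # Both platforms run in parallel: the new platform is extra spend.
            cumulative -= new_monthly_cost
        else:
            # Legacy is switched off: the cost difference is realized as savings.
            cumulative += legacy_monthly_cost - new_monthly_cost
        if cumulative >= 0:
            return month
    return None


# Hypothetical figures: same project, early vs. late decommissioning.
early = payback_month(600, 200, 80, decommission_month=4)
late = payback_month(600, 200, 80, decommission_month=10)
print(early, late)
```

With these illustrative numbers, decommissioning in month 4 pays back in month 10, while waiting until month 10 pushes payback out to month 20. Every month of parallel running both adds cost and delays the start of savings.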

An additional justification for investment is that migrating enables the adoption of new use cases and capabilities that are not feasible on the legacy platform. Modernizing the stack reduces the long-term maintenance burden, empowering engineering teams to move beyond simply "keeping the lights on" and focus on driving AI-led innovation. After the migration, developers are freed from legacy platform administration and can concentrate on more productive and strategic tasks that deliver greater business value.

Myth 8: Scaling the platform requires a massive increase in engineering resources

Reality: Success is driven by a certified partner ecosystem and in-house enablement

While traditional approaches to data warehouse migration often require large teams to handle complex workflows, modern tooling and automation have drastically reduced these needs. Certified migration partners, working with Professional Services, ensure quality and bring a depth of experience, leveraging proven methodologies and accelerators tailored to Databricks that directly address common challenges and avoid unnecessary engineering overhead. Their expertise allows a customer’s internal team to focus on business-critical activities, rather than the intricacies of refactoring legacy workloads or troubleshooting nuanced compatibility issues.

Moreover, the migration process is designed to ensure minimal disruption, with change management and enablement built into it. Interactive workshops, hands-on training, and documentation empower users - so by project completion, the in-house team has the skills and knowledge to operate, optimize, and extend the platform independently. In this model, businesses realize ongoing agility and cost efficiency without the legacy burden of a sizable migration workforce.

Myth 9: Lift and shift never works with Databricks

Reality: Lift and shift can be the best path for tight timelines

While complete modernization enables advanced capabilities immediately, a lift-and-shift approach allows organizations to quickly decommission legacy systems and reduce operational risk during cutover. Lift and shift is the recommended migration approach when the primary drivers are time to migrate, ease and accuracy of planning, or the criticality of downstream applications that depend on a stable schema and behavior.

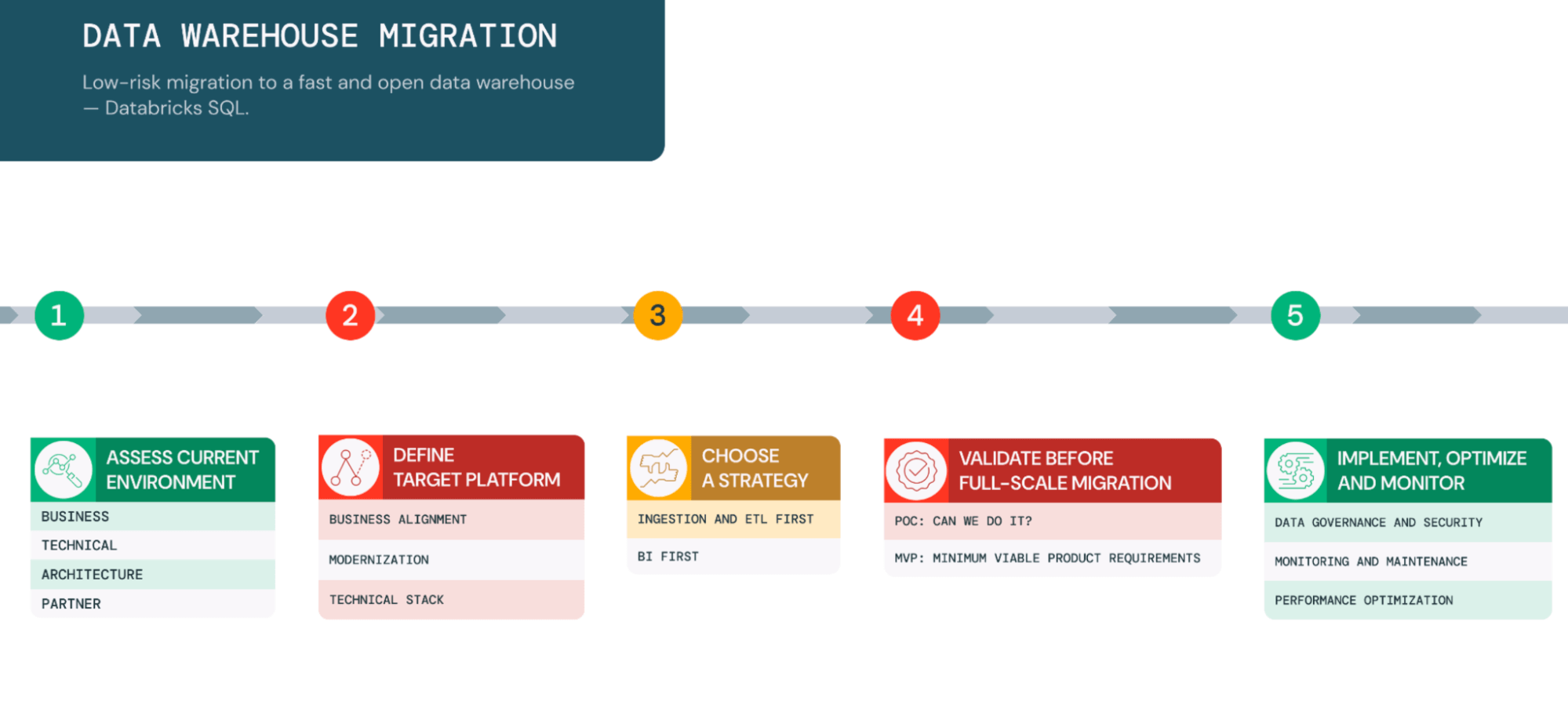

In practice, most programs adopt a hybrid strategy: migrate first to stabilize, then modernize incrementally. A common pattern is “ingestion and ETL first” to establish durable pipelines, governance, and observability as the foundation before optimizing models, performance, and cost. It is not uncommon to classify use cases as “critical/always on” and “nice to have”, migrate the former with lift and shift to preserve reliability, and modernize the latter to unlock new capabilities.

Myth 10: Migration costs are always unpredictable

Reality: Proven frameworks and validation steps ensure predictability

One of the most common misconceptions is treating migration solely from a cost perspective. While migrations indeed require financial investment - directly or indirectly - they should be viewed as strategic unlockers of new possibilities. With a carefully crafted business case, a rapid and positive Return on Investment (ROI) is not only achievable but expected.

Migration is complex, but it doesn't have to be financially risky. By employing strategies such as Proofs of Concept (POCs) or Minimum Viable Products (MVPs) during the validation phase, organizations can test feasibility and demonstrate value quickly before committing to a full-scale rollout. By prioritizing the proper use cases based on business value and unlocking new capabilities early, teams can prove the model's effectiveness without risking the entire budget.

Databricks’ proven migration framework, combined with accelerators like Lakebridge and deep domain expertise, helps execute migrations end-to-end in the most streamlined fashion. This structured approach reduces the "validation gap" and minimizes manual effort, ultimately reducing the required team size, timeline, and associated costs.

Finally, our commercial ecosystem supports financial predictability. With strong partnerships with hyperscalers, Databricks provides cost-efficient execution support. We actively provide financial support for customer migrations by investing in Certified Partners and providing Professional Services assurance, ensuring that your path to the Lakehouse is as commercially sound as it is technically robust.

Getting Started

Successful migrations are not an end state. They establish a foundation for serverless analytics, governed AI, and faster innovation — without maintaining parallel systems.

Migrations can be challenging. There will always be tradeoffs to balance and unexpected issues and delays to manage. You need proven partners and solutions for the people, process, and technology aspects of the migration. We recommend trusting the experts at Databricks Professional Services and our certified migration partners, who have extensive experience in delivering high-quality migration solutions in a timely manner. Reach out to get your migration assessment started.
