Beyond the spreadsheet: How Databricks is delivering the modern CFO in financial services

Discover how Databricks powers a real-time, AI-driven Office of the CFO

by Jennifer Miller, Marcela Granados, Andrea DeSosa, Alex Oberlander, Kim Hatton, Pavithra Rao, Naeem Rehman, Pravin Varma, Olga Deriy and Prasanna Selvaraj

- What is Databricks? A unified architecture that integrates real-time data streaming, AI-driven modeling, and centralized governance, helping CFOs shift from reactive reporting to forward-looking financial strategy.

- What problem does it solve? Finance teams spend up to 80% of their time cleaning and reconciling data across fragmented systems. Databricks eliminates this "Data and Governance Tax" by replacing slow batch cycles and siloed reporting with a single, governed data foundation.

- What results can CFOs expect? Organizations have cut regulatory reporting from 10 hours to 8 minutes, improved insurance combined ratios by 5 percentage points, and enabled a continuous close. CFOs can also query complex financial data in plain English using AI-powered natural language tools.

In the traditional halls of financial services, the Office of the CFO has long been viewed through the lens of two primary "faces": the Steward, protecting assets and ensuring compliance, and the Operator, focused on planning and retrospective reporting.

But a seismic shift is underway. Deloitte’s research on the evolving role of finance leaders reveals a stark ambition: CFOs are now expected to spend more than 60% of their time as Strategists and Catalysts, driving enterprise-wide transformation and shaping the future of the firm. Today’s finance leaders are no longer just helping the CEO "run the financial institution"; they are tasked with "changing the financial institution."

However, for most, this transition is hindered by a foundational "Data and Governance Tax." While the ambition is to lead strategy, the reality is often a struggle against legacy infrastructure that keeps teams tethered to the "Operator" role. The goal is clear: move from static, monthly forecasts to dynamic, real-time capital deployment.

The Data Problem: The Structural Friction of Modern Finance

Most financial services organizations pay this "Data and Governance Tax" in four distinct forms, each of which prevents the Office of the CFO from acting as a strategic engine and keeps CFOs trapped in the "Operator" phase:

- The Fragmentation Gap (Decoupled Systems): Data is trapped in disconnected legacy silos: sub-ledgers, vendor platforms, and core systems running on decades-old mainframes. Because the General Ledger is decoupled from raw transaction data, CFOs pay a heavy "reconciliation tax". McKinsey finds that data users can spend 30–40% of their time searching for data and a further 20–30% cleansing it, rather than analyzing it.

- The "Batch Tax" (T+1 Latency): Financial data pipelines are traditionally built on rigid, nightly batch cycles. In a world of 24/7 digital transfers and instant market volatility, T+1 is a structural liability. Without real-time visibility into intraday liquidity, CFOs must maintain oversized "lazy" cash buffers, leaving yield on the table and keeping the firm in a reactive posture during market stress.

- The Lineage Problem (Opaque Math): Regulatory reporting requirements such as CCAR, FR 2052a, and LCR demand extreme granularity. On legacy stacks, the "math" between a source transaction and a final report is often hidden in a "black box" of complex ETL code. This creates regulatory fragility, where every audit inquiry triggers an expensive, manual reconstruction of the data's journey.

- The Semantic Gap: There is a fundamental disconnect between how IT stores data (technical schemas) and how Finance speaks about it (GAAP/IFRS, NIM, HQLA). Analysts can spend up to 80% of their time as "data janitors," translating raw data into business meaning before analysis can even begin.

The Solution: Databricks

To transition from Steward to Catalyst, CFOs need a platform that doesn't just store data, but understands it. Databricks provides a unified platform that brings together real-time streaming, centralized governance and democratized data and AI.

1. Unified Lineage and Trust with Unity Catalog

Unity Catalog provides a single, governed view of all data assets, from raw transactions to the final ML models used in regulatory reporting, strategic decision-making, and forecasting. By integrating semantic standards like FIBO, it transforms this view from a simple list of files into an ontological backbone. This ensures that AI queries are not merely convenient, but inherently trustworthy, as they are anchored in industry-standard logic rather than statistical guesswork.

This framework effectively eliminates "Audit Debt" by providing comprehensive, end-to-end lineage. If a regulator questions how a liquidity ratio was calculated, the CFO doesn't just show a path of files; they can trace the semantic logic, proving exactly how a "liquid asset" was defined and mapped back to the individual transaction. This level of transparency turns a process that once took weeks of forensic manual labor into a verifiable insight available in seconds.
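To make the idea of tracing a reported ratio back to its source concrete, here is a minimal sketch of a lineage walk in plain Python. It does not use the Unity Catalog API; the `Node` class, the `trace` function, and every asset name and definition below are hypothetical and exist only to illustrate the concept.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Node:
    """One asset in a toy lineage graph (a table, a rule, or a report line)."""
    name: str
    definition: str = ""
    parents: list[Node] = field(default_factory=list)


def trace(node: Node, depth: int = 0) -> list[str]:
    """Walk upstream from a reported figure to its sources, depth-first."""
    lines = [f"{'  ' * depth}{node.name}: {node.definition}"]
    for parent in node.parents:
        lines.extend(trace(parent, depth + 1))
    return lines


# Hypothetical assets; names and definitions are illustrative only.
transactions = Node("raw.transactions", "source postings from core banking")
liquid_rule = Node("rules.liquid_asset", "asset classes counted as liquid", [transactions])
outflows = Node("calc.net_outflows", "projected net cash outflows", [transactions])
ratio = Node("report.liquidity_ratio", "liquid assets / net outflows", [liquid_rule, outflows])

for line in trace(ratio):
    print(line)
```

The point of the sketch is that each hop carries a definition as well as a name, so the answer to "how was this calculated?" includes the business rule and not only the file path.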

2. Eliminating the "Batch Tax" with Lakeflow

With Lakeflow and Spark Declarative Pipelines, the Modern CFO moves from "batch" to "continuous." The impact spans both sides of the CFO Office. For Treasury, streaming data into the Lakehouse as it happens provides a real-time view of cash concentrations and intraday risk, allowing the bank to shrink non-earning cash buffers and redeploy liquidity into higher-yielding assets instantly. For the Comptroller, Lakeflow enables real-time General Ledger processing, ingesting origination messages (loan bookings, trade settlements, payment transactions) and posting to the subledger as events occur, rather than waiting for end-of-day batch cycles. This eliminates the reconciliation lag between when a transaction happens and when it appears in the books, compressing the close cycle and keeping the GL audit-ready at all times.
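As a rough illustration of posting to the subledger as events occur, the sketch below books each event the moment it arrives and checks that debits and credits still net to zero. It is plain Python, not Lakeflow or Spark Declarative Pipelines code; the `Subledger` class, the account names, and the sample events are all assumptions made for the example.

```python
from collections import defaultdict
from decimal import Decimal


class Subledger:
    """Toy subledger that posts each event as it arrives (no nightly batch)."""

    def __init__(self) -> None:
        self.balances: dict[str, Decimal] = defaultdict(Decimal)
        self.journal: list[dict] = []

    def post(self, event: dict) -> None:
        """Book a double-entry posting: debit one account, credit another."""
        amount = Decimal(event["amount"])
        self.balances[event["debit"]] += amount
        self.balances[event["credit"]] -= amount
        self.journal.append(event)

    def is_balanced(self) -> bool:
        """Debits and credits must net to zero after every event."""
        return sum(self.balances.values()) == 0


# Hypothetical origination messages; in practice these would come from a stream.
events = [
    {"id": "loan-001", "debit": "loans_receivable", "credit": "cash", "amount": "250000.00"},
    {"id": "pay-001", "debit": "cash", "credit": "loans_receivable", "amount": "1200.50"},
]

ledger = Subledger()
for event in events:  # stand-in for a stream consumer loop
    ledger.post(event)
    assert ledger.is_balanced()  # the books are checked after every event

print(dict(ledger.balances))
```

Because the balance check runs per event rather than at end of day, there is no window in which the transaction has happened but the books do not yet reflect it.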

3. Bridging the Semantic Gap: "Speak to your Data"

Databricks uses LLMs to understand the semantics of financial data. This allows the Office of the CFO and line-of-business leaders to use Genie to query their entire financial estate in plain English. By leveraging AI on top of governed data, the "Chat CFO" can bridge the gap between "Raw Data" and "Business Insight," allowing analysts to focus on strategy rather than data preparation and reports.

4. Transparent, Governed Modeling with Agent Bricks

Critical Treasury models such as Deposit Beta, PPNR forecasting, CECL reserves, and hedging effectiveness have traditionally lived in black-box vendor tools or fragile local spreadsheets that no one else can audit or reproduce. Agent Bricks brings these models onto the same governed platform as the data they consume. Models are trained, registered, and versioned in Unity Catalog alongside the datasets they depend on, creating a single lineage chain from raw transaction data through to model output. When a regulator or internal auditor asks "how was this PPNR forecast produced?", the answer isn't a person's name; it's a traceable, reproducible pipeline.

Databricks is powering the modern CFO across financial services

In Banking and Capital Markets

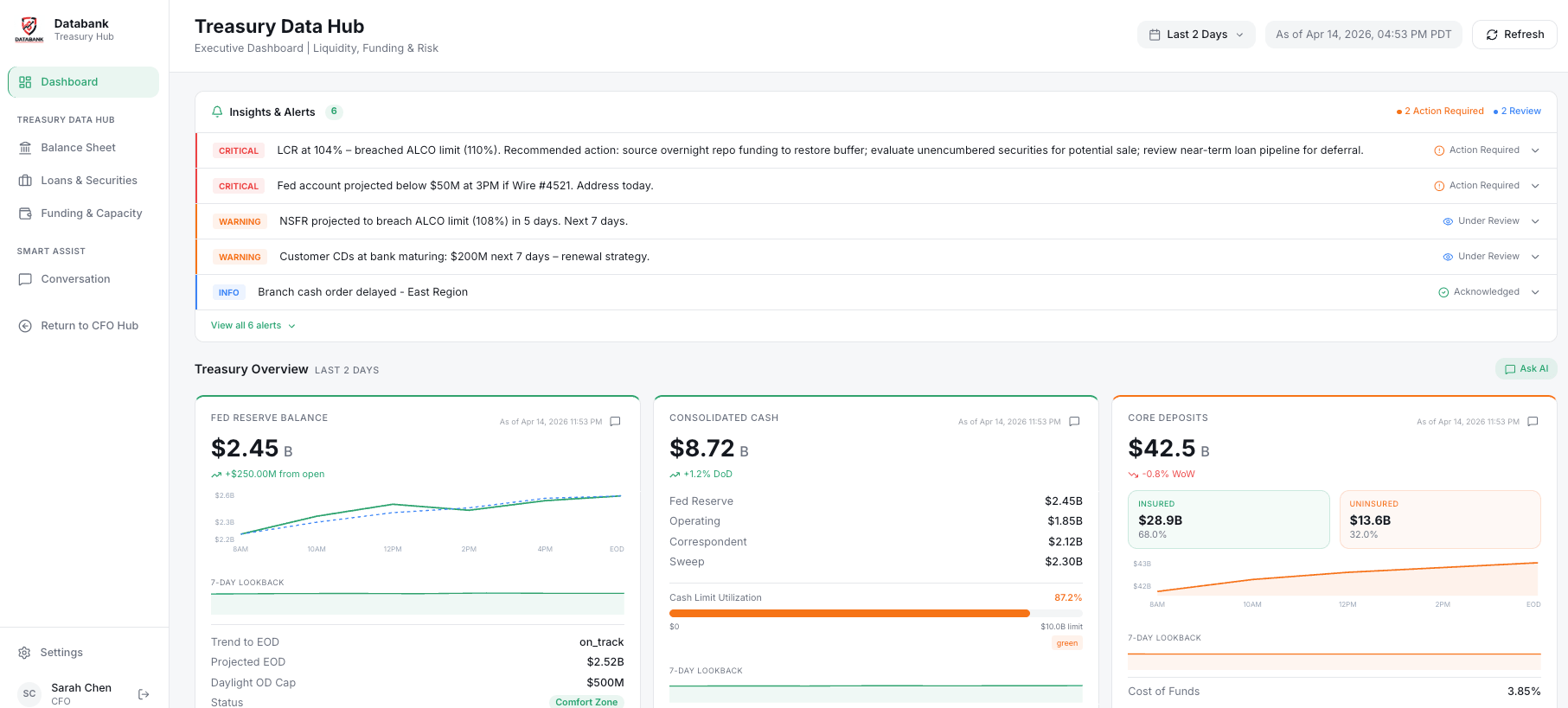

The modern bank now runs on a Unified Treasury Hub. By breaking down silos between the General Ledger and sub-ledgers to unify portfolio and market data, Databricks enables Treasurers to move from defensive reporting to offensive capital management across the following key pillars:

Interest Rate Risk & ALM: Traditional Asset Liability Management (ALM) relies on aggregated buckets. Databricks enables loan-level simulation and Net Interest Income (NII) forecasting under thousands of interest rate scenarios in minutes. This allows Treasurers to optimize the balance sheet for margin rather than just managing for "middle of the road" outcomes.
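A position-level NII simulation can be sketched in a few lines. The example below is a deliberately simplified assumption: it applies parallel rate shocks to a hypothetical three-line balance sheet, and the `pass_through` field is an illustrative stand-in for how much of a shock reprices each position. A production run would cover every loan and far richer scenarios.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    name: str
    balance: float       # positive = asset, negative = liability
    rate: float          # current annual rate, as a decimal
    pass_through: float  # share of a rate shock that reprices (0 = fixed)


def annual_nii(book: list[Position], shock: float) -> float:
    """Net interest income over one year under a parallel rate shock."""
    return sum(p.balance * (p.rate + p.pass_through * shock) for p in book)


# Hypothetical three-line balance sheet; a real run would hold every loan.
book = [
    Position("fixed_mortgage", 1_000_000, 0.05, 0.0),
    Position("floating_commercial_loan", 500_000, 0.06, 1.0),
    Position("retail_deposits", -1_200_000, 0.02, 0.4),
]

# Parallel shocks from -200bps to +200bps in 50bps steps.
scenarios_bps = range(-200, 201, 50)
results = {bps: annual_nii(book, bps / 10_000) for bps in scenarios_bps}

for bps, nii in results.items():
    print(f"{bps:+5d} bps -> NII {nii:,.0f}")
```

Working at the position level rather than in aggregated buckets means the same loop can be sliced by product, segment, or branch without rebuilding the model.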

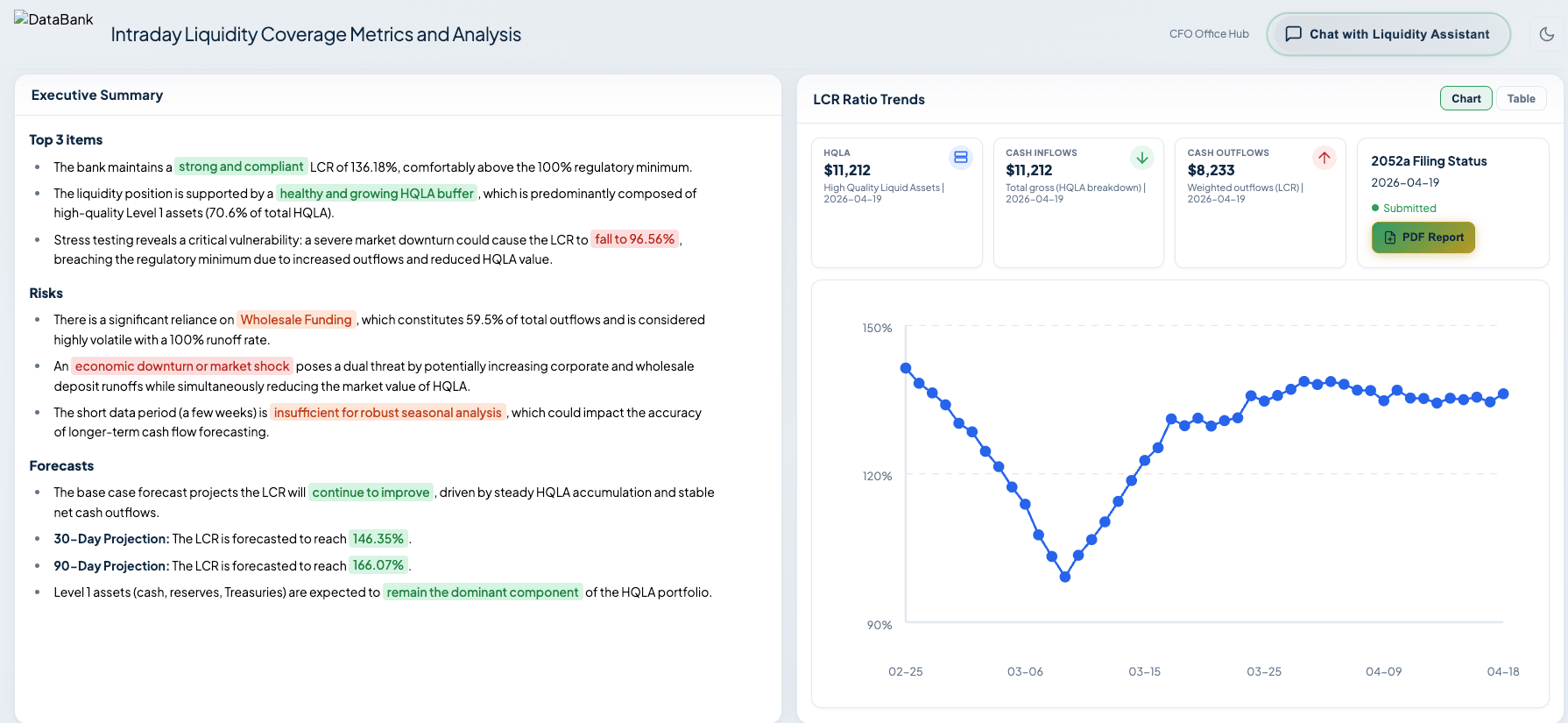

Funding & Liquidity (LCR, ILST, FR 2052a): Using a Unified Data Hub that integrates internal cash flows with reference market data, Treasury teams can monitor intraday liquidity and funding risks as they happen. Predictive AI models flag potential breaches in Liquidity Coverage Ratios (LCR) or Internal Liquidity Stress Tests (ILST) hours before they occur, allowing for proactive rebalancing.
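For reference, the Basel III Liquidity Coverage Ratio divides the stock of HQLA by total net cash outflows over the next 30 days, with inflows capped at 75% of outflows, and the ratio must stay at or above 100%. The sketch below applies that formula to a few intraday snapshots. It is a simple threshold rule, not the predictive models described above; HQLA haircuts are ignored, and the 110% early-warning level and all figures are hypothetical.

```python
def lcr(hqla: float, outflows_30d: float, inflows_30d: float) -> float:
    """LCR = HQLA / net outflows, with inflows capped at 75% of outflows."""
    net_outflows = outflows_30d - min(inflows_30d, 0.75 * outflows_30d)
    return hqla / net_outflows


def status(ratio: float, minimum: float = 1.00, early_warning: float = 1.10) -> str:
    """Classify a ratio; the early-warning buffer is an illustrative assumption."""
    if ratio < minimum:
        return "BREACH"
    if ratio < early_warning:
        return "WARNING"
    return "OK"


# Hypothetical intraday snapshots: (time, HQLA, 30-day outflows, 30-day inflows).
snapshots = [
    ("09:00", 120.0, 100.0, 20.0),
    ("12:00", 95.0, 110.0, 20.0),
    ("15:00", 80.0, 120.0, 30.0),
]

for time, hqla, outflows, inflows in snapshots:
    ratio = lcr(hqla, outflows, inflows)
    print(f"{time}  LCR {ratio:.1%}  {status(ratio)}")
```

Running this check on every snapshot instead of once a day is what turns a breach from an after-the-fact finding into something Treasury can rebalance against.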

Intraday Liquidity Coverage Metrics and Analysis

Capital Planning & Adequacy (CCAR): Instead of being treated as a manual annual exercise, capital adequacy becomes a continuous process. By unifying risk and finance data, teams can automate the heavy lifting of Comprehensive Capital Analysis and Review (CCAR) reporting, running "what-if" scenarios on capital buffers to see the impact of new business lines or macroeconomic shifts in real time.

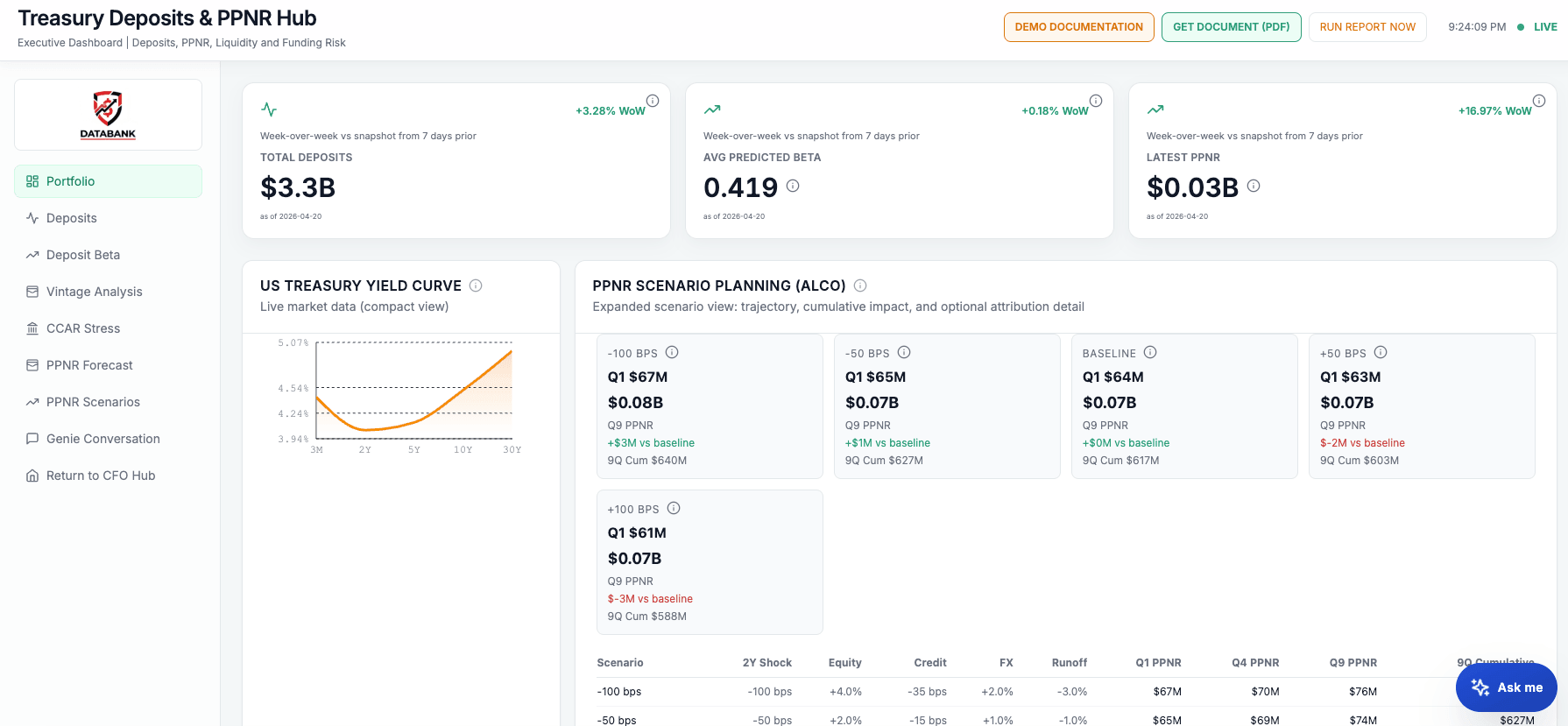

AI-driven Deposit Scenario Planning: Treasurers move beyond historical averages to achieve the pricing precision necessary to maximize NIM and PPNR. AI models estimate deposit "stickiness" under stress and replace blanket adjustments with surgical recommendations. For example, following a 25bps market increase, AI identifies "high-inertia" retail segments requiring only a 5bps hike to maintain retention while recommending a 22bps increase for rate-sensitive commercial accounts. This targeted calibration protects the balance sheet from "hot money" outflows while containing interest expense and preserving spread.
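The 5bps and 22bps figures in this example translate directly into segment-level deposit betas (the deposit rate change divided by the market rate change). The sketch below works through that arithmetic and compares the targeted hikes with a blanket adjustment. The segment balances and the 22bps blanket comparison are hypothetical assumptions added for illustration; only the 25bps, 5bps, and 22bps moves come from the example above.

```python
MARKET_MOVE_BPS = 25  # market rate increase from the example above

# Hike sizes come from the example; balances are hypothetical.
segments = {
    "high_inertia_retail": {"balance": 800_000_000, "hike_bps": 5},
    "rate_sensitive_commercial": {"balance": 200_000_000, "hike_bps": 22},
}


def beta(hike_bps: float, market_move_bps: float = MARKET_MOVE_BPS) -> float:
    """Deposit beta: share of the market move passed through to depositors."""
    return hike_bps / market_move_bps


def added_annual_interest(balance: float, hike_bps: float) -> float:
    """Extra yearly interest expense from raising the rate by hike_bps."""
    return balance * hike_bps / 10_000


targeted_cost = sum(
    added_annual_interest(s["balance"], s["hike_bps"]) for s in segments.values()
)

# Hypothetical blanket alternative: give every segment the 22bps hike.
total_balance = sum(s["balance"] for s in segments.values())
blanket_cost = added_annual_interest(total_balance, 22)

for name, s in segments.items():
    print(f"{name}: beta {beta(s['hike_bps']):.2f}")
print(f"targeted cost {targeted_cost:,.0f} vs blanket cost {blanket_cost:,.0f}")
```

Under these assumed balances, the retail segment's beta is 0.20 and the commercial segment's is 0.88, which is why a single blended average hides most of the pricing opportunity.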

Treasury Deposits & PPNR Hub. Executive Dashboard for Deposits, PPNR, Liquidity and Funding Risk

Fit-for-purpose regulatory reporting: Databricks transforms compliance from a periodic obligation into a continuous capability. FR 2052a and LCR outputs derive from the same streaming data powering intraday Treasury monitoring, with no separate extraction and no reconciliation lag. Auditor questions are answered in seconds, not weeks of forensic reconstruction. Stress-testing models are versioned and reused across CCAR, CECL, and ILST regimes, reducing the cost of adaptation as regulations evolve. This gives the CFO the confidence to stand behind every number.

In Insurance

Just as in banking, insurance finance functions are constrained by structural data friction that keeps the Office of the CFO in a reactive, reporting-only posture. The core problems echo the broader "Data and Governance Tax," but show up through insurance-specific systems and regulatory frameworks:

The Policy & Claims Fragmentation Gap:

Policy administration systems, claims platforms, reinsurance ledgers, and investment portfolios all live in disconnected silos. Actuarial teams spend 30–40% of their time reconciling data across these systems before meaningful analysis or decision-support can begin.

The "Batch Tax" in Reserving & Close:

Monthly or quarterly close cycles force actuaries and finance teams to work off stale snapshots. In a world of real-time catastrophe events and volatile capital markets, T+1 or T+30 becomes a structural liability for dynamic reserving, solvency monitoring, and capital deployment.

The Lineage Problem in Regulatory Reporting:

Regulatory and accounting frameworks such as IFRS 17, Solvency II, NAIC RBC, and LDTI demand granular, end-to-end auditability. On legacy stacks, the "math" between a policy transaction and a final reserve or capital number is buried in opaque actuarial tools and custom code, creating regulatory fragility and expensive manual audit reconstruction.

The Semantic Gap Between Actuarial and Finance:

Actuaries speak in loss development triangles, exposure units, tail factors, and catastrophe curves; Finance speaks in GAAP/IFRS line items, combined ratios, and capital ratios. Manually translating between these worlds consumes analyst bandwidth, introduces reconciliation risk, and slows decision-making.

The Solution: Databricks for Insurance Finance

To move from backward-looking reporting to real-time solvency, pricing, and capital optimization, insurance CFOs need a platform that unifies data, models, and governance on a single, intelligent foundation.

- Unified Lineage and Trust with Unity Catalog

Unity Catalog creates a single governed view from raw policy and claims transactions through actuarial models to final financial statements. By integrating insurance semantic standards such as the ACORD data model as an ontological backbone, it provides an end-to-end audit trail for IFRS 17 CSM calculations, LDTI remeasurements, and RBC reporting. Regulators and auditors get transparent, traceable answers in seconds instead of weeks of manual reconstruction.

- Eliminating the "Batch Tax" with Lakeflow

Lakeflow continuously ingests policy endorsements, claims events, and investment transactions into the Lakehouse as they occur. Real-time subledger posting removes the reconciliation lag between claims payments and reserve movements, enabling a continuous close posture where the GL is always audit-ready and both statutory and GAAP close cycles are compressed.

- Bridging the Semantic Gap: "Speak to Your Data"

With Genie on top of governed data, CFOs, controllers, and business unit leaders can query the full financial estate in plain English. Questions like, "What is our current combined ratio by line of business if catastrophe losses exceed $500M?" are answered in seconds, not days. This bridges the actuarial-to-finance translation layer and frees analysts from data janitorial work so they can focus on pricing, reserving, and capital strategy.

- Transparent, Governed Modeling with Agent Bricks

Critical insurance models (loss reserves, IBNR, reinsurance recoverables, CSM roll-forward, asset-liability matching, and more) move off fragile spreadsheets and black-box actuarial vendor tools onto the same governed platform as the data they consume. Models are trained, versioned, and registered in Unity Catalog, creating full reproducibility and a single lineage chain from raw transactions to model outputs. When auditors or regulators ask, "How was this reserve or capital number produced?" the answer is a traceable, governed pipeline, not a person’s desktop workbook.
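The sample question above about combined ratio by line of business under a $500M catastrophe scenario comes down to simple arithmetic once the data is unified. The sketch below uses the common simplified definition, combined ratio = (incurred losses + expenses) / earned premium. Every figure, the line-of-business names, and the way the $500M is split across lines are hypothetical assumptions; this is not how Genie computes an answer.

```python
def combined_ratio(losses: float, expenses: float, earned_premium: float) -> float:
    """Simplified combined ratio: (incurred losses + expenses) / earned premium."""
    return (losses + expenses) / earned_premium


# Hypothetical book, in $M. cat_loss is an assumed split of a $500M event.
lines_of_business = {
    "property": {"premium": 2000.0, "losses": 1100.0, "expenses": 600.0, "cat_loss": 400.0},
    "auto": {"premium": 1500.0, "losses": 1000.0, "expenses": 400.0, "cat_loss": 100.0},
}

report = {}
for name, lob in lines_of_business.items():
    base = combined_ratio(lob["losses"], lob["expenses"], lob["premium"])
    stressed = combined_ratio(
        lob["losses"] + lob["cat_loss"], lob["expenses"], lob["premium"]
    )
    report[name] = (base, stressed)
    # Report the change in percentage points, the unit used for ratio movements.
    print(f"{name}: {base:.1%} -> {stressed:.1%} ({(stressed - base) * 100:+.1f}pp)")
```

A ratio above 100% means the line is paying out more in losses and expenses than it earns in premium, so the stressed column shows at a glance which lines tip into underwriting loss.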

This isn't theory: Databricks is powering the modern CFO

- A top global bank: By solving the fragmentation problem, the bank reduced regulatory data processing times for liquidity reporting from 10 hours to just 8 minutes. This 75x performance gain allows its Treasury team to respond to market volatility with unprecedented speed.

- Nationwide Insurance: Nationwide’s finance and marketing teams relied on fragile spreadsheet‑driven BI and SAS‑based risk models. Infrastructure overhead and long, manual reporting cycles meant critical pricing and performance insights took weeks instead of minutes, limiting their ability to react to market conditions. They built a Data Harmonization Framework with Spark Declarative Pipelines and Serverless SQL Warehouses to replace spreadsheet workflows. This resulted in a 5pp improvement in combined ratio and a 3pp improvement in expense ratio.

- Databricks runs on Databricks: Our own Office of the CFO uses the platform to unify transactional data (ERP, CRM, HRIS, planning) into a governed, single source of truth. Internal adoption of the Databricks platform as customer zero drives measurable outcomes:

- Faster close and forecast cycles

- Elimination of “what’s the right number” debates, plus seamless spreadsheet data ingestion, via a unified single source of truth

- AI-driven analytics, insights, and forecasts in natural language with Genie

- Audit readiness for SOX compliance via Unity Catalog

Legacy systems weren't built for the speed of the AI revolution. The office of the CFO must move past the era of multiple fragmented point solutions for Data + AI. Databricks is the backbone of the CFO stack of the future. By unifying every transactional signal into a single, governed source of truth, we’re moving from reporting the past to using AI to run our business in real time. — Dave Conte, CFO of Databricks

Conclusion: The New Standard for CFO Leadership

The "CFO Stack of the Future" is a unified platform where transactions, predictive analytics, and AI converge.

As the role of the CFO continues to evolve, the stakes have never been higher. Those who continue to operate in T+1 batch mode will find themselves constrained by "lazy capital" and rising regulatory costs. Conversely, the CFOs who embrace a Lakehouse architecture are transforming their departments from cost centers that report on value into strategic hubs that create value.

This post marks the beginning of our exploration into the modernization of the finance office. While we have established the architectural requirements for the Modern CFO, the true test of this platform lies in its application to the industry’s most volatile variables.

In the second part of this series, we will move from architectural strategy to functional execution. We will go deeper into AI-driven Deposit Scenario Planning and PPNR optimization, demonstrating how AI-driven Treasury Deposits & PPNR modeling allows leaders to move beyond historical "beta" averages to achieve the surgical pricing precision necessary to protect the balance sheet and maximize earnings in real time.

See it in action today

The future of finance isn't just data-driven; it's intelligence-led. The transition to a Modern CFO office starts with seeing what’s possible on your own data. To see these workflows in action, including a live demo of the Unified Treasury Hub and our AI-driven scenario planning, reach out to your Databricks account team today.

Stay tuned for Part 2: “Driving PPNR Performance with Real-Time Deposit Intelligence”
