Mercedes-Benz builds a cross-cloud data mesh with Delta Sharing and intelligent replication, cutting costs by 66%

How a luxury automaker built a cross-cloud and cross-region data mesh using Delta Sharing, balancing freshness and egress cost with intelligent replication

- Mercedes-Benz built a cross-cloud data mesh with Databricks Delta Sharing and local replication (Delta Deep Clone) to securely exchange after-sales data between AWS and Azure.

- Delta Sharing's flexibility lets Mercedes-Benz balance data freshness against egress cost across clouds and regions.

- For large, frequently accessed datasets, Mercedes-Benz used Deep Clone on top of Delta Sharing to update data incrementally, reducing egress costs by 66%.

Mercedes-Benz, one of the world's most recognizable luxury automotive brands, is currently navigating two major industry shifts: digitization and the transition to electric vehicles. This era is defined by the concept of the "data-defined vehicle".

- From Hardware to Data: Vehicles were first hardware-defined, then software-defined; the industry is now entering the era of data-defined vehicles. This shift means that data, including vehicle telemetry and customer information, is the core asset driving product improvement and customer experience.

- The Need for Data Sharing: To build this data-defined vehicle, business units such as Research & Development (R&D), After-Sales, and Marketing must be able to share data seamlessly, securely, and cost-effectively. Mercedes-Benz aimed to replace earlier insecure or inefficient transfer methods, such as FTP servers and email, with a robust, central data-sharing marketplace.

The critical challenge arose from the company's multi-cloud architecture (AWS and Azure). Data consumers on Azure needed access to large, frequently updated after-sales datasets primarily stored in AWS. This cross-cloud access led to high egress costs and posed significant technical hurdles for ensuring data freshness.

The Business Challenge: High Egress Costs and Data Silos

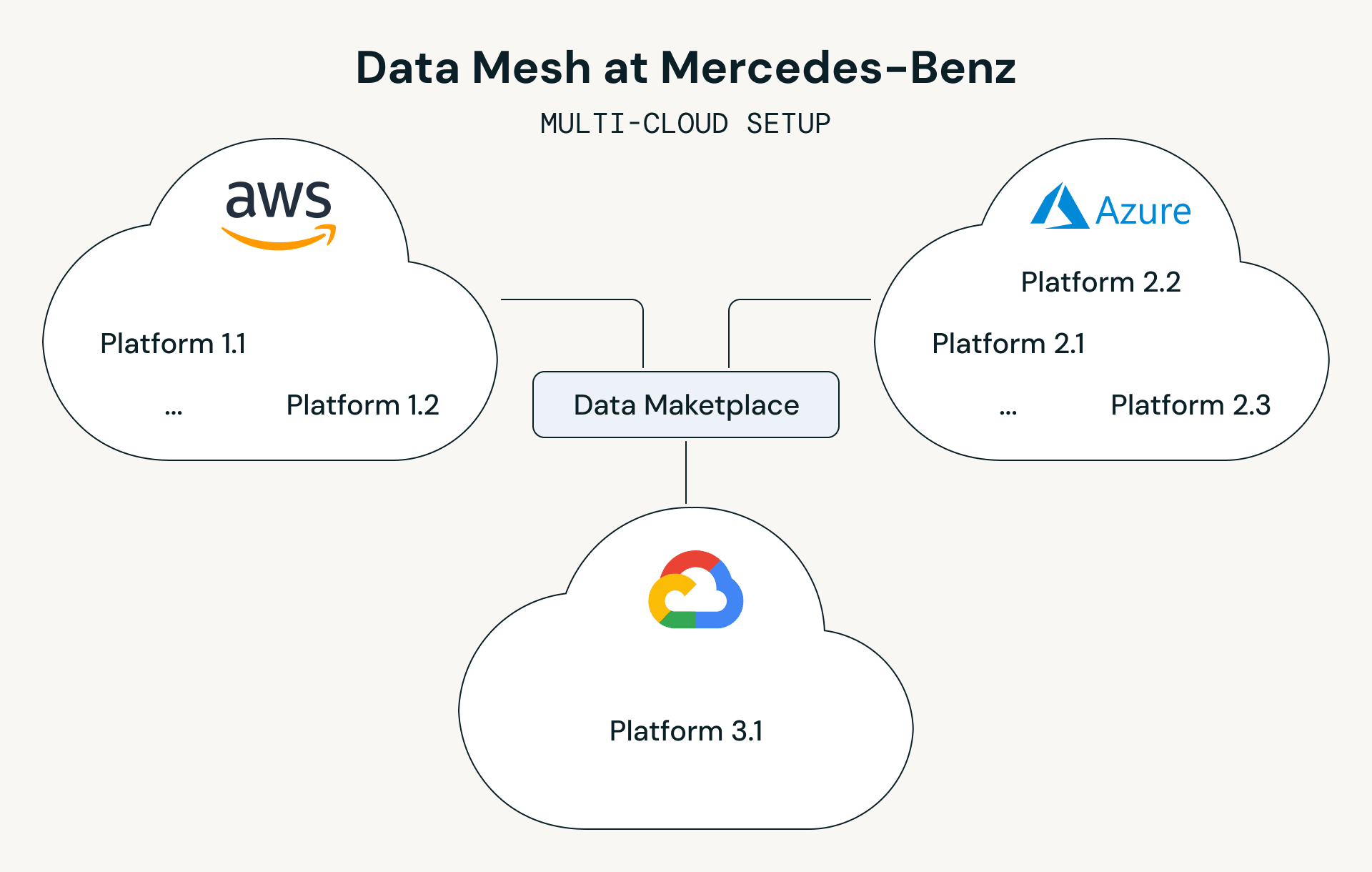

Mercedes-Benz operates a multi-cloud setup, utilizing AWS and Azure, along with a multi-region setup within those clouds. This approach allows them to select the hyperscaler services that best fit specific technical requirements.

A crucial example involves their after-sales data, which includes information from vehicle over-the-air events and workshop visits. This data is vital for improving components in research and development (R&D) and analyzing warranty cases.

- Data Volume: The core after-sales data is substantial, with a subset of approximately 60 TB needed to serve dozens of use cases running on Azure. This volume is continually growing.

- Cost Barrier: When Azure-based consumers queried this large dataset directly on AWS, every read moved data across clouds, so egress costs became a concern for cost-sensitive use cases. Direct access remained suitable for certain real-time analytics needs, but the team sought a more economical approach for less time-sensitive workloads.

- Data Latency and Freshness: Prior to the new solution, the full dataset was often copied over as a weekly full load. Data consumers requested more frequent updates, but full loads every day were too expensive. A delay of seven days could be critical when reacting to warranty cases.

- Data Format Compatibility: The original data on AWS was in the Iceberg format, while many data consumers on the Azure side expected a Delta-compatible format.
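To see why daily full loads were hard to justify, a rough back-of-the-envelope model helps. The Python sketch below assumes that each full load copies the roughly 60 TB subset mentioned above and uses a placeholder price per GB. The function names and the unit price are illustrative assumptions, not figures from Mercedes-Benz or from any cloud price list.

```python
GB_PER_TB = 1000  # decimal units, good enough for a rough estimate


def weekly_egress_gb(dataset_gb: float, full_loads_per_week: int) -> float:
    """Egress volume per week when every load copies the whole dataset."""
    return dataset_gb * full_loads_per_week


def weekly_egress_cost(egress_gb: float, price_per_gb: float) -> float:
    """Approximate weekly egress cost for a given volume and unit price."""
    return egress_gb * price_per_gb


# Assumption: one full load moves the ~60 TB subset named in the text.
DATASET_GB = 60 * GB_PER_TB
# Assumption: placeholder unit price, NOT a real quote.
HYPOTHETICAL_PRICE_PER_GB = 0.05

weekly_volume = weekly_egress_gb(DATASET_GB, full_loads_per_week=1)
daily_volume = weekly_egress_gb(DATASET_GB, full_loads_per_week=7)

print(f"weekly full load: {weekly_volume:,.0f} GB/week")
print(f"daily full loads: {daily_volume:,.0f} GB/week")
print(f"volume ratio: {daily_volume / weekly_volume:.0f}x")
```

Whatever the actual unit price, moving from a weekly to a daily full load multiplies the transferred volume by seven, which is why fresher data required an incremental approach rather than more frequent full copies.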

The Solution: A Hybrid Delta Sharing and Replication Strategy

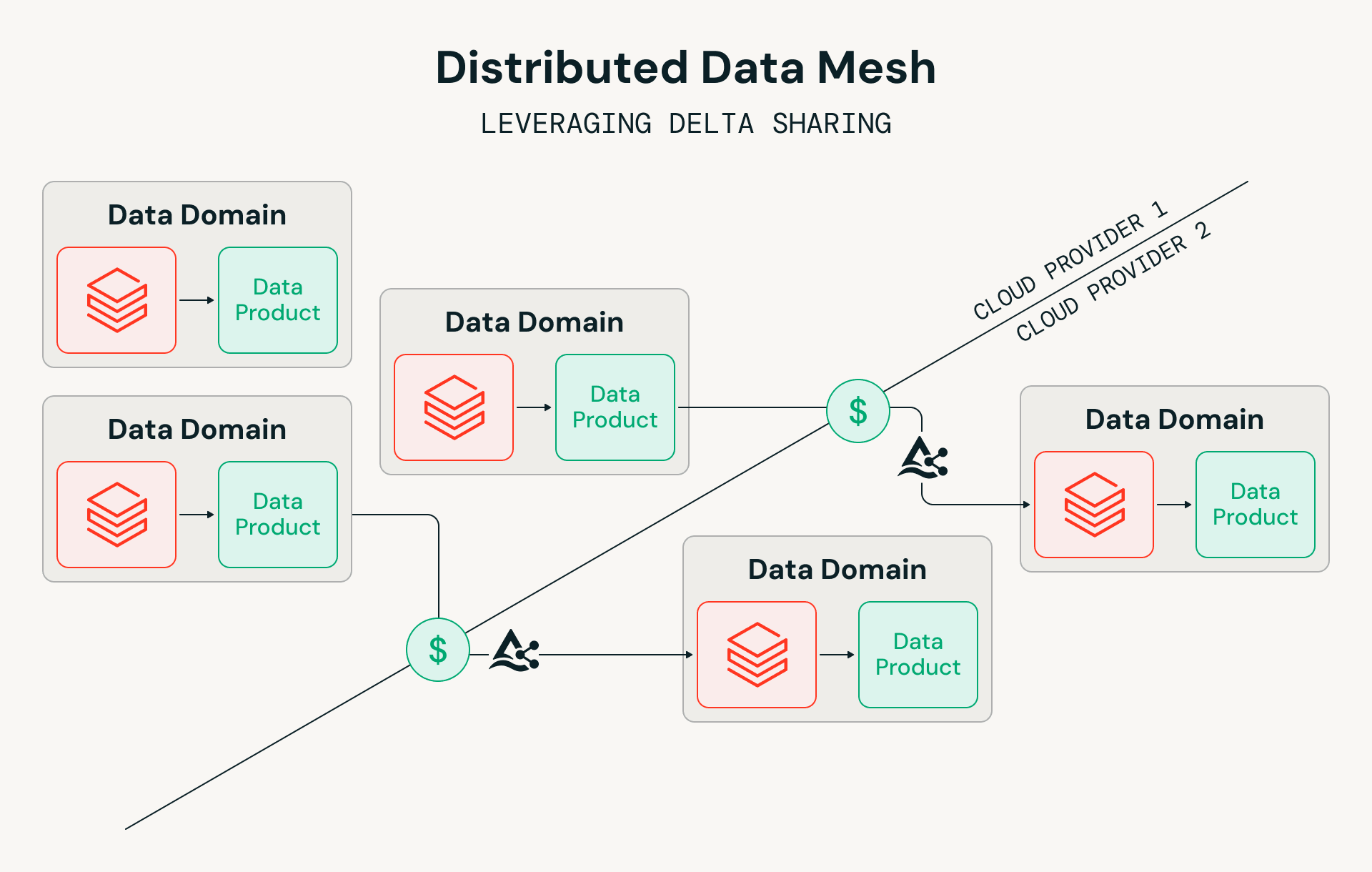

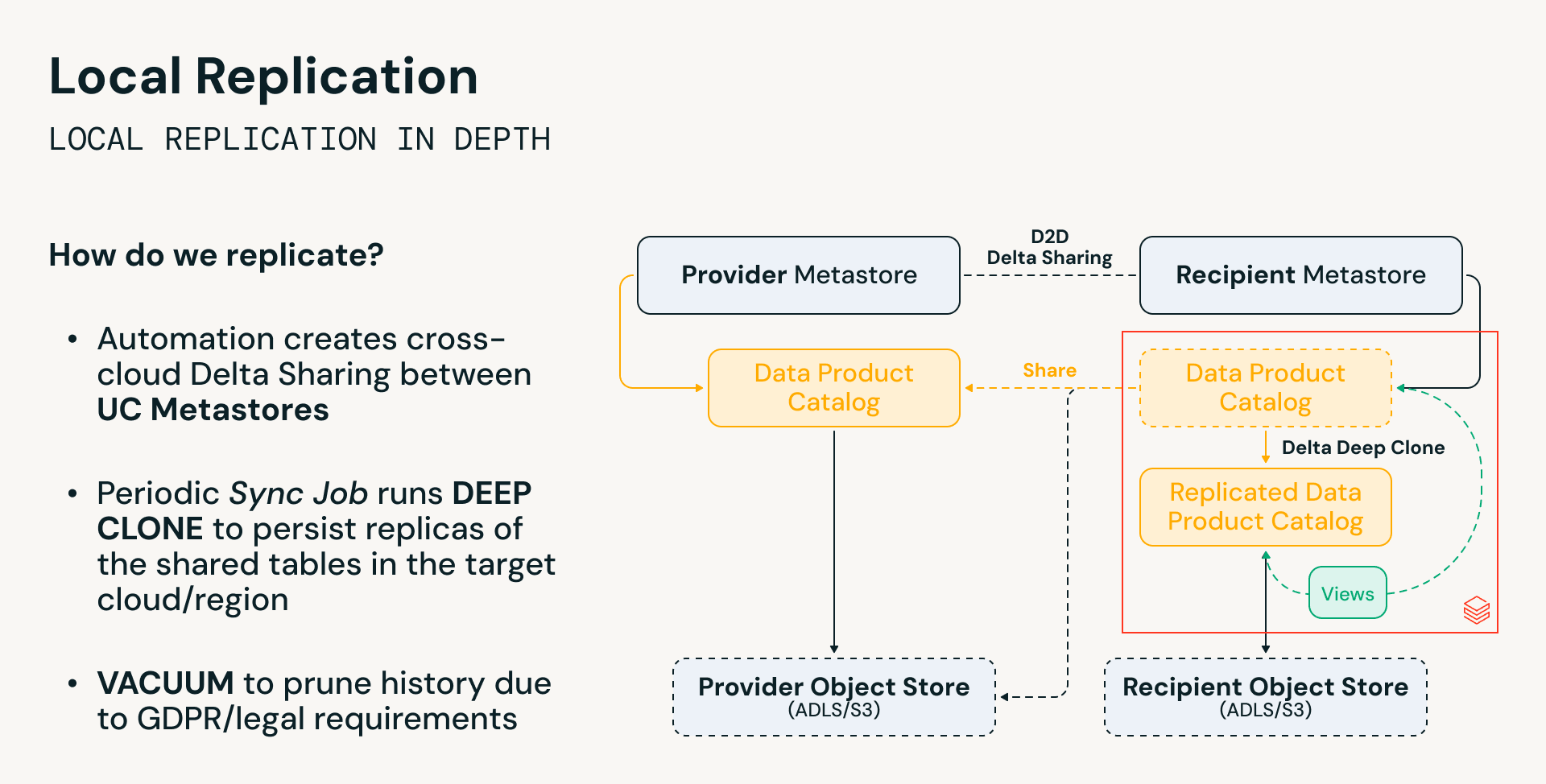

Mercedes-Benz implemented a technical solution that combined the secure data exchange capability of Databricks Delta Sharing with a controlled local replication mechanism (Delta Deep Clone) to address the recurrent egress costs associated with sharing large, highly demanded datasets.

Unity Catalog and Delta Sharing: The Foundation

The solution is anchored in the Databricks Data Intelligence Platform, built upon Unity Catalog (UC) and Delta Sharing.

- Unity Catalog (UC): UC functions as the global catalog for all data products across the enterprise. It centralizes metadata, manages access, and enables a "hub-and-spoke" governance model, making data visible to other teams while producers keep control. UC also simplified onboarding: tables are federated from AWS Glue and registered directly in Unity Catalog, where they can then be shared.

- Delta Sharing: Delta Sharing serves as the open protocol for securely exchanging data between different UC metastores, across regions, and across hyperscalers (AWS to Azure). It was chosen because it is an open-source technology and supports incremental data updates.
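As a rough illustration of what setting up such a share involves, the Python sketch below generates the kind of Databricks SQL statements used for Databricks-to-Databricks sharing. The statement shapes follow Databricks SQL syntax as commonly documented, but every share, recipient, catalog, and table name is hypothetical, and the helper functions are not part of any Mercedes-Benz or Databricks tooling. Check the statements against the current Databricks documentation before running them.

```python
from typing import List


def provider_share_statements(
    share: str, tables: List[str], recipient: str, recipient_sharing_id: str
) -> List[str]:
    """Build SQL for the provider metastore (AWS side in the article)."""
    stmts = [f"CREATE SHARE IF NOT EXISTS {share}"]
    # Add each Unity Catalog table to the share.
    stmts += [f"ALTER SHARE {share} ADD TABLE {table}" for table in tables]
    # Register the recipient metastore by its sharing identifier.
    stmts.append(
        f"CREATE RECIPIENT IF NOT EXISTS {recipient} "
        f"USING ID '{recipient_sharing_id}'"
    )
    stmts.append(f"GRANT SELECT ON SHARE {share} TO RECIPIENT {recipient}")
    return stmts


def recipient_catalog_statement(catalog: str, provider: str, share: str) -> str:
    """Build SQL for the recipient metastore (Azure side in the article)."""
    return f"CREATE CATALOG IF NOT EXISTS {catalog} USING SHARE {provider}.{share}"


# Hypothetical names, for illustration only.
for stmt in provider_share_statements(
    share="aftersales_share",
    tables=["aftersales.core.workshop_visits"],
    recipient="azure_consumers",
    recipient_sharing_id="<sharing-identifier-of-recipient-metastore>",
):
    print(stmt)
print(recipient_catalog_statement("aftersales_shared", "aws_provider", "aftersales_share"))
```

Generating the statements as plain strings keeps the sketch runnable anywhere; in a workspace they would be executed by whatever SQL runner the platform team already uses.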

Delta Sharing is used in three main configurations within the Mercedes-Benz data mesh:

- Cross-Cloud/Cross-Hyperscaler Sharing: This is the primary use case, bridging the gap between AWS and Azure. It leverages the unified Databricks platform on both sides to use the same technology across clouds.

- Cross-Region/Cross-Metastore Sharing: Delta Sharing is utilized internally between different regions in the same cloud.

- External Sharing: The solution enables sharing data with external partners, like suppliers, who may also be using Databricks or Delta Sharing. This is a more secure way to receive data than sending around secrets or using FTP.

Hybrid Approach: Local Replication to Minimize Egress

Recognizing that not all use cases require real-time data freshness, Mercedes-Benz designed a controlled, incremental replication approach for large, heavily accessed datasets where cost efficiency was prioritized over sub-hourly freshness.

- Cross-Cloud Share: Delta Sharing is configured between the Provider Metastore (AWS) and the Recipient Metastore (Azure).

- Periodic Sync Job: Automated Sync Jobs run periodically, utilizing Delta Deep Clone to persist replicas of the shared tables in the recipient cloud's object store (ADLS/S3).

- Incremental Updates: Deep Clone enables the process to update data incrementally, so the full dataset is not copied over constantly, saving cost.

- Local Consumption: Data consumers on Azure query the replicated data locally on Azure, drastically reducing cross-cloud data movement and the high associated egress costs.
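The four steps above can be sketched as a small sync routine. This is a minimal illustration rather than the actual Mercedes-Benz Sync Job: it assumes the shared tables are already mounted as a catalog on the recipient side, it relies on re-running `CREATE OR REPLACE TABLE ... DEEP CLONE` to bring an existing replica up to date, and all table names as well as the `run_sync` helper are hypothetical.

```python
from typing import Callable, Iterable, List, Tuple


def deep_clone_statement(source: str, target: str) -> str:
    """SQL that creates the replica or refreshes it from the shared table."""
    return f"CREATE OR REPLACE TABLE {target} DEEP CLONE {source}"


def run_sync(
    run_sql: Callable[[str], object],
    table_pairs: Iterable[Tuple[str, str]],
) -> List[str]:
    """Run one sync pass over (shared_table, local_replica) pairs.

    `run_sql` is injected so the sketch stays testable outside Databricks.
    """
    executed = []
    for source, target in table_pairs:
        stmt = deep_clone_statement(source, target)
        run_sql(stmt)
        executed.append(stmt)
    return executed


# Hypothetical table names; `print` stands in for a real SQL runner.
pairs = [
    ("aftersales_shared.core.workshop_visits", "replica.core.workshop_visits"),
    ("aftersales_shared.core.ota_events", "replica.core.ota_events"),
]
run_sync(print, pairs)
```

On a Databricks cluster, `run_sql` would typically be `spark.sql`, and the routine would be scheduled as a periodic job so that consumers only ever query the local replicas.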

This architecture reflects Delta Sharing's core strength, flexibility: users can choose between high data freshness at higher cost (direct Delta Shares) or lower data freshness at minimal cost and latency (locally replicated data). This tiered approach allows Mercedes-Benz to serve diverse use cases efficiently.
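One way to picture this tiering is as a simple rule that compares how stale a use case can tolerate its data being with how often the replica is refreshed. The sketch below is purely illustrative: the `AccessMode` enum, the function name, and the rule itself are assumptions, not the policy Mercedes-Benz applies.

```python
from enum import Enum


class AccessMode(Enum):
    DIRECT_SHARE = "direct_share"    # freshest data, egress on every read
    LOCAL_REPLICA = "local_replica"  # periodically synced, read locally


def choose_access_mode(
    max_staleness_hours: float, sync_interval_hours: float
) -> AccessMode:
    """Pick the cheaper tier whenever the replica is fresh enough."""
    if max_staleness_hours < sync_interval_hours:
        # The replica could be older than the use case tolerates.
        return AccessMode.DIRECT_SHARE
    return AccessMode.LOCAL_REPLICA


# Hypothetical use cases with a replica refreshed every 48 hours.
print(choose_access_mode(max_staleness_hours=1, sync_interval_hours=48))
print(choose_access_mode(max_staleness_hours=72, sync_interval_hours=48))
```

A real decision would also weigh dataset size and read frequency, since a small or rarely read table may not be worth replicating at all.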

Technical Implementation and Best Practices

The team had the end-to-end solution ready in just a few weeks. To ensure scalability, security, and accurate cost management, Mercedes-Benz incorporated several operational and architectural best practices:

- Dynamic Data eXchange (DDX) Orchestrator: DDX plays a central role as a self-service meta-catalog. DDX automates permission management (granting permissions via microservices and Databricks APIs), Sync Job management, and data sharing/replication workflows.

- Automation with Databricks Asset Bundles (DABs): The deployment of Sync Jobs and configuration is fully automated using DABs and YAML-driven deployments via Azure DevOps. This ensures a robust, full DevOps approach.

- Cost Tracking and Attribution: The Sync Jobs record the exact amount of data transferred. A separate Reporting Job aggregates this data daily to calculate the approximate egress cost per Data Product, which is then used to bill the upstream data producers. This cost dashboard also tracks compute costs for the Sync Jobs.

- GDPR and Governance: The solution addresses GDPR concerns by running Delta Lake VACUUM on the replicated tables, so that data deleted on the source side is also removed from the recipient side instead of lingering in old data files.
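The cost attribution step described above amounts to a small aggregation. The following sketch assumes that each sync run logs a data product name and the number of bytes it transferred; the record layout, the function name, and the unit price are hypothetical and only show the shape of the calculation.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

BYTES_PER_GB = 1_000_000_000  # decimal GB, for a rough estimate


def egress_cost_per_product(
    transfers: Iterable[Tuple[str, int]], price_per_gb: float
) -> Dict[str, float]:
    """Sum transferred bytes per data product and price them per GB."""
    total_bytes: Dict[str, int] = defaultdict(int)
    for product, transferred_bytes in transfers:
        total_bytes[product] += transferred_bytes
    return {
        product: nbytes / BYTES_PER_GB * price_per_gb
        for product, nbytes in total_bytes.items()
    }


# Hypothetical sync log entries: (data product, bytes transferred).
sync_log = [
    ("workshop_visits", 2_000_000_000),
    ("ota_events", 500_000_000),
    ("workshop_visits", 1_000_000_000),
]
# Assumption: placeholder unit price, NOT a real quote.
for product, cost in egress_cost_per_product(sync_log, price_per_gb=0.05).items():
    print(f"{product}: approx. {cost:.2f} per day")
```

Keeping the aggregation separate from the sync itself mirrors the split between Sync Jobs and the Reporting Job, and makes it easy to change the unit price without touching the transfer logs.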

Quantitative Benefits and ROI

The cross-cloud data mesh solution yielded significant and measurable business results, transforming the economic model for data sharing at Mercedes-Benz.

1. Reduced OPEX / Egress Costs

By leveraging Delta Sharing's incremental update capabilities and intelligent replication via Deep Clone, Mercedes-Benz optimized data freshness while reducing egress costs.

- Egress Cost Reduction: The egress costs for the initial 10 data products dropped by 66%.

- ROI on Egress: This corresponds to a reduction of approximately two thirds in weekly egress costs. Compared with a scenario in which 50 use cases consume the data directly from AWS, the approximate annual egress cost was reduced by 93%.

2. Increased Data Freshness and Business Agility

The ability to sync data incrementally allowed the frequency of updates for Azure consumers to be dramatically increased.

- Improved Freshness: Data consumers now receive fresh data more frequently (e.g., every other day) instead of waiting a full seven days. This prevents critical delays in reacting to issues such as warranty cases.

3. Reduced IT Operations Cost

Running the synchronization process on fully serverless Databricks Jobs lowered compute expenses and operational overhead.

- Operational Stability: The jobs are running "more or less without any problem and without any intervention," minimizing IT operations cost.

Strategic Impact: The Data-Defined Vehicle

The centralized and cost-efficient data sharing framework is essential to Mercedes-Benz’s vision of the "data-defined vehicle".

Delta Sharing and the resulting data mesh help connect previously isolated data sources, such as after-sales data, with research and development, marketing, and sales colleagues. This creates a holistic view of the vehicle and the customer, accelerating the company’s mission toward digitization and the electrification of its product line.

Want to learn how Mercedes-Benz leveraged Delta Sharing's flexibility to optimize their cross-cloud data mesh? Watch Alexander Summa's presentation from the Data + AI Summit:

Watch the presentation on YouTube

In this session, you'll learn more about the technical architecture, implementation challenges, and lessons learned from deploying this solution at scale.
