What is Structured Streaming?

Learn how to process real-time data using the same Spark APIs you use for batch processing

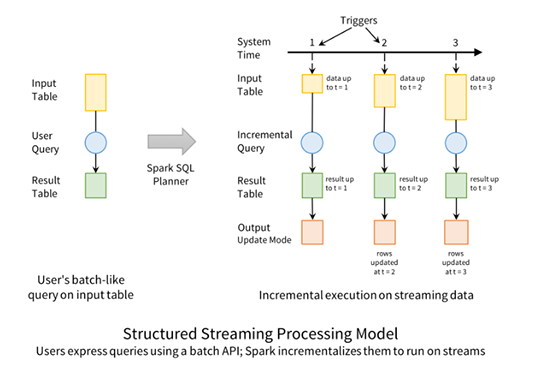

- Structured Streaming is a high-level stream processing API in Apache Spark that lets you work with real-time data using the same structured APIs you already use for batch.

- Converting existing batch jobs into streaming jobs requires minimal code changes, making it practical to reduce latency and process new data incrementally as it arrives.

- By reusing familiar Spark patterns, Structured Streaming helps teams get value from streaming systems quickly without needing to adopt a completely new stream processing framework.

Structured Streaming is a high-level API for stream processing that became production-ready in Spark 2.2. It lets you take the same operations you perform in batch mode with Spark’s structured APIs and run them on streaming data, which can reduce latency and enable incremental processing. Its biggest advantage is that you can get value out of streaming systems quickly, with virtually no code changes. It is also easy to reason about: you can prototype your logic as a batch job and then convert it into a streaming job. Under the hood, this works because Structured Streaming processes the data incrementally as it arrives, rather than recomputing the result over the full dataset each time.
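The idea of incremental processing can be illustrated without Spark at all. The following plain-Python sketch is a conceptual model only, not how Spark is implemented: the names `batch_count` and `IncrementalCounter` are hypothetical. It keeps a running aggregate as state and folds in each new micro-batch, then checks that after every micro-batch the incremental result matches a batch recomputation over all data seen so far. Structured Streaming maintains this kind of intermediate state for you, which is what allows a batch query to run unchanged as a streaming one.

```python
from collections import Counter
from typing import Iterable


def batch_count(events: Iterable[str]) -> Counter:
    """Batch style: recompute counts from scratch over all events."""
    return Counter(events)


class IncrementalCounter:
    """Streaming style (conceptual): keep running counts and fold in new data."""

    def __init__(self) -> None:
        self.state: Counter = Counter()

    def process(self, micro_batch: Iterable[str]) -> Counter:
        self.state.update(micro_batch)  # only the newly arrived rows are touched
        return Counter(self.state)      # snapshot of the full result so far


# Hypothetical arrivals: each inner list is one micro-batch of events.
arrivals = [["click", "view"], ["click"], ["view", "view", "purchase"]]
seen = []
counter = IncrementalCounter()
for chunk in arrivals:
    seen.extend(chunk)
    result = counter.process(chunk)
    # The incremental answer always equals a full batch recomputation.
    assert result == batch_count(seen)

print(dict(counter.state))  # → {'click': 2, 'view': 3, 'purchase': 1}
```

The design choice to keep state means each micro-batch costs work proportional to the new data, not to everything that has ever arrived.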
