How leading tech companies are killing the builder's tax with Lakebase

AI-native apps need operational data and analytics to live together — here’s how teams are removing the barrier.

by Amey Banarse and Madelyn Mullen

- Collapse data movement layers: Leading organizations are using Databricks Lakebase, eliminating ETL and reverse ETL by unifying operational and analytical data on a single governed platform.

- Enable real-time intelligence: Apps and AI systems operate directly on fresh, low-latency data without synchronization lag.

- Productionize AI systems: A unified architecture reduces operational overhead and enables continuous learning loops at scale.

The hidden cost killing your AI apps roadmap

Across leading tech organizations building AI-native apps, the primary constraint has shifted from model capability to the underlying data architecture, specifically data pipelines. AI systems need real-time, stateful context for agents and a low marginal cost for rapid, experimental development, and those requirements have exposed the critical flaw in traditional, separated data architectures.

Operational workloads typically run on cloud transactional databases (e.g., managed Postgres/MySQL engines), while analytics, ML pipelines, and feature engineering live in the lakehouse. Synchronization between these layers relies on a complex mesh of CDC pipelines, ETL/ELT jobs, and reverse ETL frameworks. This results in systemic inefficiencies:

- Data staleness: AI systems operate on lagging snapshots rather than real-time state

- Architectural fragmentation: Governance, lineage, and access control are duplicated across systems

- Operational overhead: Engineering effort shifts from product development to pipeline orchestration and failure management

We call this the builder’s tax: a structural inefficiency arising from decoupled operational and analytical stacks. For the teams inside tech companies who build the platforms, SaaS products, and developer tools everyone else runs on, this tax is especially damaging. Every new AI feature spawns another database, another pipeline, another quarter of delay.

Architectural shift: co-locating apps and data

To break this pattern, leading tech companies are redesigning the architecture rather than adopting yet another specialized tool. We see them running apps and AI directly on the same governed foundation as their analytics.

That foundation is Lakebase: a fully managed serverless Postgres engine natively integrated into the Databricks Platform.

- Apps read and write directly against lakehouse-managed data

- Governance is centralized through Unity Catalog across all workloads

- Operational data stays reliable through automated snapshots and built-in failure recovery

This establishes an Interoperable Application Foundation: a single, governed layer where apps, AI, and analytics share the same operational store.

Real-world scenario

Amey Banarse presented “From Transactions to Agents: PostgreSQL in Modern AI Applications” at PostgresConf 2026 [Slides]. The session includes a live walkthrough of a healthtech claims app built entirely on Lakebase + the AI DevKit, showing how lakehouse intelligence, operational insights, and a continuous learning loop run on a single Databricks foundation.

Organizations are typically entering this architecture through three primary vectors:

- Elimination of reverse ETL pipelines: Analytical datasets (gold-layer tables) are synchronized directly into Lakebase through native integration. This removes dependency on external tools and reduces pipeline fragility.

- AI-native apps and internal tools: Run Databricks Apps + Lakebase as a single, serverless stack with model serving, feature store and analytics. No extra infrastructure to provision.

- Agentic memory and state: Lakebase with pgvector for semantic search becomes the operational memory layer for agents built with Agent Bricks, alongside the data they reason over.
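As a rough illustration of the agentic memory pattern, the sketch below pairs a pgvector-style query with a pure-Python reference of the same ranking. It is a minimal sketch under stated assumptions: the `agent_memory` table, its columns, the three-dimensional embeddings, and the sample memories are all hypothetical, and the SQL is only shown as strings (it is not executed here). The `<=>` operator is pgvector's cosine-distance operator; the `%s` placeholders assume a Postgres driver that uses that parameter style.

```python
import math

# Hypothetical schema: table and column names are illustrative, not a Lakebase API.
CREATE_SQL = """
CREATE EXTENSION IF NOT EXISTS vector;
CREATE TABLE IF NOT EXISTS agent_memory (
    id        BIGSERIAL PRIMARY KEY,
    agent_id  TEXT NOT NULL,
    content   TEXT NOT NULL,
    embedding vector(3)  -- real embeddings have hundreds of dimensions
);
"""

# Nearest-neighbor recall: order by cosine distance (pgvector's <=> operator).
SEARCH_SQL = """
SELECT content
FROM agent_memory
WHERE agent_id = %s
ORDER BY embedding <=> %s::vector
LIMIT %s;
"""


def cosine_distance(a, b):
    """Return 1 - cosine similarity, the quantity <=> sorts by."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


def recall(memories, query_embedding, k=2):
    """In-memory reference for SEARCH_SQL: the k memories closest to the query."""
    ranked = sorted(
        memories,
        key=lambda m: cosine_distance(m["embedding"], query_embedding),
    )
    return [m["content"] for m in ranked[:k]]


# Made-up sample memories with toy embeddings.
MEMORIES = [
    {"content": "claim 1042 was denied for missing documentation",
     "embedding": [0.9, 0.1, 0.0]},
    {"content": "user prefers email notifications",
     "embedding": [0.0, 0.2, 0.9]},
    {"content": "claim 1042 was resubmitted with attachments",
     "embedding": [0.8, 0.3, 0.1]},
]

if __name__ == "__main__":
    for content in recall(MEMORIES, [1.0, 0.0, 0.0], k=2):
        print(content)
```

In a real deployment the agent would run `SEARCH_SQL` against the database, so memory lookups happen next to the operational data rather than in a separate vector store.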

What tech companies are actually seeing

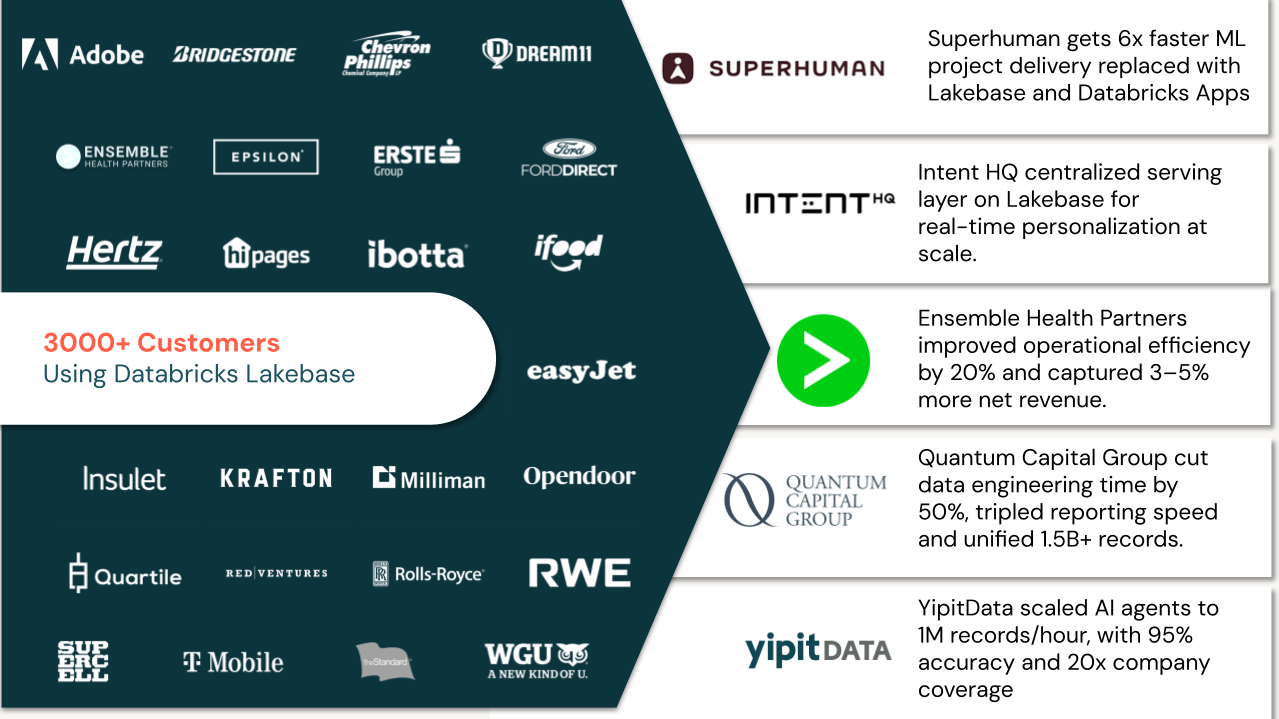

The outcomes below come from tech companies that have moved to a Lakebase architecture:

Superhuman replaced a brittle custom pipeline syncing data from Databricks into Redis and DynamoDB with Lakebase as the managed transactional layer for their ML infrastructure and go-to-market apps. Feature onboarding, which previously took three months, dropped to two weeks. New data integrations that once required a full sprint can now be completed in under an hour. On-call disruption fell 20x, from three days per shift to roughly two hours. “We stopped maintaining pipelines and started shipping features. Lakebase cut out the intermediary and let us move in weeks instead of months,” said Michael Kobelev, Software Engineer, ML Infrastructure at Superhuman.

YipitData scaled their AI agent pipeline to process 1M records per hour, achieving 92–95% tagging accuracy and 20x company coverage. By using Lakebase as the relational system of record inside Unity Catalog, their agents operate with a durable, governed state — no fragile external stores, no sync lag.

Quantum Capital Group was managing 1.5B+ records across six fragmented data sources. After consolidating on Lakebase, they eliminated 100+ redundant tables, cut data engineering time by 50%, and tripled reporting speed — teams now work from a single trusted dataset instead of fighting version sprawl.

Ensemble Health Partners unified 15+ fragmented SQL Server systems. With Lakebase as the transactional layer, they deployed AI-driven revenue-cycle workflows that improved operational efficiency by 20% and helped customers capture 3–5% more net revenue year over year.

Replit, whose platform powers millions of developers building and deploying software, uses Lakebase + the Databricks AI DevKit to help customers launch production code-generation AI in 3 weeks with 10x developer velocity, eliminating the gap between operational and analytical systems from day one.

IntentHQ, a consumer intelligence platform, centralized its serving layer on Lakebase to power real-time personalization at scale — giving AI models a low-latency operational store that stays in sync with its lakehouse data without custom pipelines.

The architecture pattern behind the outcomes

Despite differing use cases — from AI agents and personalization engines to healthcare workflows and developer platforms — these organizations are not succeeding through isolated optimizations. They are converging on a fundamentally different architectural model.

At its core, this architectural model eliminates the traditional separation between transactional systems, analytical platforms, and AI pipelines, replacing it with a shared, interoperable data foundation.

This pattern consistently includes three tightly integrated layers:

Lakehouse intelligence layer

A governed, scalable foundation where batch and streaming data, feature engineering, and AI/ML workloads operate. This layer provides the system of insight, enabling large-scale processing, model training, and analytics on unified data.

Operational data layer

A low-latency transactional interface (Lakebase) that serves as the system of execution for applications and agents. This layer enables real-time reads/writes, state management, and application logic directly on governed data — without replication or synchronization overhead.

Continuous learning loop

A closed feedback system where application interactions, agent outputs, and user signals are captured and reintegrated into model pipelines. This establishes a system of continuous improvement, allowing AI capabilities to evolve based on real-world use.
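One way to picture the capture-and-reintegrate step is the sketch below. It is not the Lakebase implementation: it uses Python's built-in `sqlite3` purely as a local stand-in for a Postgres operational store, and the `agent_feedback` table, its columns, and the function names are hypothetical. The idea it illustrates is that the app writes each interaction transactionally, and the training side later pulls only the rows it has not yet consumed.

```python
import sqlite3


def open_store():
    """Create an in-memory store. sqlite3 is a stand-in for Postgres here."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """
        CREATE TABLE agent_feedback (
            id                INTEGER PRIMARY KEY,
            prompt            TEXT NOT NULL,
            agent_output      TEXT NOT NULL,
            user_accepted     INTEGER NOT NULL,
            used_for_training INTEGER NOT NULL DEFAULT 0
        )
        """
    )
    return conn


def record_feedback(conn, prompt, agent_output, user_accepted):
    """Application side: capture one interaction and its user signal."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO agent_feedback (prompt, agent_output, user_accepted) "
            "VALUES (?, ?, ?)",
            (prompt, agent_output, int(user_accepted)),
        )


def next_training_batch(conn):
    """Pipeline side: fetch unconsumed feedback and mark it as used."""
    with conn:
        rows = conn.execute(
            "SELECT id, prompt, agent_output, user_accepted "
            "FROM agent_feedback WHERE used_for_training = 0 ORDER BY id"
        ).fetchall()
        conn.executemany(
            "UPDATE agent_feedback SET used_for_training = 1 WHERE id = ?",
            [(row[0],) for row in rows],
        )
    return [(prompt, output, bool(ok)) for _, prompt, output, ok in rows]


if __name__ == "__main__":
    store = open_store()
    record_feedback(store, "summarize claim 1042", "Claim denied: missing docs", True)
    record_feedback(store, "tag company X", "Retail", False)
    print(next_training_batch(store))
```

Because the feedback rows live in the same store the application already writes to, the model pipeline in this sketch needs no separate export job to pick them up.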

When these three layers share a foundation, AI systems transition from isolated workloads to continuously improving production systems.

Eliminating the builder’s tax

The builder’s tax isn’t inevitable. It’s a consequence of building AI on top of infrastructure that was designed for a different era — when databases were monolithic, apps were stateless, and intelligence was a separate project.

Lakebase changes the math! Apps run where the data lives. Agents have the context they need. And the engineering time your team spent on pipelines goes back to shipping.

Watch Amey Banarse’s PostgresConf 2026 session, “From Transactions to Agents: PostgreSQL in Modern AI Applications” [Slides], to see a full AI-native app built on Lakebase in action.

Databricks Lakebase is serverless Postgres built for agents and apps. Learn more at databricks.com/product/lakebase.
