Rethinking SQL ETL for modern data platforms

Reduce cost and complexity by unifying fragmented SQL pipelines on a single platform

by Matt Jones and Shanelle Roman

- Fragmented SQL ETL drives hidden cost, brittle pipelines, and slow incident resolution

- Running ETL across warehouses, orchestrators, and tools creates operational drag that scales with every pipeline

- A unified platform for all SQL ETL removes coordination overhead and lets teams ship faster on one governed system

SQL is the foundation of modern data work. It’s how analytics engineers define transformations, how data warehouse engineers manage pipelines, and how analysts explore and refine data.

But while SQL itself is standardized, the systems used to run SQL ETL are anything but.

In most organizations, SQL pipelines are spread across a combination of tools: a data warehouse for execution, a transformation framework for modeling, an orchestrator for scheduling, and separate systems for monitoring, lineage, and data quality. Each layer addresses a specific need, but together they create a fragmented environment that is difficult to operate and increasingly difficult to scale.

As data teams scale, this fragmentation starts to show up in day-to-day operations. Pipelines fail across multiple systems, dependencies are difficult to trace, and resolving issues often requires jumping between tools that were never designed to work together. At the same time, expectations increase. Teams are asked to deliver fresher data, support more use cases, and move faster, without adding operational overhead.

This is where many data platform strategies begin to break down. Even as organizations invest in modern infrastructure, SQL ETL often remains distributed across multiple systems, carrying forward the same complexity and constraints.

The challenge isn’t SQL itself - it’s how SQL ETL is implemented.

If SQL ETL were designed from the ground up for how teams actually work today, it would look very different. In practice, it would mean:

- A single platform for ETL

- Support for every SQL practitioner

- Open, future-ready pipelines

Together, these principles define a simpler and more durable approach to SQL ETL - one that reduces fragmentation today while supporting how data workloads evolve over time.

Run and operate SQL ETL on one platform

The challenge in SQL ETL isn’t writing transformations - it’s operating pipelines as they span multiple systems.

In practice, this means coordinating execution in the data warehouse, orchestration in a separate system, and observability layered on afterward. Keeping pipelines running requires stitching these pieces together - tracking dependencies, diagnosing failures, and managing retries across tools that don’t share context.

As pipelines grow in number and importance, this coordination becomes a significant operational burden.

A unified platform simplifies this model by bringing these capabilities together. When execution, orchestration, observability, and governance are part of the same system, pipelines become easier to manage by design. Dependencies are tracked automatically, and issues can be identified and resolved more quickly because the relevant context is available in one place.
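
To make "dependencies are tracked automatically" concrete, here is a minimal Python sketch. It assumes each pipeline step declares the tables it reads and writes. The step and table names (`ingest_orders`, `raw_orders`, and so on) are hypothetical, and this is not how Databricks implements dependency tracking. It only shows the general idea: when one system holds the dependency graph, it can derive both the run order and the blast radius of a failure from that graph, here using the standard library's `graphlib`.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline steps: step name -> (tables read, tables written).
STEPS = {
    "ingest_orders": (set(), {"raw_orders"}),
    "clean_orders": ({"raw_orders"}, {"clean_orders"}),
    "ingest_customers": (set(), {"raw_customers"}),
    "daily_revenue": ({"clean_orders", "raw_customers"}, {"daily_revenue"}),
}


def build_graph(steps):
    """Map each step to the set of steps it depends on.

    A step depends on another step if it reads a table the other step writes.
    """
    producer = {}
    for name, (_, writes) in steps.items():
        for table in writes:
            producer[table] = name
    return {
        name: {producer[t] for t in reads if t in producer}
        for name, (reads, _) in steps.items()
    }


def run_order(steps):
    """Return one valid execution order: every step runs after its inputs."""
    return list(TopologicalSorter(build_graph(steps)).static_order())


def downstream_of(steps, failed):
    """Return every step that transitively depends on the failed step."""
    graph = build_graph(steps)
    affected = set()
    frontier = {failed}
    while frontier:
        # Steps that consume anything in the current frontier and are not yet marked.
        frontier = {s for s, deps in graph.items() if deps & frontier and s not in affected}
        affected |= frontier
    return affected
```

The run order is valid but not unique, since independent steps such as `ingest_orders` and `ingest_customers` may run in either order. Because failure impact comes from the same graph, `downstream_of(STEPS, "clean_orders")` returns `{"daily_revenue"}`, which identifies the step to hold back without consulting a second tool.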

On Databricks, SQL ETL is defined and executed within a single platform. Pipelines run with built-in orchestration, while lineage and observability are captured automatically across each stage. Data quality checks and governance controls are integrated directly into pipeline execution rather than managed through separate tools.
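
One way to picture quality checks that run inside pipeline execution, rather than in a separate tool, is a set of named rules evaluated on each batch before it is written. Each rule has an action on violation. The sketch below is hypothetical: the `Expectation` class, the `warn`/`drop`/`fail` actions, and the sample fields are illustrative assumptions, not the Databricks API. Declarative pipelines on Databricks do expose a broadly similar idea through expectations.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Expectation:
    """A named row-level rule and what to do when a row violates it (hypothetical)."""
    name: str
    check: Callable[[dict], bool]
    action: str  # "warn" keeps the row, "drop" removes it, "fail" stops the batch


class ExpectationFailed(Exception):
    """Raised when a row violates a rule whose action is "fail"."""


def apply_expectations(rows, expectations):
    """Return (kept_rows, violation_counts) for one batch of rows."""
    counts = {e.name: 0 for e in expectations}
    kept = []
    for row in rows:
        keep = True
        for e in expectations:
            if e.check(row):
                continue
            counts[e.name] += 1
            if e.action == "fail":
                raise ExpectationFailed(f"{e.name} violated by {row}")
            if e.action == "drop":
                keep = False
        if keep:
            kept.append(row)
    return kept, counts


# Hypothetical rules for an orders table.
EXPECTATIONS = [
    Expectation("valid_id", lambda r: r.get("order_id") is not None, "fail"),
    Expectation("positive_amount", lambda r: r.get("amount", 0) > 0, "drop"),
    Expectation("has_country", lambda r: bool(r.get("country")), "warn"),
]
```

Because the violation counts are produced by the same step that writes the data, they can be recorded with the run itself instead of being reconciled later from a separate monitoring system.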

This approach is further strengthened by serverless infrastructure and AI-driven optimization. Performance tuning, resource management, and scaling are handled automatically, allowing teams to focus on delivering reliable data rather than operating infrastructure.

After transitioning our Databricks pipelines to serverless compute, HP realized cloud savings of over 32% and decreased the combined runtime of jobs by 36%. The effortless infrastructure management provided by serverless made this decision an obvious and strategic choice. — Luis Alonso, Head of Data Strategy & Engineering at HP Marketing

The result is a more streamlined and dependable foundation for SQL ETL - one that reduces operational overhead while improving performance and reliability at scale.

Support how teams actually build SQL pipelines

SQL ETL is fragmented not just because of tools, but because teams don’t all build pipelines the same way.

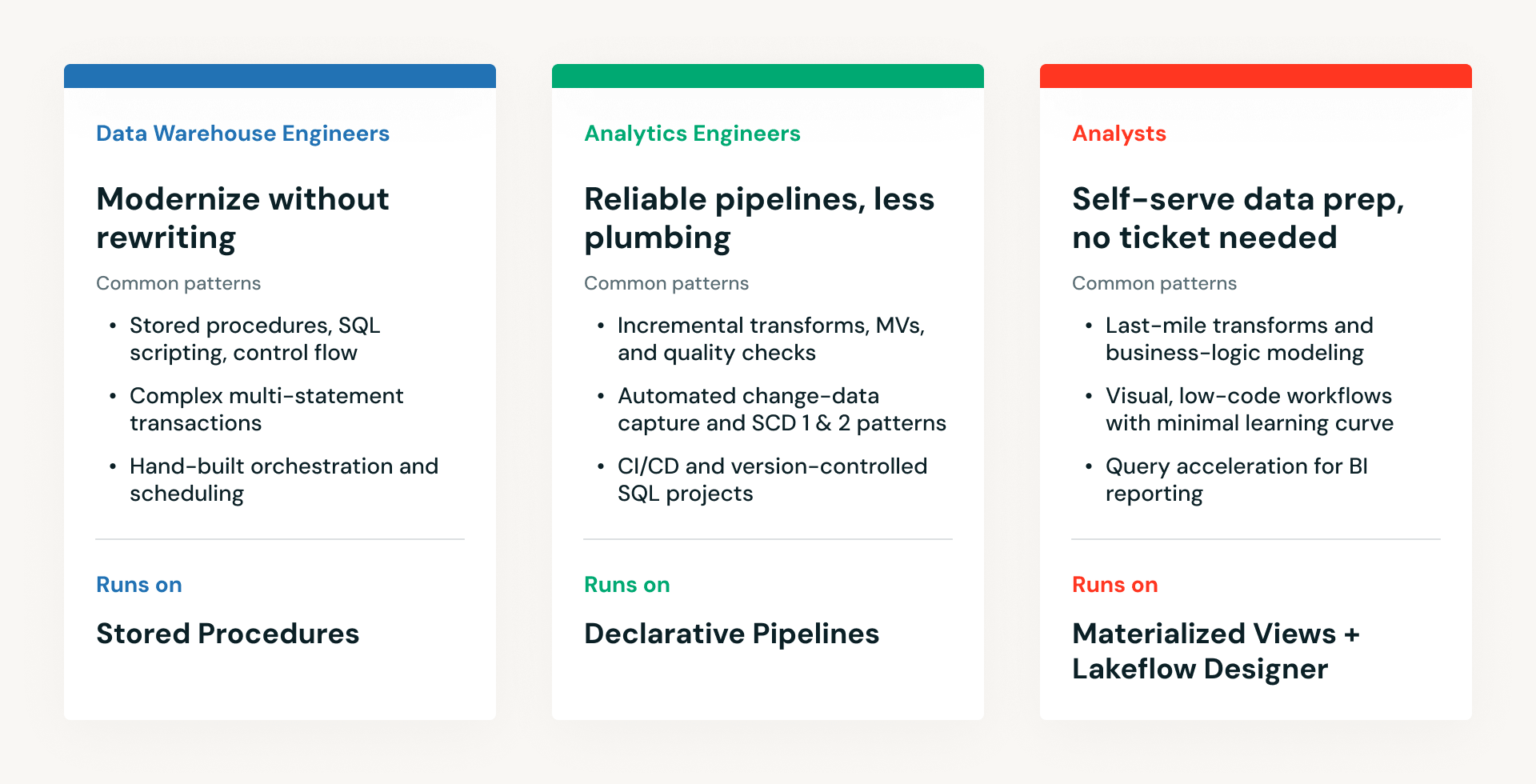

Analytics engineers - who focus on defining business logic in SQL - often want a way to build pipelines without managing the underlying infrastructure, with testing, version control, and dependencies handled automatically. Data warehouse engineers tend to rely on SQL scripts and stored procedures, often within tightly controlled execution environments. Analysts may create transformations directly within no-code tools or lightweight SQL interfaces.

Many platforms implicitly favor one of these approaches. As organizations grow, they often introduce additional systems to support other personas, resulting in parallel environments that are difficult to standardize and maintain.

A more effective approach is to standardize the platform rather than the interface.

Databricks supports a range of SQL ETL workflows within the same environment. Teams can:

- Run existing dbt workflows directly on the platform

- Lift and shift warehouse-style SQL into scripts and stored procedures

- Accelerate BI workloads with Materialized Views in Databricks SQL

- Define declarative pipelines that simplify production workflows

- Use no-code tools for business analysts, built on the same platform

Although these approaches differ in how pipelines are authored, they share the same execution engine, governance model, and observability framework.

This consistency allows organizations to support multiple development styles without introducing fragmentation in how pipelines are run. Teams can work at the level of abstraction that fits their needs, while still benefiting from shared lineage, monitoring, and operational controls.

It also ensures that existing warehouse-style SQL scripts and newer approaches can coexist on the same foundation. Teams do not need to choose between maintaining what they have and adopting new patterns - they can do both within a single system.

Each of these personas has a dedicated authoring experience.

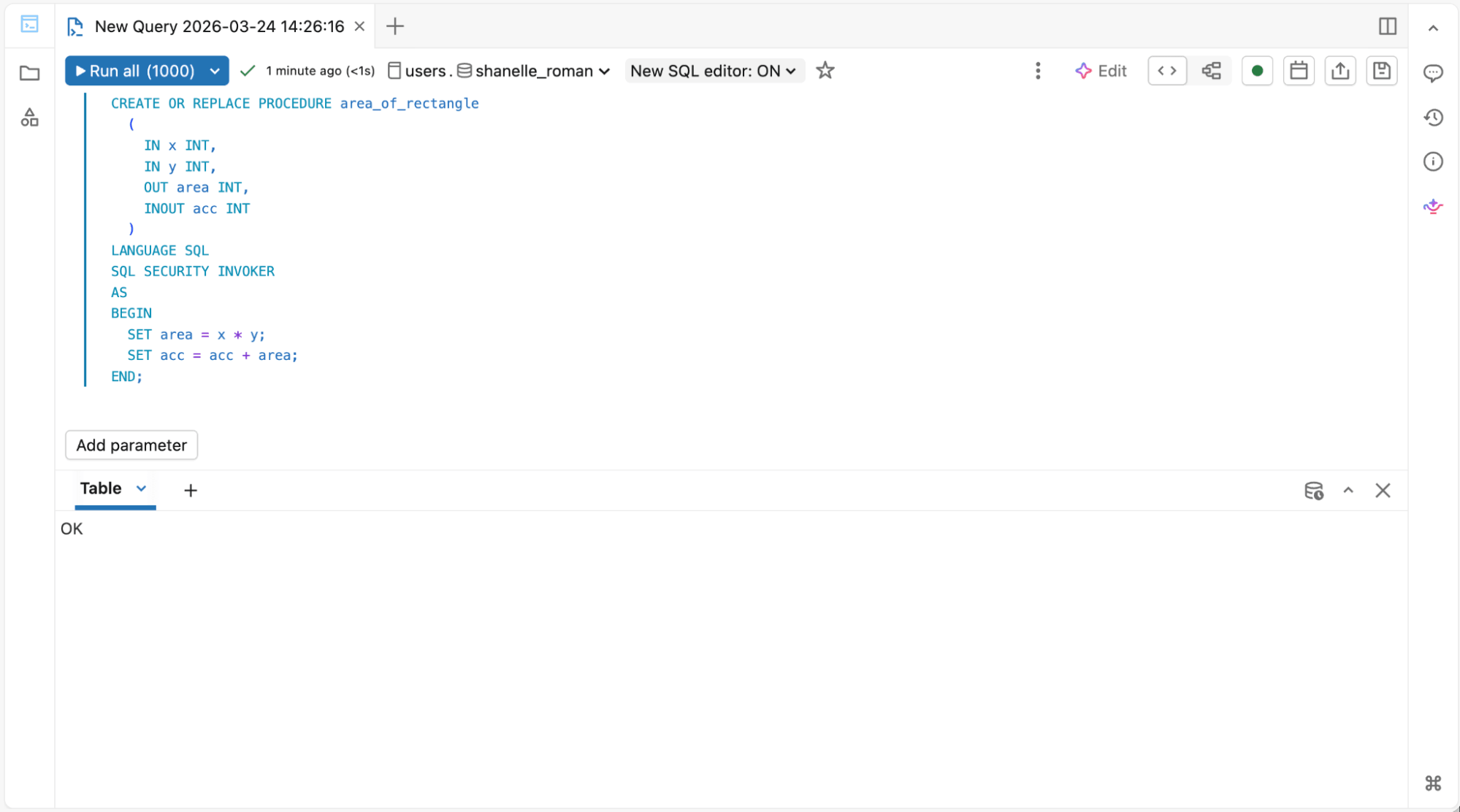

1. For data warehouse engineers running SQL scripts and stored procedures:

SQL Editor for Stored Procedures & Materialized Views

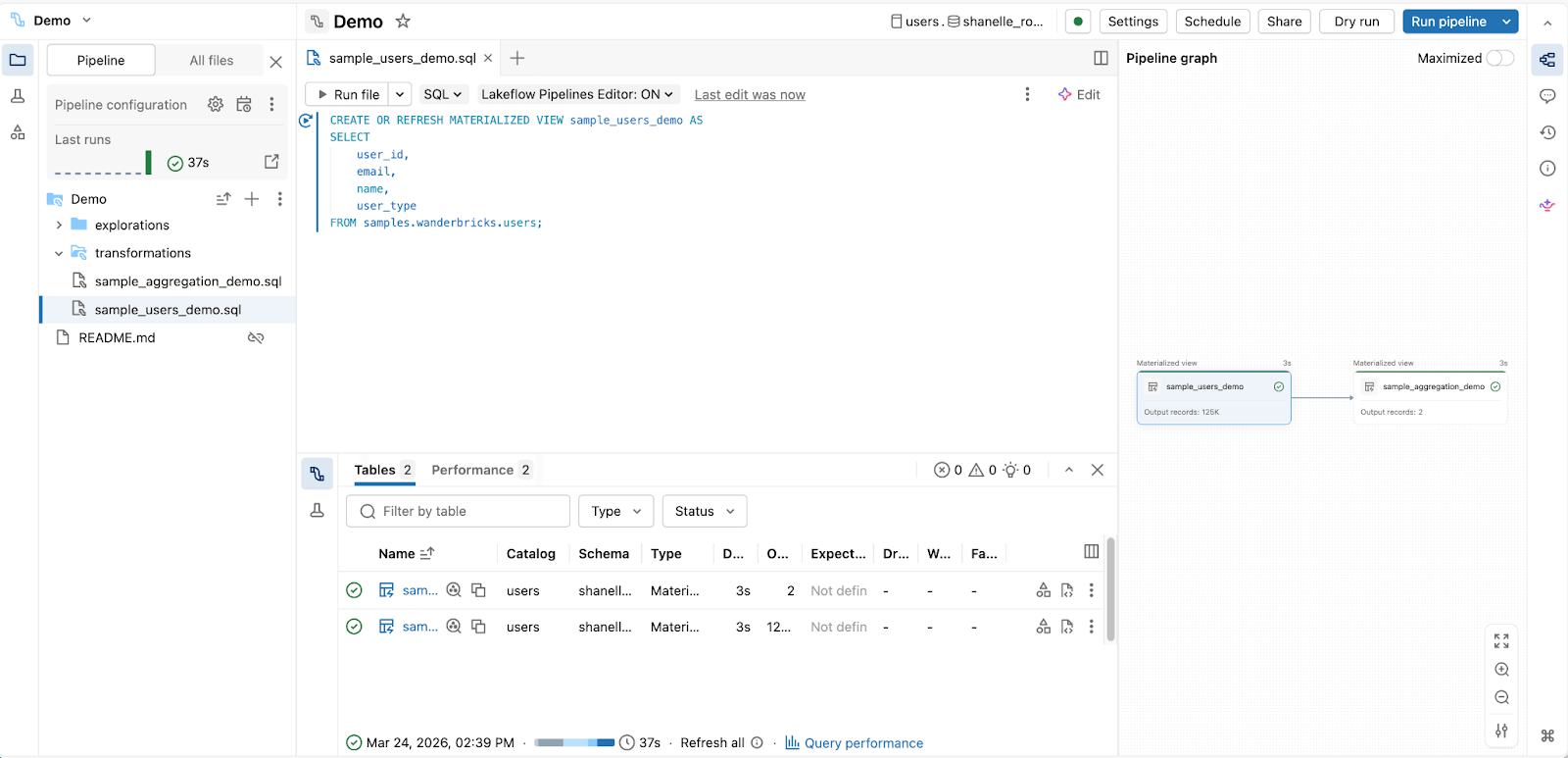

2. For analytics engineers building production pipelines with SQL:

Spark Declarative Pipelines Editor

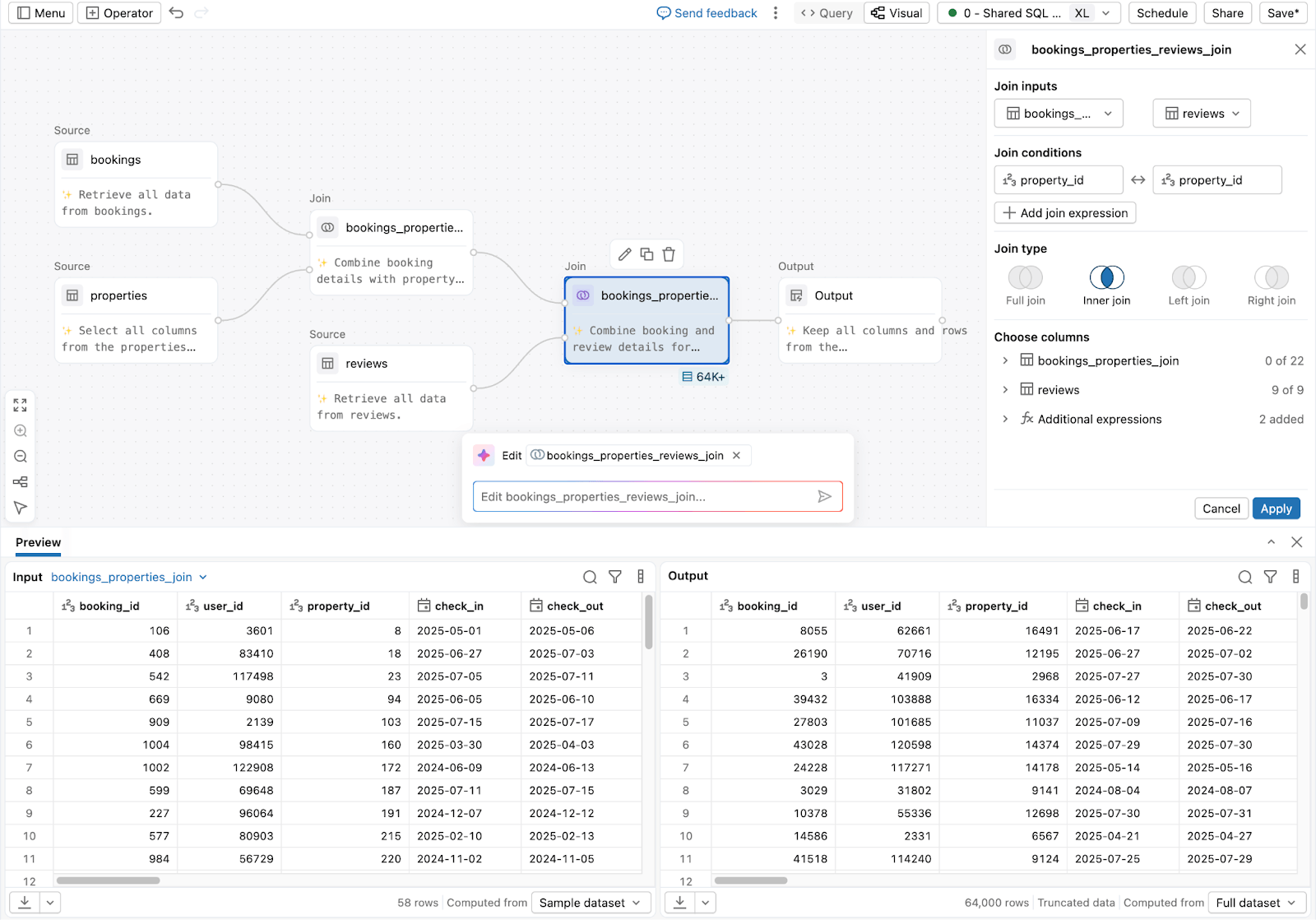

3. For analysts and business users preparing data without code:

Lakeflow Designer

The result is a more cohesive environment for SQL ETL, where collaboration improves and operational complexity does not increase with scale.

Build SQL pipelines that evolve with your workloads

As new data sources, real-time use cases, and AI workloads emerge, teams are often forced to introduce additional systems or rewrite existing pipelines - adding complexity and cost over time.

Many SQL ETL solutions create this pressure through proprietary formats, tightly coupled execution models, or assumptions about how data will be processed. These constraints may not be immediately apparent, but they tend to surface as organizations expand into new workloads, require fresher data, or support a broader set of use cases.

A future-ready approach to SQL ETL prioritizes openness and flexibility from the outset.

Databricks builds SQL ETL on open table formats and ANSI SQL, helping ensure that pipelines remain portable and interoperable across systems. This reduces the risk of lock-in and allows organizations to retain control over their data and logic as their architecture evolves.

At the same time, Databricks provides a unified SQL model that supports both batch and real-time analytics. Rather than requiring separate systems for different workloads, the same SQL-based approach can be applied across a wide range of use cases.

This flexibility allows pipelines to evolve alongside the organization. Teams can continue to run existing SQL workflows while adopting more advanced patterns - such as incremental processing or declarative pipelines - when they are needed.
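
Incremental processing can be illustrated with a high-water mark. Instead of recomputing an aggregate from every row on each run, a refresh folds only rows newer than the last one it processed into the stored result. The sketch below is a simplified, hypothetical model: in-memory state, an append-only source, and an `ingested_at` value that strictly increases for new rows. It is not how Databricks implements incremental refresh, and real systems must also handle late, updated, and deleted rows.

```python
from collections import defaultdict


class IncrementalRevenue:
    """Maintain revenue per day incrementally using a high-water mark.

    Assumes an append-only source where `ingested_at` strictly increases
    for newly arriving rows (hypothetical simplifications).
    """

    def __init__(self):
        self.watermark = None              # largest ingested_at folded in so far
        self.totals = defaultdict(float)   # day -> revenue

    def refresh(self, source_rows):
        """Fold in only rows newer than the watermark; return how many were added."""
        new_rows = [
            r for r in source_rows
            if self.watermark is None or r["ingested_at"] > self.watermark
        ]
        for r in new_rows:
            self.totals[r["day"]] += r["amount"]
        if new_rows:
            self.watermark = max(r["ingested_at"] for r in new_rows)
        return len(new_rows)


def full_recompute(source_rows):
    """Reference result: aggregate every row from scratch."""
    totals = defaultdict(float)
    for r in source_rows:
        totals[r["day"]] += r["amount"]
    return dict(totals)
```

For simplicity the watermark filter here is applied in memory, so every refresh still scans the source list and the saving is only in the aggregation work. A real system would push the watermark into the source read so that only new rows are scanned. Comparing `dict(mv.totals)` with `full_recompute(...)` is a cheap way to check that the incremental result stays correct.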

The conversion to Materialized Views has resulted in a drastic improvement in query performance, with the execution time decreasing from 8 minutes to just 3 seconds. This enables our team to work more efficiently and make quicker decisions based on the insights gained from the data. Plus, the added cost savings have really helped. — Karthik Venkatesan, Security Software Engineering Sr. Manager, Adobe

By avoiding rigid architectural constraints, this approach provides a stable foundation that can support both current requirements and future demands without requiring disruptive changes.

Why SQL ETL should shape your data platform strategy

Data platform discussions often focus on where data is stored and how queries are executed. In practice, however, the effectiveness of a platform depends just as much on how data pipelines are built and maintained, and whether they are defined in open, interoperable ways that avoid long-term lock-in.

If SQL ETL remains fragmented across multiple systems, organizations are likely to carry forward the same operational complexity and inefficiencies, even after adopting a new platform. Over time, this limits the value of the platform and makes it more difficult to scale data operations.

A more effective approach is to evaluate how well a platform supports SQL ETL across its full lifecycle - from development and execution to monitoring and governance. This includes the ability to support different working styles, reduce operational overhead, and adapt to evolving requirements without introducing additional systems.

Databricks addresses these needs by combining SQL execution, pipeline management, governance, and optimization within a single platform. This unified approach allows teams to build and operate SQL pipelines more efficiently while maintaining the flexibility to support a wide range of workloads.

Conclusion

SQL will continue to play a central role in how organizations work with data.

As a result, the way SQL ETL is implemented has a direct impact on the effectiveness of the overall data platform. Fragmented approaches introduce complexity and slow teams down, while unified approaches simplify operations and improve scalability.

For organizations evaluating how to evolve their data platforms, SQL ETL is a core consideration. Databricks provides a model for unified, future-proof SQL ETL that brings together execution, pipeline management, and governance within a single platform, while remaining open and adaptable as requirements evolve.

In practice, most organizations aren’t starting from scratch. SQL ETL modernization often stalls because the cost and risk of rewriting production pipelines are too high. Rather than forcing a disruptive rebuild, a more effective approach is to evolve incrementally - running existing pipelines first, consolidating systems over time, and modernizing step by step.

This is how teams can reduce fragmentation today while building toward a more unified, future-proof data platform over time. We’ll dive into this approach in more detail in a future post. In the meantime, you can read more about building, running, and scaling SQL pipelines on a unified lakehouse platform in this ebook, A Guide to Building ETL Pipelines with SQL.
