Powering KPMG UK Audit's AI future with Databricks

by Mark Wallington and Greta Nasai

KPMG UK has been using data and analytics at scale across its Audit portfolio for many years, and is a leader in the market for its use of technology in Audit. Building on this strong foundation, KPMG UK is now evolving its data platform with Databricks technologies to stay ahead in the AI era and unlock a new generation of intelligent, insight-driven Audit.

From strong D&A to trusted, AI‑first delivery

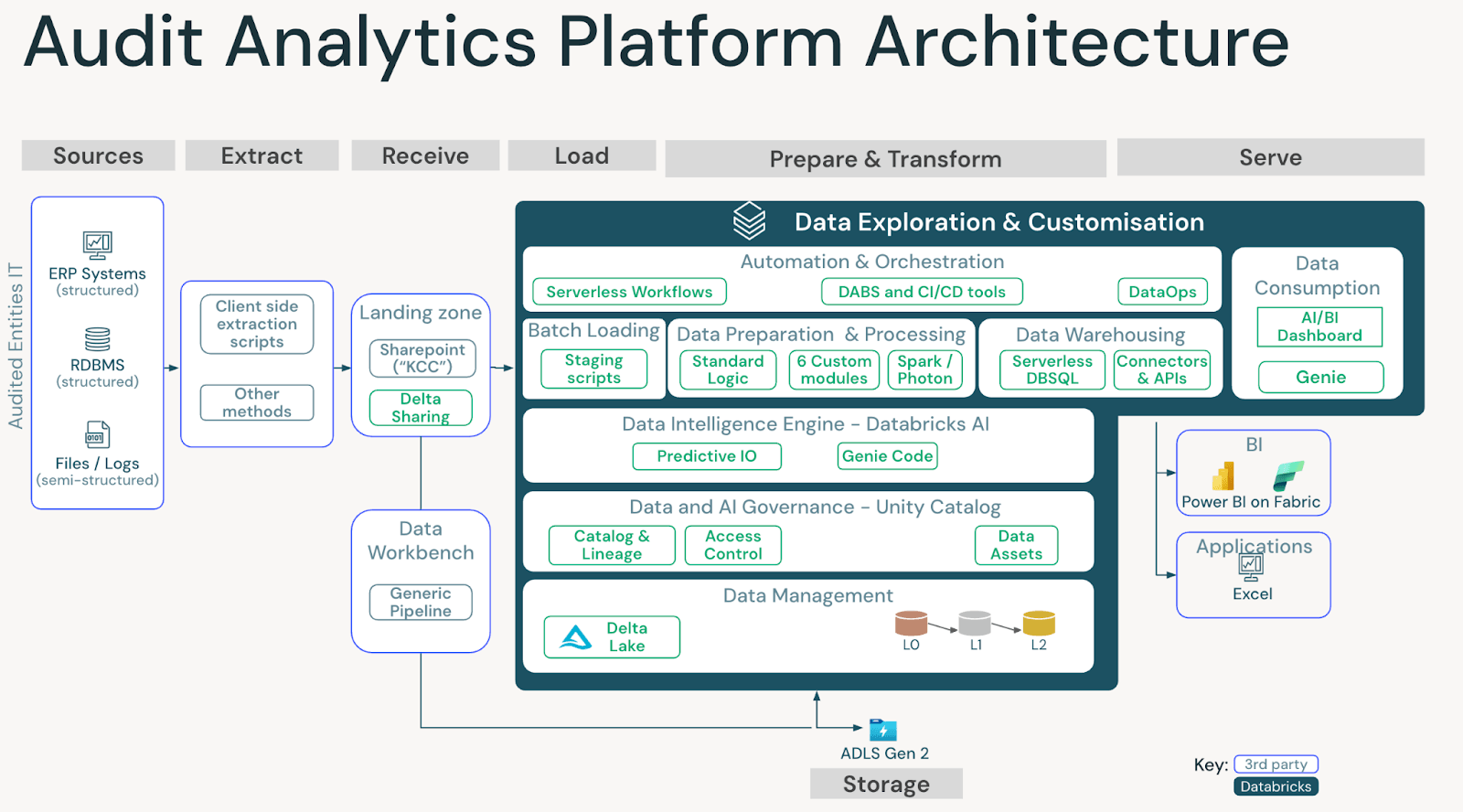

KPMG UK’s Audit business runs complex, high-volume analytics when auditing entities globally, supported by mature data and analytics processes. The latest wave of transformation focuses on evolving this proven, trusted data foundation into one that is AI-enabled, while preserving the rigour, governance and professional standards that Audit requires. By converging onto a unified, cloud-native Lakehouse architecture, Audit teams gain a governed platform that brings structured data, advanced analytics and AI together in one place. This foundation retains the robustness and trust expected of Audit while enabling faster experimentation, richer insights and more dynamic audit delivery.

Databricks SQL as a core engine

Part of this evolution is the migration of core SQL workloads from traditional SQL Server environments to Databricks SQL. This shift brings critical Audit data directly onto the Lakehouse, where, as appropriate, it can support everything from classic reporting to advanced AI and machine learning use cases on the same platform.

Databricks SQL delivers horizontal scaling, real‑time and streaming capabilities, and native support for AI and machine learning workloads, all underpinned by Delta Lake. This makes it far easier to handle seasonal peaks, widely varying data volumes and increasingly complex analytical demands. It also integrates natively with low‑code and no‑code tooling such as Lakeflow Designer and AI‑enabled BI products, opening the door for a broader range of users to participate in analytics.

AI‑accelerated migration of SQL

To keep the programme fast and focused, KPMG UK used AI to accelerate the migration and modernisation of its SQL estate. Lakebridge was used early in the process to scan the existing SQL code and estimate its complexity, giving a clear, data‑driven view of effort, risk and prioritisation for the move onto Databricks SQL.
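Lakebridge's own analysis logic is not described in this post, so the following is only an illustration of the general idea of scoring a legacy SQL estate for migration effort. It assumes a hypothetical weighting in which T‑SQL constructs that usually need manual rework (cursors, dynamic SQL, temp tables, `WHILE` loops) count for more than routine ones; the weights, thresholds and the `score_script` and `prioritise` helpers are invented for this sketch and are not Lakebridge's rules.

```python
import re
from dataclasses import dataclass

# Hypothetical weights for T-SQL constructs that typically need manual rework
# on Databricks SQL. Illustrative only; these are NOT Lakebridge's actual rules.
WEIGHTS = {
    r"\bCURSOR\b": 5,            # row-by-row cursor loops
    r"\bEXEC(?:UTE)?\s*\(": 4,   # dynamic SQL, e.g. EXEC(@sql)
    r"\bWHILE\b": 3,             # procedural loops
    r"#\w+": 3,                  # temp tables such as #staging
    r"\bMERGE\b": 2,             # MERGE statements worth reviewing
    r"\bOVER\s*\(": 1,           # window functions
}


@dataclass
class ScriptScore:
    name: str
    lines: int   # non-blank lines in the script
    score: int   # weighted count of the constructs above

    @property
    def band(self) -> str:
        # Hypothetical thresholds for grouping scripts into effort bands.
        if self.score >= 10:
            return "high"
        if self.score >= 4:
            return "medium"
        return "low"


def score_script(name: str, sql: str) -> ScriptScore:
    """Weighted count of risky constructs, matched case-insensitively."""
    score = sum(
        weight * len(re.findall(pattern, sql, flags=re.IGNORECASE))
        for pattern, weight in WEIGHTS.items()
    )
    lines = sum(1 for line in sql.splitlines() if line.strip())
    return ScriptScore(name, lines, score)


def prioritise(scripts: dict[str, str]) -> list[ScriptScore]:
    """Order scripts from least to most estimated effort."""
    scores = [score_script(name, sql) for name, sql in scripts.items()]
    return sorted(scores, key=lambda s: (s.score, s.lines))


if __name__ == "__main__":
    scripts = {
        "simple.sql": "SELECT TOP 10 * FROM dbo.Journal;",
        "proc.sql": (
            "DECLARE c CURSOR FOR SELECT id FROM #staging;\n"
            "WHILE @@FETCH_STATUS = 0 BEGIN EXEC(@sql) END"
        ),
    }
    for s in prioritise(scripts):
        print(s.name, s.score, s.band)
```

Sorting by estimated effort is one way to turn such a scan into a migration order: low-effort scripts can move early, while high-scoring procedures are flagged for closer engineering attention.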

From there, Databricks‑hosted AI capabilities, such as the Claude Sonnet model and Genie Code, were integrated into the developer workflow. These tools helped automate and streamline tasks such as converting T‑SQL to Databricks SQL. Rather than a straight lift and shift, the conversion also removed technical debt by improving joins and window functions, and surfaced performance optimisations that take advantage of Delta Lake features.

They also helped explain and decompose complex stored procedures, breaking them into modular queries and notebooks better suited to a Lakehouse architecture. This approach was embedded in a controlled developer workflow in which engineers reviewed and validated the outputs to ensure that accuracy, consistency and audit quality standards were maintained. The result was a reduction in refactoring time of around 60%, enabling the team to modernise over 400 scripts and stored procedures in roughly three months without compromising quality or control.
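The T‑SQL to Databricks SQL conversion described above was AI‑assisted and engineer‑reviewed; the sketch below is not that process. It is a deliberately simple, rule‑based illustration of the kind of dialect mapping involved, assuming a handful of well‑known equivalences: two‑argument `ISNULL` to `COALESCE`, `GETDATE()` to `current_timestamp()`, `[bracketed]` identifiers to backticks, and `SELECT TOP n` to `LIMIT n`. The `convert` helper and the rule list are hypothetical.

```python
import re

# A few well-known T-SQL -> Databricks SQL rewrites, for illustration only.
# This is a rule-based stand-in, not the AI-assisted process described above.
# The regexes do not parse SQL, so they ignore string literals, comments and
# nested statements.
RULES = [
    # T-SQL ISNULL(a, b) -> COALESCE(a, b). In Databricks SQL, isnull(expr)
    # is a one-argument boolean test, so a plain rename would be wrong.
    (re.compile(r"\bISNULL\s*\(", re.IGNORECASE), "COALESCE("),
    # GETDATE() -> current_timestamp()
    (re.compile(r"\bGETDATE\s*\(\s*\)", re.IGNORECASE), "current_timestamp()"),
    # [Bracketed Name] identifiers -> `backtick` identifiers
    (re.compile(r"\[([^\]]+)\]"), r"`\1`"),
]

# SELECT TOP n <rest> -> SELECT <rest> LIMIT n (single, simple statements only)
TOP_N = re.compile(
    r"^\s*SELECT\s+TOP\s+(\d+)\s+(.*?)\s*;?\s*$", re.IGNORECASE | re.DOTALL
)


def convert(tsql: str) -> str:
    """Apply the illustrative rewrite rules to one simple T-SQL statement."""
    sql = tsql
    for pattern, replacement in RULES:
        sql = pattern.sub(replacement, sql)
    match = TOP_N.match(sql)
    if match:
        sql = f"SELECT {match.group(2)} LIMIT {match.group(1)}"
    return sql


if __name__ == "__main__":
    print(convert(
        "SELECT TOP 5 [Account Id], ISNULL(amount, 0) "
        "FROM dbo.Journal WHERE posted < GETDATE();"
    ))
```

A regex pass like this breaks down quickly on string literals, comments, nested queries and procedural logic, which is one reason the workflow above pairs AI assistance with review and validation by engineers.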

Reimagining the Audit process with Genie

Built on the Databricks SQL foundation, Genie can redefine how analysts and auditors explore and verify data. Users ask questions in natural language and Genie generates fully traceable, version‑controlled SQL, maintaining the transparency and data integrity required for regulatory compliance.

The Genie Agent takes this a step further, driving deeper, research‑grade analysis while maintaining complete transparency and control. Every result is linked to approved, auditable data sources, giving teams the confidence to move faster without sacrificing accuracy or assurance. Together, Genie and the Genie Agent are setting a new standard for intelligent, trusted audit analytics.
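Genie's internals are not described in this post, so the following is only a sketch of what "traceable" and "linked to approved, auditable data sources" can mean in practice. It assumes a hypothetical allowlist of governed tables: each generated query is checked against the approved sources and stored with its question, a content hash and a timestamp. The `record_generated_sql` helper, the `QueryRecord` type and the table names are invented for illustration; this is not a Genie API.

```python
import hashlib
import json
import re
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical allowlist of approved, governed tables (catalog.schema.table).
APPROVED_TABLES = {"audit.gl.journal_lines", "audit.gl.accounts"}

# Naive table extraction: the identifier after FROM/JOIN. A real check would use
# a SQL parser or the platform's lineage metadata (e.g. EXTRACT(YEAR FROM d)
# would fool this regex).
TABLE_REF = re.compile(r"\b(?:FROM|JOIN)\s+([\w.]+)", re.IGNORECASE)


@dataclass(frozen=True)
class QueryRecord:
    question: str            # natural-language question as asked
    sql: str                 # SQL generated for the question
    tables: tuple[str, ...]  # sources the SQL reads from
    sql_sha256: str          # content hash, so the exact SQL can be verified later
    created_at: str          # UTC timestamp (ISO 8601)


def record_generated_sql(question: str, sql: str) -> QueryRecord:
    """Reject SQL that reads unapproved sources; otherwise return an audit record."""
    tables = tuple(sorted({t.lower() for t in TABLE_REF.findall(sql)}))
    unapproved = [t for t in tables if t not in APPROVED_TABLES]
    if unapproved:
        raise ValueError(f"query reads unapproved sources: {unapproved}")
    digest = hashlib.sha256(sql.encode("utf-8")).hexdigest()
    created = datetime.now(timezone.utc).isoformat()
    return QueryRecord(question, sql, tables, digest, created)


if __name__ == "__main__":
    record = record_generated_sql(
        "What is the total journal value by account?",
        "SELECT a.name, SUM(j.amount) FROM audit.gl.journal_lines j "
        "JOIN audit.gl.accounts a ON j.account_id = a.id GROUP BY a.name",
    )
    # Persisting records as JSON gives a reviewable trail of question -> SQL -> sources.
    print(json.dumps(asdict(record), indent=2))
```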

Elastic performance with SQL Serverless

Audit workloads are inherently spiky, with pronounced peak seasons and unpredictable data volumes: anywhere from thousands to billions of rows of financial data, processed across thousands of KPMG UK audits delivered globally. Databricks SQL Serverless addresses this with fully managed, elastic compute that automatically scales up and down to match demand.

This means analysts and auditors can focus on analytics rather than on infrastructure planning and capacity management, and compute is not left running idle. The result is faster time‑to‑insight, lower operational overhead and a consistent experience for teams delivering high‑value analytics under time pressure.

Connected, governed sharing by design

Secure, governed connectivity with audited entities is a core requirement for KPMG UK’s audit data platform. Following a vision developed with the support of Databricks, Delta Sharing and broader data federation capabilities have become core components of the architecture.

These capabilities enable auditable, traceable, and timely data exchange while maintaining tight control over access and lineage. They also support collaboration across geographies and entities, helping auditors work from a consistent, trusted view of the data.
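As an illustration of the recipient side of Delta Sharing, shared tables can be read with the open-source `delta-sharing` Python connector, which addresses a table as `<profile-file>#<share>.<schema>.<table>`. This post does not say which clients KPMG UK or audited entities use, so the connector choice is an assumption, and the profile file, share, schema and table names below are hypothetical. The connector is imported lazily so the URL helper runs without it installed.

```python
def table_url(profile_file: str, share: str, schema: str, table: str) -> str:
    """Build a Delta Sharing table URL: <profile-file>#<share>.<schema>.<table>."""
    # Conservative check so a stray '.' or '#' cannot shift the name parts.
    for part in (share, schema, table):
        if not part or "." in part or "#" in part:
            raise ValueError(f"invalid name component: {part!r}")
    return f"{profile_file}#{share}.{schema}.{table}"


def list_shared_tables(profile_file: str) -> list[str]:
    """List every table the recipient's credentials (profile file) can see."""
    import delta_sharing  # open-source connector: pip install delta-sharing

    client = delta_sharing.SharingClient(profile_file)
    return [f"{t.share}.{t.schema}.{t.name}" for t in client.list_all_tables()]


def load_shared_table(profile_file: str, share: str, schema: str, table: str):
    """Read a shared table into a pandas DataFrame; recipients get read-only access."""
    import delta_sharing

    return delta_sharing.load_as_pandas(table_url(profile_file, share, schema, table))


if __name__ == "__main__":
    # Hypothetical names; a real profile file is issued by the data provider.
    print(table_url("config.share", "audit_share", "ledger", "trial_balance"))
```

Because access is granted per share and authenticated through the provider-issued profile, the provider controls exactly which tables a recipient can read, which is what makes this kind of exchange auditable.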

People at the centre of change

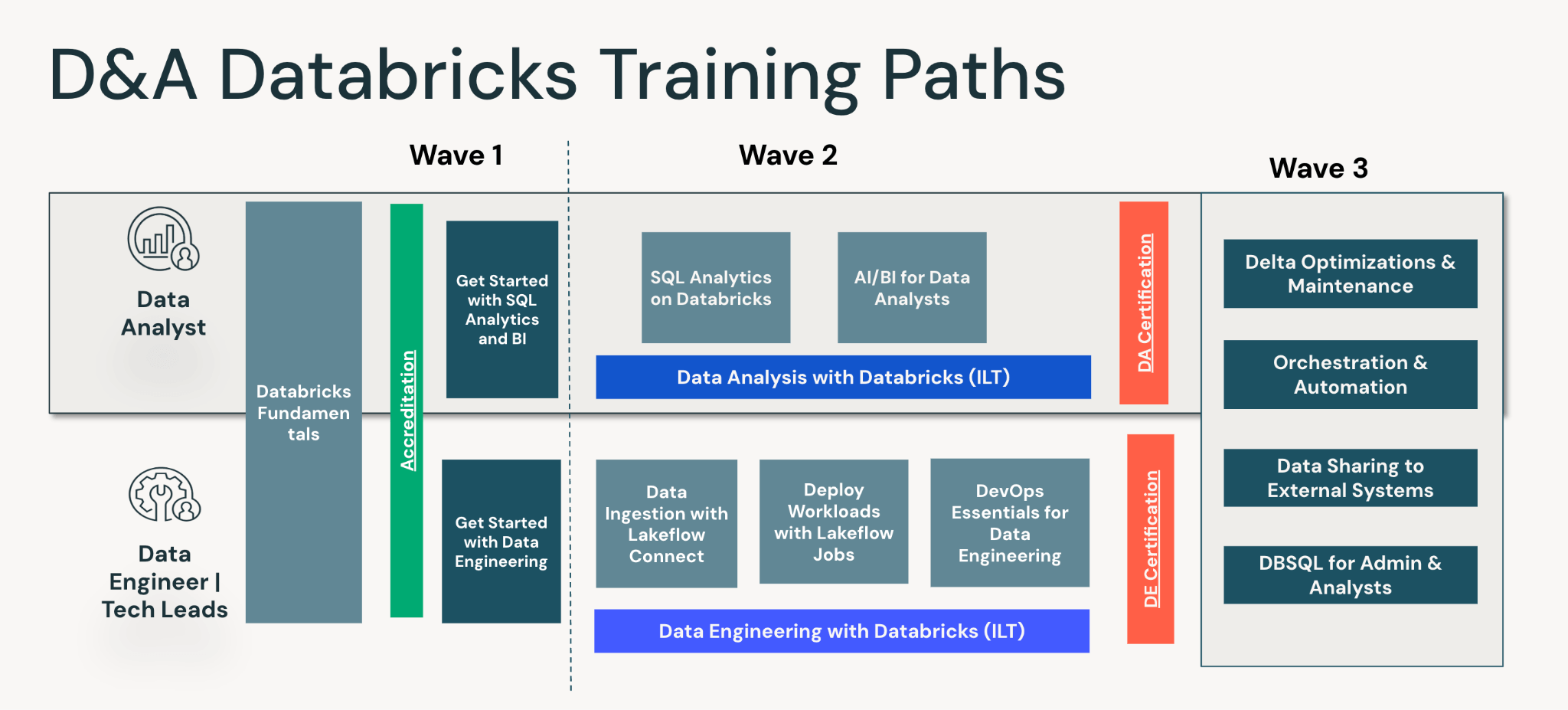

Technology on its own does not deliver transformation, so KPMG UK placed people and capability building at the centre of the programme. A coordinated enablement initiative upskilled audit data analysts and data engineers to deliver analytics alongside the core audit team on the Databricks‑based platform, supported by new operational processes and modern, agile ways of working.

Over 200 new users were upskilled and onboarded within three months, ensuring a smooth transition to the new platform and to new ways of performing analytics. Training covered data engineering best practices, Databricks usage, governance principles and more.

Outcomes and the road ahead

The result is a unified Audit data platform that consolidates governance, access control, and data lineage while simplifying operations through serverless, automated scaling. Spark execution improvements, streamlined SQL workloads, and tighter integration with downstream tools such as Power BI have all contributed to higher productivity and a smoother analyst experience.

Looking forward, KPMG UK is expanding Delta Sharing and data federation, scaling to more complex global workloads, and continuing to migrate and unify data, code and teams on the Lakehouse. Low‑code and no‑code capabilities such as Lakeflow Designer, together with Databricks Apps and Lakebase, will further democratise analytics, while AI‑readiness is being designed into every layer of the architecture. The Financial Report Analyser, an in‑house GenAI Audit tool, has already been developed and widely adopted by KPMG UK auditors. Meanwhile, an AI Factory is being built so that patterns and artefacts from existing AI use cases can be reused. The platform also provides a foundation for future AI governance and assurance, enabling consistent controls, monitoring and evidence across the AI lifecycle as regulatory expectations continue to evolve.

Building more trusted AI on a unified Lakehouse

With a solid Lakehouse architecture and Audit data consolidated into a single, governed platform, KPMG UK is well positioned to build trusted AI and GenAI solutions. AI agents often fail not because they “cannot reason”, but because they are not given the right, complete or well‑governed data in the first place. A Lakehouse tackles this directly by making high‑quality, auditable data the default input to every AI workflow.

On top of this foundation, KPMG UK can design AI agents that work from a single source of truth, with lineage, access controls, and quality checks enforced at the data layer rather than in each model. This means retrieval‑augmented generation (RAG), scenario analysis, and intelligent assistants for auditors can all draw from the same curated tables and governed views. By aligning data architecture, model development, and controls with KPMG UK’s Trusted AI principles, AI outputs can be traced back to governed data sources, supporting explainability, auditability, and confidence in use.
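The pattern described above, with access control enforced at the data layer and every retrieved passage traceable to a governed source, can be sketched in a few lines. This is a toy illustration under stated assumptions: simple keyword overlap stands in for embedding or vector search, and the `Chunk` type, table names and the `retrieve` and `build_prompt` helpers are hypothetical, not KPMG UK's implementation or a Databricks API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    source_table: str  # governed table the text was read from (lineage)
    row_id: str        # key of the source row, used for citation


def retrieve(query: str, chunks: list[Chunk], allowed_tables: set[str], k: int = 3) -> list[Chunk]:
    """Keyword-overlap retrieval limited to tables the caller may read.

    Access control filters the candidate set *before* ranking, so text from
    tables the user is not entitled to never reaches the model.
    """
    terms = set(query.lower().split())
    visible = [c for c in chunks if c.source_table in allowed_tables]
    scored = [(len(terms & set(c.text.lower().split())), c) for c in visible]
    # Keep only chunks sharing at least one term; stable sort keeps input order on ties.
    ranked = sorted((pair for pair in scored if pair[0] > 0), key=lambda pair: -pair[0])
    return [chunk for _, chunk in ranked[:k]]


def build_prompt(query: str, hits: list[Chunk]) -> str:
    """Number each retrieved chunk and keep its source so answers can cite it."""
    context = "\n".join(
        f"[{i}] {c.text} (source: {c.source_table}, row {c.row_id})"
        for i, c in enumerate(hits, start=1)
    )
    return f"Answer using only the numbered sources and cite them.\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    chunks = [
        Chunk("revenue recognised in Q4 increased", "audit.fin.notes", "n1"),
        Chunk("board minutes revenue discussion", "audit.restricted.minutes", "m7"),
        Chunk("lease liabilities disclosed", "audit.fin.notes", "n2"),
    ]
    hits = retrieve("why did revenue increase", chunks, {"audit.fin.notes"})
    print(build_prompt("why did revenue increase", hits))
```

Filtering before ranking, rather than asking the model to ignore restricted text, mirrors the idea of enforcing controls at the data layer instead of in each model.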
