Introducing Data Clean Rooms for the Lakehouse

by Matei Zaharia, Itai Weiss, Steve Mahoney, Sachin Thakur, Dan Morris and Jay Bhankharia

We are excited to announce data clean rooms for the Lakehouse, allowing businesses to easily collaborate with their customers and partners on any cloud in a privacy-safe way. Participants in the data clean rooms can share and join their existing data and run complex workloads on it in any language (Python, R, SQL, Java, or Scala) while maintaining data privacy.

With demand for external data greater than ever, organizations are looking for ways to securely exchange their data and consume external data to foster data-driven innovation. Historically, organizations have used data-sharing solutions to share data with their partners and relied on mutual trust to preserve data privacy. But organizations relinquish control over the data once it is shared and have little to no visibility into how their partners consume it across various platforms. This exposes them to potential data misuse and data privacy breaches. With stringent data privacy regulations, it is imperative for organizations to have control over, and visibility into, how their sensitive data is consumed. As a result, organizations need a secure, controlled, and private way to collaborate on data, and this is where data clean rooms come into the picture.

This blog post discusses what data clean rooms are, why demand for them is growing, and our vision for a scalable data clean room on the Databricks Lakehouse Platform.

What is a Data Clean Room and why does it matter for your business?

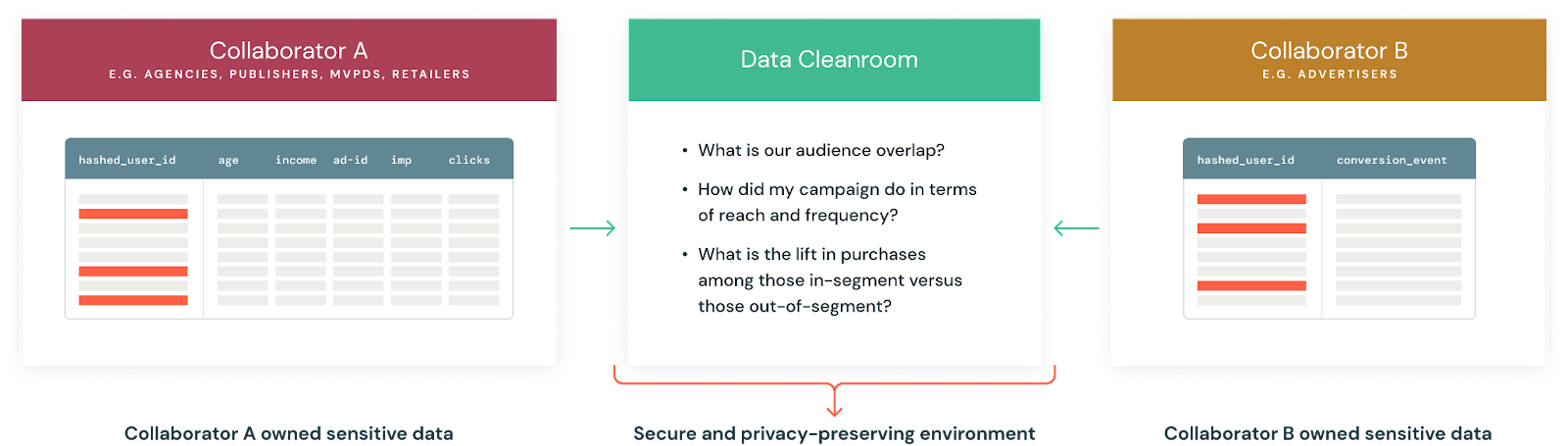

A data clean room provides a secure, governed, and privacy-safe environment in which multiple participants can join their first-party data and analyze it without the risk of exposing their data to other participants. Participants have full control of their data and can decide which participants can run which analyses on it, without exposing any sensitive data such as personally identifiable information (PII).
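The idea of joining two parties' data while releasing only aggregates can be made concrete with a small example. The following is a minimal Python sketch under stated assumptions, not how Databricks implements clean rooms: the function names, the salted SHA-256 pseudonymization, and the minimum group size of 3 are all hypothetical choices made for illustration. An advertiser's audience segments are joined with a retailer's purchases on pseudonymized identifiers, and only per-segment customer counts and sales totals that cover at least `min_group_size` people are returned; no row-level data leaves the function.

```python
import hashlib
from collections import Counter

# Hypothetical illustration only: not a Databricks or Delta Sharing API.
# Aggregates covering fewer people than this are suppressed.
MIN_GROUP_SIZE = 3


def pseudonymize(email: str, salt: str) -> str:
    """Replace a raw identifier with a salted SHA-256 hex digest."""
    normalized = email.strip().lower()
    return hashlib.sha256((salt + normalized).encode("utf-8")).hexdigest()


def overlap_by_segment(advertiser_rows, retailer_rows, salt, min_group_size=MIN_GROUP_SIZE):
    """Join two parties' rows on pseudonymized IDs and release only aggregates.

    advertiser_rows: iterable of (email, segment) pairs
    retailer_rows:   iterable of (email, purchase_amount) pairs
    Returns {segment: (matched_customers, total_sales)} for segments whose
    matched customer count is at least min_group_size.
    """
    # Each party's identifiers are pseudonymized before the join.
    ad_segments = {pseudonymize(e, salt): seg for e, seg in advertiser_rows}
    sales = Counter()
    for e, amount in retailer_rows:
        sales[pseudonymize(e, salt)] += amount

    # Count matched customers and sum their sales per advertiser segment.
    matched = Counter()
    totals = Counter()
    for pid, seg in ad_segments.items():
        if pid in sales:
            matched[seg] += 1
            totals[seg] += sales[pid]

    # Only aggregates above the threshold are released.
    return {
        seg: (matched[seg], totals[seg])
        for seg in matched
        if matched[seg] >= min_group_size
    }


advertiser = [("a@x.com", "sports"), ("b@x.com", "sports"),
              ("c@x.com", "sports"), ("d@x.com", "travel")]
retailer = [("A@x.com ", 10), ("b@x.com", 5), ("c@x.com", 7),
            ("c@x.com", 3), ("d@x.com", 20)]
print(overlap_by_segment(advertiser, retailer, salt="demo"))  # → {'sports': (3, 25)}
```

The "travel" segment is dropped because it matches only one customer. Salted hashing is pseudonymization rather than anonymization, so in a platform like the one described here the stronger protection comes from running the join on trusted compute that participants cannot inspect, combined with output rules such as this threshold.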

Data clean rooms open up a broad array of use cases across industries. For example, consumer packaged goods (CPG) companies can measure sales uplift by joining their first-party advertising data with the point-of-sale (POS) transaction data of their retail partners. In the media industry, advertisers and marketers can deliver more targeted ads, with broader reach, better segmentation, and greater transparency into ad effectiveness, while safeguarding data privacy. Financial services companies can collaborate across the value chain to establish proactive fraud detection or anti-money laundering strategies. In fact, IDC predicts that by 2024, 65% of G2000 enterprises will form data-sharing partnerships with external stakeholders via data clean rooms to increase interdependence while safeguarding data privacy.

Let's look at some of the compelling reasons driving the demand for clean rooms:

Rapidly changing security, compliance, and privacy landscape: Stringent data privacy regulations such as GDPR and CCPA, along with sweeping changes in third-party measurement, have transformed how organizations collect, use, and share data, particularly for advertising and marketing use cases. For example, Apple's App Tracking Transparency (ATT) framework lets users of Apple devices easily opt out of app tracking. Google also plans to phase out support for third-party cookies in Chrome by late 2023. As these privacy laws and practices evolve, demand for data clean rooms is likely to rise as the industry moves to new PII-based identifiers such as UID 2.0. Organizations will look for new ways to join data with their partners in a privacy-centric way to achieve their business objectives in a cookieless world.

Collaboration in a fragmented data ecosystem: Today, consumers have more options than ever for where, when, and how they engage with content. As a result, consumers' digital footprints are fragmented across different platforms, so companies need to collaborate with their partners to build a unified view of their customers' needs. Clean rooms facilitate this cross-organization collaboration by giving each organization a secure and private way to combine its data with partners' data to unlock new insights or capabilities.

New ways to monetize data: Most organizations either already have, or are looking to develop, monetization strategies for their existing data or IP. Under today's privacy laws, companies will look for every possible advantage in monetizing their data without the risk of breaking privacy rules. This creates an opportunity for data vendors and publishers to join data for large-scale analytics without giving other parties direct access to it.

Existing data clean room solutions come with big drawbacks

As organizations explore clean room solutions, they run into glaring shortcomings that keep existing offerings from realizing the full potential of clean rooms and from meeting organizations' business requirements.

Data movement and replication: Existing data clean room vendors require participants to move their data into the vendor's platform, which results in platform lock-in and added data storage costs for participants. Additionally, it is time-consuming for participants to prepare the data in a standardized format before performing any analysis on the aggregated data. Furthermore, participants have to replicate data across clouds and regions to collaborate with participants in other clouds and regions, resulting in operational and cost overhead.

Restricted to SQL: Existing clean room solutions don't provide much flexibility to run arbitrary workloads and analyses and are often restricted to simple SQL statements. While SQL is powerful and absolutely needed for clean rooms, there are times when you require complex computations such as machine learning, integration with APIs, or other analysis workloads where SQL just won't cut it.

Hard to scale: Most existing clean room solutions are tied to a single vendor and cannot extend collaboration beyond two participants at a time. For example, an advertiser might want a detailed view of their ad performance across different platforms, which requires analyzing aggregated data from multiple data publishers. With collaboration limited to two participants, organizations get partial insights on one clean room platform, then end up moving their data to another clean room vendor and incurring the operational overhead of manually collating those partial insights.

Deploy a scalable and flexible data clean room solution with the Databricks Lakehouse Platform

Databricks Lakehouse Platform provides a comprehensive set of tools to build, serve, and deploy a scalable and flexible data clean room based on your data privacy and governance requirements.

Secure data sharing with no replication: With Delta Sharing, clean room participants can securely share data from their data lakes with other participants without any data replication across clouds or regions. Your data stays with you and is not locked into any platform. Additionally, clean room participants can centrally audit and monitor the usage of their data.

Full support to run arbitrary workloads and languages: The Databricks Lakehouse Platform gives clean room participants the flexibility to run complex computations, such as machine learning or data workloads, on the data in any language: SQL, R, Scala, Java, or Python.

Easily scalable with guided onboarding experience: Clean rooms on the Databricks Lakehouse Platform are easily scalable to multiple participants on any cloud or region. It is easy to get started and guide participants through common use cases using predefined templates (e.g., jobs, workflows, dashboards), reducing time to insights.

Privacy-safe with fine-grained access controls: With Unity Catalog, you can enable fine-grained access controls on the data and meet your privacy requirements. Integrated governance allows participants to have full control over queries or jobs that can be executed on their data. All the queries or jobs on the data are executed on Databricks-hosted trusted compute. Participants never get access to the raw data of other participants, ensuring data privacy. Participants can also leverage open-source or third-party differential privacy frameworks, making your clean room future-proof.
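Differential privacy protects individuals by adding calibrated random noise to query results, so the released output reveals little about any single person. As a hedged illustration, here is a minimal, standard-library-only Python sketch of the Laplace mechanism, one classic technique that differential privacy frameworks implement, applied to a count. The `dp_count` helper and its parameters are hypothetical; this is not the API of any particular framework or of Databricks. A count has sensitivity 1 (adding or removing one person changes it by at most 1), so Laplace noise with scale 1/ε gives ε-differential privacy for that single release.

```python
import random


def dp_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Hypothetical helper: release a count via the Laplace mechanism.

    A counting query has sensitivity 1, so noise drawn from
    Laplace(0, 1/epsilon) gives epsilon-differential privacy.
    Note: random.Random is not cryptographically secure; production DP
    libraries also guard against floating-point side channels.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    scale = 1.0 / epsilon
    # expovariate takes a rate; rate 1/scale gives mean `scale`.
    # The difference of two independent such exponentials is Laplace(0, scale).
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


# Example: smaller epsilon means more noise and stronger privacy.
rng = random.Random(42)
print(dp_count(1234, epsilon=0.5, rng=rng))
print(dp_count(1234, epsilon=5.0, rng=rng))
```

Each noisy release consumes part of a privacy budget: answering k such queries at ε each gives at most kε in total, which is one reason clean room operators typically limit which queries participants may run.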

To learn more about data clean rooms on Databricks Lakehouse, please reach out to your Databricks account representatives.
