Launching a New Files Experience for the Databricks Workspace

Work with files and notebooks together in the same familiar editor

by Jason Messer, Austin Ford, Weston Hutchins, Jerry James and Jim Allen Wallace

Today, we are excited to announce the general availability of files throughout the Databricks workspace. Files support allows Databricks users to store Python source code, small sample data, or any other type of file content directly alongside their notebooks. Databricks is also making generally available a new rich file editor that supports inline code execution. The new editor brings many Notebook features to the file editor: autocomplete-as-you-type, object inspection, code folding, and more, offering a more powerful editing experience.

Files support in the Workspace extends capabilities users will be familiar with from Databricks Repos, making those available throughout the entire platform, whether or not users are working with version control systems.

Files enable software development best practices

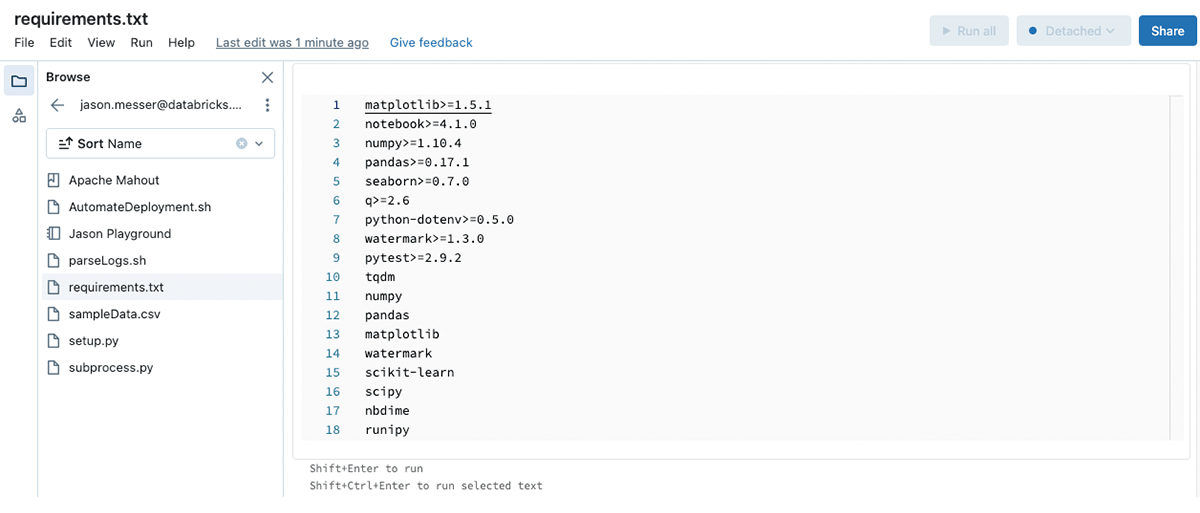

Workspace files expand the surface area in which you can apply software development best practices, such as modular code, unit testing, library and artifact reuse, and specifying software dependencies as code. Historically, Databricks Workspaces supported only notebooks and folders containing notebooks, but now you can create and store files smaller than 200 MB in the Workspace. These can include source code and associated requirements (e.g., Python scripts, modules, requirements.txt files, or .whl files), small sample data (e.g., .csv files), and more.

Benefits of using workspace files include:

- Modular code and reuse: Files support enables you to refactor large, many-celled notebooks into smaller, more understandable modules. Your notebooks can then reference these modules with an `import` statement.

- Testing: You can create unit tests for your code in notebooks or modules and package these tests as files alongside the source code.

- Initialization scripts: You can store cluster-scoped initialization scripts in workspace files. These will be access-controlled so that only authorized users may modify them.

- Library and artifact reuse: You can store Wheel, Jar, and shared Python library source files with your notebooks, making it easier to share, distribute, and reproduce the work in these notebooks.

- Improved software dependency management: With requirements.txt files, you can capture the software dependencies of notebooks or other Python code in the Workspace in a single file, so that reproducing that environment later takes a single `%pip install -r requirements.txt` call.
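The modular-code and testing workflow described above can be sketched as follows. This is a minimal illustration, not Databricks' own example: `utils.py`, `test_utils.py`, and `clean_column_names` are hypothetical names, and the two files are shown in one block only so it runs standalone. In the Workspace, a notebook in the same folder would load the module with `from utils import clean_column_names`, assuming that folder is on the notebook's Python path.

```python
import unittest


# --- utils.py (hypothetical module stored as a workspace file) ---
def clean_column_names(columns):
    """Normalize column names: trim whitespace, lowercase, spaces -> underscores."""
    return [name.strip().lower().replace(" ", "_") for name in columns]


# --- test_utils.py (hypothetical test file stored alongside utils.py) ---
# As a separate file, this would begin with: from utils import clean_column_names
class CleanColumnNamesTest(unittest.TestCase):
    def test_normalizes_names(self):
        self.assertEqual(
            clean_column_names([" First Name", "AGE "]),
            ["first_name", "age"],
        )

    def test_empty_input(self):
        self.assertEqual(clean_column_names([]), [])


if __name__ == "__main__":
    # exit=False keeps an interactive session (such as a notebook) alive after the run
    unittest.main(argv=["ignored"], exit=False)
```

Keeping the logic in a plain function, rather than in a notebook cell, is what makes it importable and testable this way.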

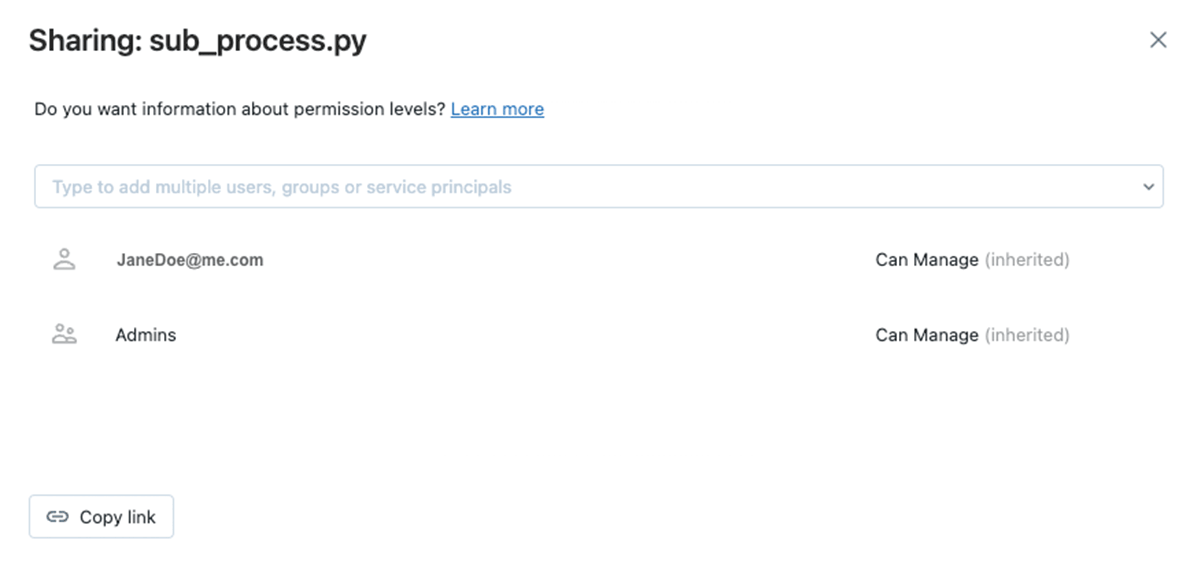

Secure access to files or folders with Access Control Lists

Secure access to individual files or folders using that object's Access Control Lists (ACLs). You can restrict access to individual files or folders to only the users or groups that should have access. ACLs can be managed directly from the Workspace browser or from within the file or folder itself.

Boost your productivity with powerful file editing and execution

The updated file editor unifies the authoring experiences for files and notebooks by replacing the previous file editing experience with the same one used in the Notebook. You now have a single experience whether working in notebooks or files.

The new editor offers improved programming ergonomics including:

- Autocomplete-as-you-type: With the new editor, the autocomplete suggestion box will appear automatically as you type.

- Object inspection: Hover over a variable or other object to see its details.

- Code folding: Code folding lets you temporarily hide sections of code, so you can focus only on the parts of long code blocks you are working on.

- Side-by-side diffs in version history: When displaying a previous version of your file, the new editor will display side-by-side diffs to easily see what changed.

We will also soon release a bottom-docked output window for the file editor, so you don't need to scroll to see your execution output. Keep an eye on our release notes for an update when it is released.

Try it today

You can now use and reference any file type in the Workspace without additional setup and without adopting source control. Simply upload a file (smaller than 200 MB) and reference it in your code. Workspace files are enabled by default for Databricks Runtime 11.2 and above, and support for cluster-scoped init scripts is enabled on all current Databricks Runtimes. Learn more in our developer documentation.
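As a rough sketch of "upload a file and reference it in your code," the snippet below reads a small CSV stored next to a notebook using only the Python standard library. The file name `sample_data.csv` and the function `load_sample_rows` are hypothetical. The default relative path assumes the notebook's working directory is the folder that contains it, which may depend on your runtime; if it is not, pass the file's absolute workspace path instead.

```python
import csv


def load_sample_rows(path="sample_data.csv"):
    """Read a small CSV file into a list of dicts keyed by its header row.

    `sample_data.csv` is a hypothetical file uploaded alongside the notebook.
    The relative default assumes the working directory is the notebook's folder;
    otherwise, pass an absolute path to the file.
    """
    with open(path, newline="") as f:
        return list(csv.DictReader(f))
```

In a notebook cell, `rows = load_sample_rows()` would return one dictionary per data row, with all values read as strings.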
