What is a Digital Twin?

Virtual representation of physical assets updated with real-time IoT data, enabling predictive analysis, optimization, and scenario testing

- A digital twin is a virtual representation of a physical object or system that stays in sync with its real-world counterpart through live data.

- Organizations use digital twins to monitor performance, simulate scenarios and optimize operations across assets, factories and supply chains.

- The Databricks Lakehouse Platform helps build digital twins by unifying streaming sensor data, historical information and AI models in one environment.

What is a Digital Twin?

The classical definition of a digital twin comes from IBM: "A digital twin is a virtual model designed to accurately reflect a physical object." For a discrete or continuous manufacturing process, a digital twin gathers system and process state data from IoT sensors (operational technology, or OT, data) together with enterprise data (information technology, or IT, data) to form a virtual model. That model is then used to run simulations, study performance issues, and generate insights.
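The loop described above (sync a virtual model with OT sensor readings, add enterprise context, then run simulations) can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not a Databricks API: the `TankTwin` class, its field names, and the constant-rate projection are all hypothetical, chosen only to show a virtual model that stays in sync with sensor data and answers a what-if question.

```python
from dataclasses import dataclass, field


@dataclass
class TankTwin:
    """Hypothetical digital twin of a single storage tank (illustrative only)."""

    capacity_l: float                # engineering/enterprise (IT) data: tank capacity in liters
    level_l: float = 0.0             # OT data: latest level reported by a sensor
    outflow_l_per_h: float = 0.0     # OT data: latest outflow rate reported by a flow meter
    history: list = field(default_factory=list)  # raw readings kept for later analysis

    def ingest(self, reading: dict) -> None:
        """Sync the virtual state with one sensor reading from the physical tank."""
        level = reading["level_l"]
        if not 0.0 <= level <= self.capacity_l:
            raise ValueError(f"level {level} outside 0..{self.capacity_l} L")
        self.level_l = level
        self.outflow_l_per_h = reading["outflow_l_per_h"]
        self.history.append(reading)

    def hours_until(self, threshold_l: float, extra_outflow_l_per_h: float = 0.0) -> float:
        """What-if simulation: hours until the level falls to threshold_l.

        extra_outflow_l_per_h models a scenario, e.g. additional draw implied
        by planned orders from an enterprise system. Assumes a constant rate.
        """
        if self.level_l <= threshold_l:
            return 0.0
        rate = self.outflow_l_per_h + extra_outflow_l_per_h
        if rate <= 0:
            return float("inf")
        return (self.level_l - threshold_l) / rate


twin = TankTwin(capacity_l=10_000.0)
twin.ingest({"level_l": 6_000.0, "outflow_l_per_h": 250.0})
print(twin.hours_until(1_000.0))                               # 20.0 hours at the current rate
print(twin.hours_until(1_000.0, extra_outflow_l_per_h=250.0))  # 10.0 hours if demand doubles
```

A production twin would replace the constant-rate projection with a physics-based or ML model, but the shape stays the same: ingest keeps state current, and simulation runs against that state without touching the physical asset.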

The concept of digital twins is not new. The first reported application was more than twenty-five years ago, during the early phases of foundation and cofferdam construction for the London Heathrow Express facilities, to monitor and predict foundation borehole grouting. Since then, advances in edge computing, AI, data connectivity, 5G, and the Internet of Things (IoT) have made digital twins cost-effective, and they are now an imperative for today's data-driven businesses.

Digital twins are now so ingrained in manufacturing that the global digital twin market is forecast to reach $48B in 2026, up from $3.1B in 2020, a CAGR of 58%, riding the wave of Industry 4.0.
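The growth figures can be cross-checked with the standard compound annual growth rate formula, (end / start)^(1 / years) - 1. Using only the numbers quoted above, six years of growth from $3.1B to $48B works out to about 57.9% per year, consistent with the stated 58%.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.58 means 58% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size in billions of USD, 2020 -> 2026 (figures from the text above).
rate = cagr(3.1, 48.0, 2026 - 2020)
print(f"Implied CAGR: {rate:.1%}")  # prints: Implied CAGR: 57.9%
```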

Today's manufacturers are expected to streamline and optimize every process in their value chain, from product development and design, through operations and supply chain optimization, to gathering customer feedback, so they can respond swiftly to rapidly growing demand. The digital twin category is broad and addresses a multitude of challenges within manufacturing, logistics, and transportation.

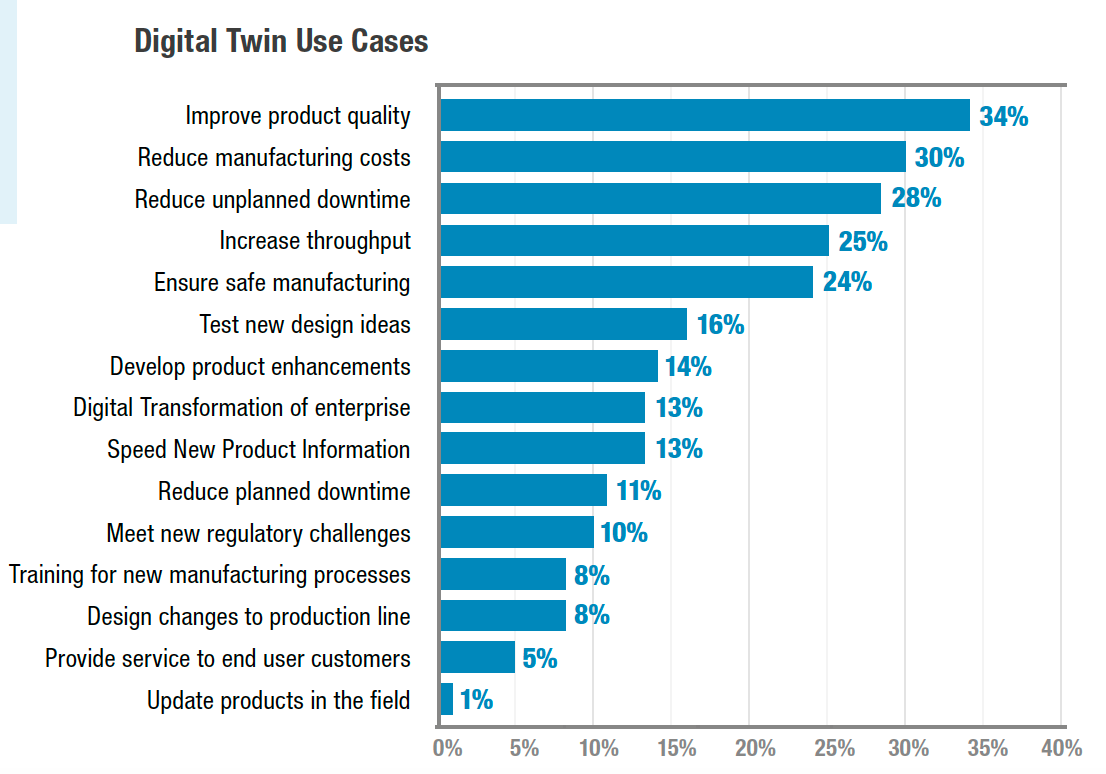

The most common manufacturing challenges that digital twins address are:

- Product designs are more complex, resulting in higher costs and increasingly long development times

- The supply chain is opaque

- Production lines are not optimized: performance varies, defects go undetected, and operating costs are hard to project

- Poor quality management: over-reliance on theory, with quality managed separately by individual departments

- Reactive maintenance costs are too high, resulting in excessive downtime or process disruptions

- Poor collaboration between departments

- Little visibility into customer demand and no way to gather real-time feedback

Why is this important?

Industry 4.0 and the subsequent Intelligent Supply Chain efforts have made significant strides in improving operations and building agile supply chains, but these efforts would have come at significant cost without digital twin technology. Can you imagine the cost of changing an oil refinery's crude distillation unit process conditions to improve diesel output one week and gasoline output the next, to address changes in demand and ensure maximum economic value? Can you imagine replicating even a simple supply chain to model risk? It is financially and physically impossible to build a physical twin of a supply chain.

Let's look at the benefits that digital twins deliver to the manufacturing sector:

- Product design and development cost less and take less time, because iterative simulations under multiple constraints deliver the best or most optimized design - all commercial aircraft are designed using digital twins

- Digital twins show how long inventory will last, when to replenish, and how to minimize supply chain disruptions - the oil and gas industry uses supply-chain-oriented digital twins to reduce bottlenecks in storage and midstream delivery, schedule tanker offloads, and model demand with externalities

- Continuous quality checks on produced items, with ML/AI-generated feedback, pre-emptively improve product quality - automotive final paint inspection is performed with computer vision built on top of digital twin technology

- Digital twins provide real-time feedback that strikes the sweet spot between replacing a part before the process degrades or breaks down and using the component to its fullest - digital twins are the backbone of an asset performance management suite

- Digital twins keep multiple departments in sync by providing the necessary instructions modularly to attain a required throughput - digital twins are the backbone of kaizen events that optimize manufacturing process flow

- Customer feedback loops can be modeled from inputs such as point-of-sale customer behavior, buying preferences, or product performance, and then integrated into the product development process, forming a closed loop that improves product design
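The inventory benefit in the list above (knowing how long inventory will last and when to replenish) rests on simple quantities that a supply chain twin can recompute whenever new demand data arrives. The sketch below is a minimal illustration assuming the textbook reorder-point formula with independent, roughly normal daily demand and a fixed supplier lead time; the function names, the sample demand, and the 1.65 service-level factor (about 95%) are hypothetical choices, not part of any Databricks product.

```python
import math
from statistics import mean, stdev


def days_of_cover(on_hand: float, daily_demand: list[float]) -> float:
    """Days the current stock lasts at the average observed daily demand."""
    avg = mean(daily_demand)
    return math.inf if avg == 0 else on_hand / avg


def reorder_point(daily_demand: list[float], lead_time_days: float, z: float = 1.65) -> float:
    """Textbook reorder point: expected demand over the lead time plus safety stock.

    Safety stock = z * stdev(daily demand) * sqrt(lead time), which assumes
    independent, roughly normal daily demand and a fixed supplier lead time.
    z = 1.65 corresponds to roughly a 95% cycle service level.
    Requires at least two demand observations (for the standard deviation).
    """
    avg = mean(daily_demand)
    safety_stock = z * stdev(daily_demand) * math.sqrt(lead_time_days)
    return avg * lead_time_days + safety_stock


# Hypothetical recent daily demand (units/day), e.g. streamed from order data.
demand = [100, 120, 80, 110, 90]
print(days_of_cover(1_500, demand))          # 15.0 days of cover
print(round(reorder_point(demand, 4), 1))    # replenish when stock drops to about 452.2 units
```

Because both values depend only on recent demand and current stock, a twin fed by streaming data can refresh them continuously rather than in a periodic planning run.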


What are Databricks' differentiated capabilities?

- The Databricks Lakehouse uses technologies including Delta Lake, Delta Live Tables (DLT), Auto Loader, and Photon to make data available for real-time decisions.

- Lakehouse for Manufacturing supports the largest data jobs at near real-time intervals. For example, customers are ingesting nearly 400 million events per day from transactional log systems at 15-second intervals. By contrast, because data processing disrupts reporting and analysis, most retail customers load data into their data warehouse in a nightly batch, and some companies load data only weekly or monthly.

- A Lakehouse event-driven architecture provides a simpler way to ingest and process batch and streaming data than legacy approaches such as lambda architectures. This architecture handles change data capture (CDC) and provides ACID transactions.

- DLT simplifies the creation of data pipelines and automatically builds in lineage to assist with ongoing management.

- The Lakehouse supports real-time stream ingestion and analytics on streaming data. Data warehouses, by contrast, require data to be extracted, transformed, and loaded, and then extracted again from the warehouse, before any analytics can be performed.

- Photon provides record-setting query performance, enabling users to query even the largest of data sets to power real-time decisions in BI tools.
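To put the figure of nearly 400 million events per day at 15-second intervals in perspective, here is a back-of-the-envelope calculation. It assumes events arrive evenly across the day; real log and sensor traffic is burstier, so peak rates will be higher than these averages.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def per_interval_load(events_per_day: float, interval_s: float) -> tuple[float, float]:
    """Return (average events per micro-batch, average events per second)."""
    batches_per_day = SECONDS_PER_DAY / interval_s
    return events_per_day / batches_per_day, events_per_day / SECONDS_PER_DAY


per_batch, per_second = per_interval_load(400_000_000, 15)
print(f"{per_batch:,.0f} events per 15 s batch, {per_second:,.0f} events/s on average")
# prints: 69,444 events per 15 s batch, 4,630 events/s on average
```

A 15-second trigger yields 5,760 micro-batches per day, so each batch carries roughly 69,000 events on average.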
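The change data capture point above can be illustrated without any Databricks dependency. The following is a plain-Python sketch of the idea, not Delta Lake or DLT code: it assumes each change event carries a primary key, a source sequence number, and an operation (all hypothetical field names), and it applies events in sequence order so that an older record arriving late does not overwrite newer state. On Databricks, equivalent work would typically be done with a Delta Lake `MERGE INTO` statement or DLT's `APPLY CHANGES`, which also provide the ACID guarantees this in-memory version lacks.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ChangeEvent:
    key: str                      # primary key, e.g. an asset or sensor ID (hypothetical)
    seq: int                      # monotonically increasing sequence number from the source
    op: str                       # "upsert" or "delete"
    value: Optional[dict] = None  # new row contents for upserts


def apply_changes(snapshot: dict, events: list[ChangeEvent]) -> dict:
    """Apply one batch of CDC events to a keyed snapshot and return the new snapshot.

    Within the batch, events are applied in sequence order, so an older event
    that arrives late cannot overwrite a newer one. The input is not modified.
    """
    result = dict(snapshot)
    for ev in sorted(events, key=lambda e: e.seq):
        if ev.op == "upsert":
            result[ev.key] = ev.value
        elif ev.op == "delete":
            result.pop(ev.key, None)
        else:
            raise ValueError(f"unknown operation: {ev.op!r}")
    return result


snapshot = {"pump-1": {"temp_c": 70}}
events = [
    ChangeEvent("pump-2", 2, "upsert", {"temp_c": 65}),
    ChangeEvent("pump-1", 3, "delete"),
    ChangeEvent("pump-1", 1, "upsert", {"temp_c": 72}),  # arrives last but is the oldest change
]
print(apply_changes(snapshot, events))  # {'pump-2': {'temp_c': 65}}
```

Sorting by sequence number rather than arrival order is the key design choice: it makes the result deterministic regardless of how the stream delivers events within a batch.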
