What is TensorFlow?

Google's open-source machine learning framework that powers deep neural networks for image recognition, natural language processing, and numerical computation at scale

- TensorFlow is Google's open source library for numerical computation and large scale machine learning that powers deep learning models for tasks such as image recognition, word embeddings and natural language processing.

- TensorFlow runs on CPUs, GPUs and Google TPUs, provides high level and low level APIs in languages such as Python, C++ and Java, and is widely adopted thanks to its active community and strong production tooling.

- Databricks Runtime for Machine Learning includes TensorFlow and TensorBoard out of the box so teams can quickly spin up clusters, train models and visualize runs without separate installation.

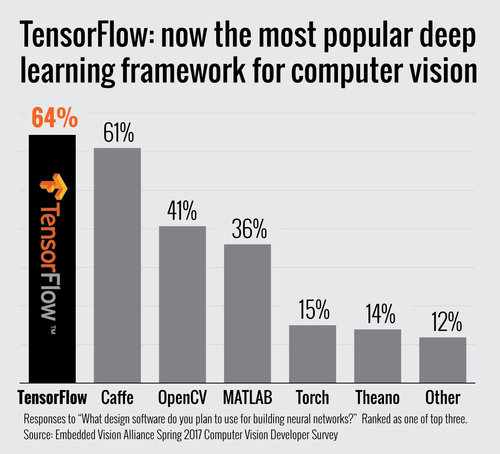

In November 2015, Google released its open-source framework for machine learning and named it TensorFlow. It supports deep learning, neural networks, and general numerical computation on CPUs, GPUs, and clusters of GPUs. One of the biggest advantages of TensorFlow is its open-source community of developers, data scientists, and data engineers who contribute to its repository. The current version of TensorFlow can be found on GitHub along with release notes. TensorFlow is one of the most widely used machine learning frameworks today.

What is TensorFlow?

TensorFlow is an open-source library for numerical computation, large-scale machine learning, deep learning, and other statistical and predictive analytics workloads. This type of technology makes it faster and easier for developers to implement machine learning models, as it assists the process of acquiring data, serving predictions at scale, and refining future results.

Okay, so exactly what does TensorFlow do? It can train and run deep neural networks for things like handwritten digit classification, image recognition, word embeddings, and natural language processing (NLP). The code contained in its software libraries can be added to any application to help it learn these tasks.

TensorFlow applications can run on either conventional CPUs (central processing units) or GPUs (higher-performance graphics processing units). Because TensorFlow was developed by Google, it also operates on the company’s own tensor processing units (TPUs), which are specifically designed to speed up TensorFlow jobs.

You might also be wondering: what language is TensorFlow written in? Its core is implemented in C++, and Python serves as the front-end API for building applications with the framework. TensorFlow also has wrappers in several other languages, including C++ and Java. This means you can train and deploy your machine learning model quickly, regardless of the language or platform you use.


TensorFlow history

Google first released TensorFlow in 2015 under the Apache 2.0 license. Its predecessor was a closed-source Google framework called DistBelief, which provided a testbed for deep learning implementation.

Google's first TPUs were detailed publicly in 2016, and used internally in conjunction with TensorFlow to power some of the company’s applications and online services. This included Google’s RankBrain search algorithm and the Street View mapping technology.

In early 2017, Google TensorFlow reached Release 1.0.0 status. A year later, Google made the second generation of TPUs available to Google Cloud Platform users for training and running their own machine learning models.

Google released TensorFlow 2.0 at the end of September 2019. It had taken user feedback into account to make various improvements to the framework and make it easier to work with. For instance, TensorFlow 2.0 uses the relatively simple Keras API for model training.

Who created TensorFlow?

As you now know, Google developed TensorFlow, and continues to own and maintain the framework. It was created by the Google Brain team of researchers, who carry out fundamental research in order to advance key areas of machine intelligence and promote a better theoretical understanding of deep learning.

The Google Brain team designed TensorFlow to be able to work independently from Google’s own computing infrastructure, but it gains many advantages from being backed by a commercial giant. As well as funding the project’s rapid development, over the years Google has also improved TensorFlow to ensure that it is easy to deploy and use.

Google chose to make TensorFlow an open source framework with the aim of accelerating the development of AI. As a community-based project, all users can help to improve the technology — and everyone shares the benefits.

How does TensorFlow work?

TensorFlow combines various machine learning and deep learning (or neural network) models and algorithms, and makes them useful by way of a common interface.

It enables developers to create dataflow graphs in which computational nodes represent mathematical operations. Each connection (or edge) between nodes carries a multidimensional array of data, known as a tensor.
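To make the graph idea concrete, here is a minimal, framework-free sketch in plain Python. It is not TensorFlow's implementation: the `Node` class and the `constant`, `matmul`, and `add` helpers are hypothetical names chosen for illustration, and nested lists stand in for tensors.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence

# Stand-in for a rank-2 tensor: a list of rows (illustrative only).
Tensor = List[List[float]]


@dataclass
class Node:
    """One operation in the graph; its inputs are the edges that carry tensors."""
    name: str
    op: Callable[..., Tensor]
    inputs: Sequence["Node"] = ()

    def evaluate(self) -> Tensor:
        # Evaluate upstream nodes first, then apply this node's operation.
        args = [node.evaluate() for node in self.inputs]
        return self.op(*args)


def constant(name: str, value: Tensor) -> Node:
    """A source node that simply emits a fixed tensor."""
    return Node(name, lambda: value)


def matmul(a: Tensor, b: Tensor) -> Tensor:
    """Matrix product of two rank-2 tensors."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def add(a: Tensor, b: Tensor) -> Tensor:
    """Element-wise sum of two tensors with the same shape."""
    return [[x + y for x, y in zip(row_a, row_b)]
            for row_a, row_b in zip(a, b)]


# Build the graph for y = x @ w + b. Nothing is computed yet.
x = constant("x", [[1.0, 2.0]])
w = constant("w", [[3.0, 4.0], [5.0, 6.0]])
b = constant("b", [[1.0, 1.0]])
product = Node("matmul", matmul, (x, w))
y = Node("add", add, (product, b))

# Running the output node pulls tensors through every edge it depends on.
result = y.evaluate()
print(result)
```

Separating graph construction from evaluation is the design choice worth noticing here: the structure can be inspected, optimized, or shipped to another device before any arithmetic happens.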

While Python provides the front-end API for TensorFlow, the actual math operations are not performed in Python. Instead, high-performance C++ binaries perform these operations behind the scenes. Python directs traffic between the pieces and hooks them together via high-level programming abstractions.

TensorFlow applications can be run on almost any target that’s convenient, including iOS and Android devices, local machines, or a cluster in the cloud — as well as CPUs or GPUs (or Google’s custom TPUs if you’re using Google Cloud).

TensorFlow includes sets of both high-level and low-level APIs. Google recommends the high-level APIs for simplifying data pipeline development and application programming, but the low-level APIs (called TensorFlow Core) are useful for debugging applications and experimentation.

What is TensorFlow used for? What can you do with TensorFlow?

TensorFlow is designed to streamline the process of developing and executing advanced analytics applications for users such as data scientists, statisticians, and predictive modelers.

Businesses of varying types and sizes widely use the framework to automate processes and develop new systems, and it’s particularly useful for very large-scale parallel processing applications such as neural networks. It’s also been used in experiments and tests for self-driving vehicles.

As you’d expect, TensorFlow’s parent company Google also uses it for in-house operations, such as improving the information retrieval capabilities of its search engine, and powering applications for automatic email response generation, image classification, and optical character recognition.

One of the advantages of TensorFlow is that it provides abstraction, which means developers can focus on the overall logic of the application while the framework takes care of the fine details. It’s also convenient for developers who need to debug and gain introspection into TensorFlow apps.

The TensorBoard visualization suite has an interactive, web-based dashboard that lets you inspect and profile the way graphs run. There’s also an eager execution mode that allows you to evaluate and modify each graph operation separately and transparently, rather than creating the entire graph as a single opaque object and evaluating it all at once.
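The contrast between eager and graph execution can be sketched in a few lines. This example assumes TensorFlow 2.x, where eager execution is the default and `tf.function` traces a Python function into a graph. The helper name `eager_vs_graph` is hypothetical, and it returns `None` when TensorFlow is missing or eager mode is off.

```python
import importlib.util


def eager_vs_graph():
    """Compute x @ w eagerly and through a traced graph; return both results."""
    if importlib.util.find_spec("tensorflow") is None:
        return None  # TensorFlow is not installed in this environment.

    import tensorflow as tf

    if not tf.executing_eagerly():
        return None  # This sketch assumes TensorFlow 2.x defaults.

    x = tf.constant([[1.0, 2.0]])
    w = tf.constant([[3.0], [4.0]])

    # Eager: the operation runs immediately and the value can be inspected.
    eager_result = tf.matmul(x, w)

    # Graph: tf.function traces the Python function into a graph on first call.
    @tf.function
    def traced(a, b):
        return tf.matmul(a, b)

    graph_result = traced(x, w)

    return (float(eager_result.numpy()[0, 0]),
            float(graph_result.numpy()[0, 0]))


print(eager_vs_graph())
```

Both paths should produce the same number. The difference is that the eager result is available line by line for debugging, while the traced version is compiled once and reused.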

Databricks Runtime for Machine Learning includes TensorFlow and TensorBoard, so you can use these libraries without installing any packages.

Now let’s take a look at how to use TensorFlow.

How to install TensorFlow

Full instructions and tutorials are available on tensorflow.org, but here are the basics.

System requirements:

- Python 3.7+

- pip 19.0 or later (requires manylinux2010 support, and TensorFlow 2 requires a newer version of pip)

- Ubuntu 16.04 or later (64-bit)

- macOS 10.12.6 (Sierra) or later (64-bit) (no GPU support)

- Windows 7 or later (64-bit)

Hardware requirements:

- Starting with TensorFlow 1.6, binaries use AVX instructions which may not run on older CPUs.

- GPU support requires a CUDA®-enabled card (Ubuntu and Windows)

#1. Install the Python development environment on your system

Check if your Python environment is already configured:

python3 --version

pip3 --version

If these packages are already installed, skip to the next step.

Otherwise, install Python, the pip package manager, and venv.

If not in a virtual environment, use python3 -m pip for the commands below. This ensures that you upgrade and use the Python pip instead of the system pip.

#2. Create a virtual environment (recommended)

Python virtual environments are used to isolate package installation from the system.
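Virtual environments are usually created from the command line with Python's built-in `venv` module. As a rough sketch, the same module can also be driven from Python. The function name `create_environment` and the directory name in the comment are assumptions made for illustration.

```python
import venv
from pathlib import Path


def create_environment(target: str, with_pip: bool = True) -> Path:
    """Create an isolated Python environment in `target` and return its path."""
    env_dir = Path(target)
    # with_pip=True bootstraps pip inside the new environment, so packages
    # installed there do not touch the system-wide site-packages.
    venv.create(env_dir, with_pip=with_pip)
    return env_dir


# Example (hypothetical directory name):
# create_environment("tf-env")
```

After the environment exists, activate it in your shell before installing packages, so that `pip` resolves to the copy inside the environment.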

#3. Install the TensorFlow pip package

Choose one of the following TensorFlow packages to install from PyPI:

- tensorflow: Latest stable release with CPU and GPU support (Ubuntu and Windows)

- tf-nightly: Preview build (unstable). Ubuntu and Windows include GPU support

- tensorflow==1.15: The final version of TensorFlow 1.x.

Verify the installation. If a tensor is returned, you've installed TensorFlow successfully.
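One way to run this check is a short Python script like the sketch below. The helper names `tensorflow_installed` and `verify_installation` are hypothetical. The script first looks for the package, so it reports a missing installation instead of raising an import error.

```python
import importlib.util


def tensorflow_installed() -> bool:
    """Return True if the tensorflow package is visible to this interpreter."""
    return importlib.util.find_spec("tensorflow") is not None


def verify_installation() -> str:
    """Run a tiny computation and describe the outcome."""
    if not tensorflow_installed():
        return "TensorFlow is not installed in this environment."

    import tensorflow as tf

    # Sum a matrix of random values; any returned tensor means the
    # installation can execute operations.
    total = tf.reduce_sum(tf.random.normal([1000, 1000]))
    return f"TensorFlow {tf.__version__} returned: {total}"


print(verify_installation())
```

The printed value varies from run to run because the input is random. What matters is that a tensor comes back at all.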

Note: A few installation mechanisms require the URL of the TensorFlow Python package. The value you specify depends on your Python version.

How to update TensorFlow

The pip package manager offers a simple method to upgrade TensorFlow, regardless of the environment.

Prerequisites:

- A Python version supported by the TensorFlow release you are upgrading to, installed and configured (check the Python version before starting).

- TensorFlow 2 installed.

- The pip package manager version 19.0 or greater (check the pip version and upgrade if necessary).

- Access to the command line/terminal or notebook environment.

To upgrade TensorFlow to a newer version:

#1. Open the terminal (CTRL+ALT+T on Ubuntu).

#2. Check the currently installed TensorFlow version:

pip3 show tensorflow

The command shows information about the package, including the version.

#3. Upgrade TensorFlow to a newer version with:

pip3 install --upgrade tensorflow==<version>

Make sure you select a version compatible with your Python release, otherwise the version will not install. For the notebook environment, use the following command, and restart the kernel after completion:

!pip install --upgrade tensorflow==<version>

This automatically uninstalls the old version, updates dependencies where needed, and installs the requested version.

#4. Check the upgraded version by running:

pip3 show tensorflow
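The same check can be made from inside Python or a notebook cell. The sketch below uses only the standard library and assumes Python 3.8 or later, where `importlib.metadata` is available. The helper name `installed_version` is hypothetical.

```python
import sys
from importlib import metadata
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


# Report the interpreter and the TensorFlow package side by side, since the
# two versions have to be compatible.
python_version = ".".join(str(part) for part in sys.version_info[:3])
print(f"Python {python_version}")
print(f"tensorflow: {installed_version('tensorflow') or 'not installed'}")
```

In a notebook, rerun this cell after restarting the kernel so that it reflects the upgraded package.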


What is TensorFlow Lite?

In 2017, Google introduced a new version of TensorFlow called TensorFlow Lite. TensorFlow Lite is optimized for use on embedded and mobile devices. It’s an open-source, product-ready, cross-platform deep learning framework that converts a pre-trained TensorFlow model to a special format that can be optimized for speed or storage.

To ensure you’re using the right version for any given scenario, you need to know when to use TensorFlow and when to use TensorFlow Lite. For example, if you need to deploy a high-performance deep learning model in an area without a good network connection, you’d use TensorFlow Lite to reduce the file size.

If you’re developing a model for edge devices, it needs to be lightweight so that it uses minimal space and downloads quickly on lower-bandwidth networks. To achieve this, you need optimization to reduce the size of the model or improve the latency, which TensorFlow Lite does via quantization and weight pruning.
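The idea behind quantization can be illustrated without any framework. The sketch below is not TensorFlow Lite's converter. It assumes a simple min/max affine scheme that maps each float weight to an 8-bit integer code, and the function names `quantize` and `dequantize` are hypothetical.

```python
from typing import List, Tuple


def quantize(weights: List[float]) -> Tuple[List[int], float, float]:
    """Map float weights to integers in [0, 255]; return (codes, scale, offset)."""
    low, high = min(weights), max(weights)
    # Guard against a zero range when all weights are identical.
    scale = ((high - low) / 255) or 1.0
    codes = [round((w - low) / scale) for w in weights]
    return codes, scale, low


def dequantize(codes: List[int], scale: float, offset: float) -> List[float]:
    """Recover approximate float weights from the 8-bit codes."""
    return [code * scale + offset for code in codes]


weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
codes, scale, offset = quantize(weights)
restored = dequantize(codes, scale, offset)

# Each code fits in one byte instead of the four bytes of a 32-bit float,
# at the cost of a rounding error of at most half a quantization step.
for original, approx in zip(weights, restored):
    print(f"{original:+.4f} -> {approx:+.4f}")
```

The trade-off is visible in the output: the restored weights are close to the originals but not identical, which is why quantized models are typically re-evaluated for accuracy before deployment.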

The resulting models are lightweight enough to be deployed for low-latency inference on edge devices such as Android or iOS phones, Linux-based embedded devices like Raspberry Pis, or microcontrollers. TensorFlow Lite can also use hardware accelerators to improve speed, accuracy, and power consumption, which are important for running inference at the edge.

What is a dense layer in TensorFlow?

Dense layers are used in the creation of both shallow and deep neural networks. Artificial neural networks are brain-like architectures made up of a system of neurons, and they’re able to learn through examples instead of being programmed with specific rules.

In deep learning, multiple layers are used to extract higher-level features from the raw input; when the network is composed of several layers, it’s called a stacked neural network. Each of these layers is made of nodes, which combine input from the data with a set of coefficients called weights that either amplify or dampen the input.

For its 2.0 release, TensorFlow adopted a deep learning API called Keras, which runs on top of TensorFlow and provides a number of pre-built layers for different neural network architectures and purposes. A dense layer is one of these. It is fully connected, meaning that each neuron receives input from all the neurons of the previous layer.

Dense layers are typically used to change the dimensions of the vectors they generate, and to rotate, scale, and translate them. They can learn features from all the combined features of the previous layer.
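A dense layer's forward pass boils down to `activation(inputs · kernel + bias)`. The plain-Python sketch below illustrates that computation without using Keras. The function names `relu` and `dense` and the example numbers are chosen for illustration only.

```python
from typing import Callable, List


def relu(value: float) -> float:
    """Rectified linear unit: negative values are clipped to zero."""
    return max(0.0, value)


def dense(inputs: List[float],
          kernel: List[List[float]],
          bias: List[float],
          activation: Callable[[float], float] = relu) -> List[float]:
    """Forward pass of a fully connected layer.

    `kernel` has one row per input feature and one column per output neuron,
    so every neuron receives every input.
    """
    outputs = []
    for unit in range(len(bias)):
        total = bias[unit]
        for i, x in enumerate(inputs):
            total += x * kernel[i][unit]  # weight amplifies or dampens x
        outputs.append(activation(total))
    return outputs


inputs = [1.0, 2.0]                # two input features
kernel = [[1.0, 0.0, -1.0],        # weights for feature 0
          [0.5, 1.0, 1.0]]         # weights for feature 1
bias = [0.0, 1.0, -2.0]            # one bias per output neuron
outputs = dense(inputs, kernel, bias)
print(outputs)
```

Note how the layer changes dimensions: two input values become three outputs, one per neuron.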

What's the difference between TensorFlow and Python?

TensorFlow is an open-source machine learning framework, whereas Python is a popular general-purpose programming language and one of the languages you can use with TensorFlow. Python is the recommended language for TensorFlow, although C++ and JavaScript are also supported.

Python was developed to help programmers write clear, logical code for both small and large projects. It’s often used to build websites and software, automate tasks, and carry out data analysis. Because Python is approachable, beginners find it relatively simple to learn TensorFlow through it.

A useful question to ask is: what version of Python does TensorFlow support? Each TensorFlow release is only compatible with specific versions of Python. For example, some 2.x releases require Python 3.7 to 3.10. Make sure you check the requirements for the release you plan to use before installing TensorFlow.
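A quick compatibility check can be scripted before installing anything. In this sketch, the function name `python_supported` is hypothetical, and the 3.7 to 3.10 range is only an example value. Take the real range from the release notes of the TensorFlow version you plan to install.

```python
import sys
from typing import Tuple

Version = Tuple[int, int]


def python_supported(current: Tuple[int, ...],
                     minimum: Version,
                     maximum: Version) -> bool:
    """Return True if the major.minor version lies in [minimum, maximum]."""
    return minimum <= tuple(current[:2]) <= maximum


# Placeholder range for illustration; not tied to a specific release.
ASSUMED_RANGE = ((3, 7), (3, 10))
print(python_supported(sys.version_info, *ASSUMED_RANGE))
```

Comparing `(major, minor)` tuples avoids the pitfall of comparing version strings, where "3.10" would sort before "3.7".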

What are PyTorch and TensorFlow?

TensorFlow is not the only machine learning framework in existence. There are a number of other choices, such as PyTorch, which have similarities and cover many of the same needs. So, what is the actual difference between TensorFlow and PyTorch?

PyTorch and TensorFlow are just two of the frameworks developed by tech companies for deep learning in Python, helping computers to solve real-world problems. The key difference between them is the way they execute code: TensorFlow was originally built around dataflow graphs that are defined before they run, while PyTorch builds its graphs dynamically as the code executes and is more tightly integrated with the Python language.

As we’ve seen, TensorFlow has robust visualization capabilities, production-ready deployment options, and support for mobile platforms. PyTorch isn’t as established but is still popular for its simplicity and ease of use, as well as dynamic computational graphs and efficient memory usage.

As for the question of which is better, TensorFlow or PyTorch, it really depends on what you want to achieve. If your aim is to build AI-related products, TensorFlow will be a good fit for you, whereas PyTorch is more suited to research-oriented developers. PyTorch is a good fit for getting projects up and running in short order, but TensorFlow has more robust capabilities for larger projects and more complex workflows.

Companies Using TensorFlow

According to the TensorFlow website, a number of other big-name companies use the framework as well as Google. These include Airbnb, Coca-Cola, eBay, Intel, Qualcomm, SAP, Twitter, Uber, Snapchat developer Snap Inc., and sports consulting company STATS LLC.

Top five TensorFlow alternatives

1. DataRobot

DataRobot is a cloud-based machine learning framework designed to help businesses extend their data science capabilities by deploying machine learning models and creating advanced AI applications.

The framework enables you to use and optimize the most valuable open-source modeling techniques from the likes of R, Python, Spark, H2O, VW, and XGBoost. By automating predictive analytics, DataRobot helps data scientists and analysts produce more accurate predictive models.

There’s a growing library of the best features, algorithms, and parameter values for building each model—and with automated ensembling, users can easily find and combine multiple algorithms and pre-built prototypes for feature extraction and data preparation (with no need for trial-and-error guesswork).

2. PyTorch

Developed by the team at Facebook and open sourced on GitHub.com in 2017, PyTorch is one of the newer deep learning frameworks. As we mentioned earlier, it has several similarities with TensorFlow, including hardware-accelerated components and a highly interactive development model for design-as-you-go.

PyTorch also optimizes performance by taking advantage of native support for asynchronous execution from Python. Benefits include built-in dynamic graphs and a stronger community than that of TensorFlow.

However, PyTorch has historically lacked a built-in framework for deploying trained models directly online, so an API server was needed for production. Early versions also relied on a third-party tool, Visdom, for visualization, and its features were rather limited.

3. Keras

Keras is a high-level open-source neural network library designed to be user-friendly, modular, and easy to extend. It’s written in Python and supports multiple back-end neural network computation engines — although its primary (and default) back end is TensorFlow, and its primary supporter is Google.

We already mentioned the TensorFlow Keras high-level API, and Keras also runs on top of Theano. It has a number of standalone modules that you can combine, including neural layers, cost functions, optimizers, initialization schemes, activation functions, and regularization schemes.

Keras provides support for a wide range of production deployment options, and strong support for multiple GPUs and distributed training. However, community support is minimal, and the library is typically used for small datasets.

4. MXNet

Apache MXNet is an open-source deep learning software framework, which is used to define, train and deploy deep neural networks on a wide array of devices. It has the honor of being adopted by Amazon as the premier deep learning framework on AWS.

It can scale almost linearly across multiple GPUs and multiple machines, allowing for fast model training, and supports a flexible programming model that enables users to mix symbolic and imperative programming for maximum efficiency and productivity.

MXNet also supports multiple programming language APIs, including Python, C++, Scala, R, JavaScript, Julia, Perl, and Go (although its native APIs aren’t as easy to work with as TensorFlow’s).

5. CNTK

CNTK, also known as the Microsoft Cognitive Toolkit, is a unified deep-learning toolkit that uses a graph structure to describe data flow as a series of computational steps (just like TensorFlow, although it isn’t as easy to learn or deploy).

It focuses largely on creating deep learning neural networks and can handle these tasks rapidly. CNTK allows users to easily realize and combine popular model types such as feed-forward DNNs, convolutional nets (CNNs), and recurrent networks (RNNs/LSTMs).

CNTK has a broad set of APIs (Python, C++, C#, Java) and can be included as a library in your Python, C#, or C++ programs—or used as a standalone machine-learning tool through its own model description language (BrainScript). It supports 64-bit Linux or 64-bit Windows operating systems.

Note: the 2.7 version was the last main release of CNTK, and there are no plans for new feature development.

Should I use TensorFlow?

TensorFlow has plenty of advantages. The open source machine learning framework provides excellent architectural support, which allows for the easy deployment of computational frameworks across a variety of platforms. It benefits from Google’s reputation, and several big names have adopted TensorFlow to carry out artificial intelligence tasks.

On the flipside, some details of TensorFlow’s implementation make it difficult to obtain totally deterministic model training results for some training jobs — but the team is considering more controls to affect determinism in a workflow.

Getting started is simple, especially with TensorFlow by Databricks, an out-of-the-box integration via Databricks Runtime for Machine Learning. You can get clusters up and running in seconds and benefit from a range of low-level and high-level APIs.
