Databricks Intelligence Platform for FSI: Smart Claims

Demo Type

Product Tutorial

Duration

Self-paced

What you’ll learn

The Databricks Data Intelligence Platform allows your entire organization to use data and AI. It’s built on a lakehouse architecture to provide an open, unified foundation for all data and governance, and is powered by the Data Intelligence Engine, which understands the uniqueness of your data.

In this demo, we’ll show you how to build an end-to-end smart claims processing pipeline that ingests claims, policy and telematics data and extracts actionable insights for claims investigators.

This demo covers the end-to-end lakehouse platform. You will learn how to:

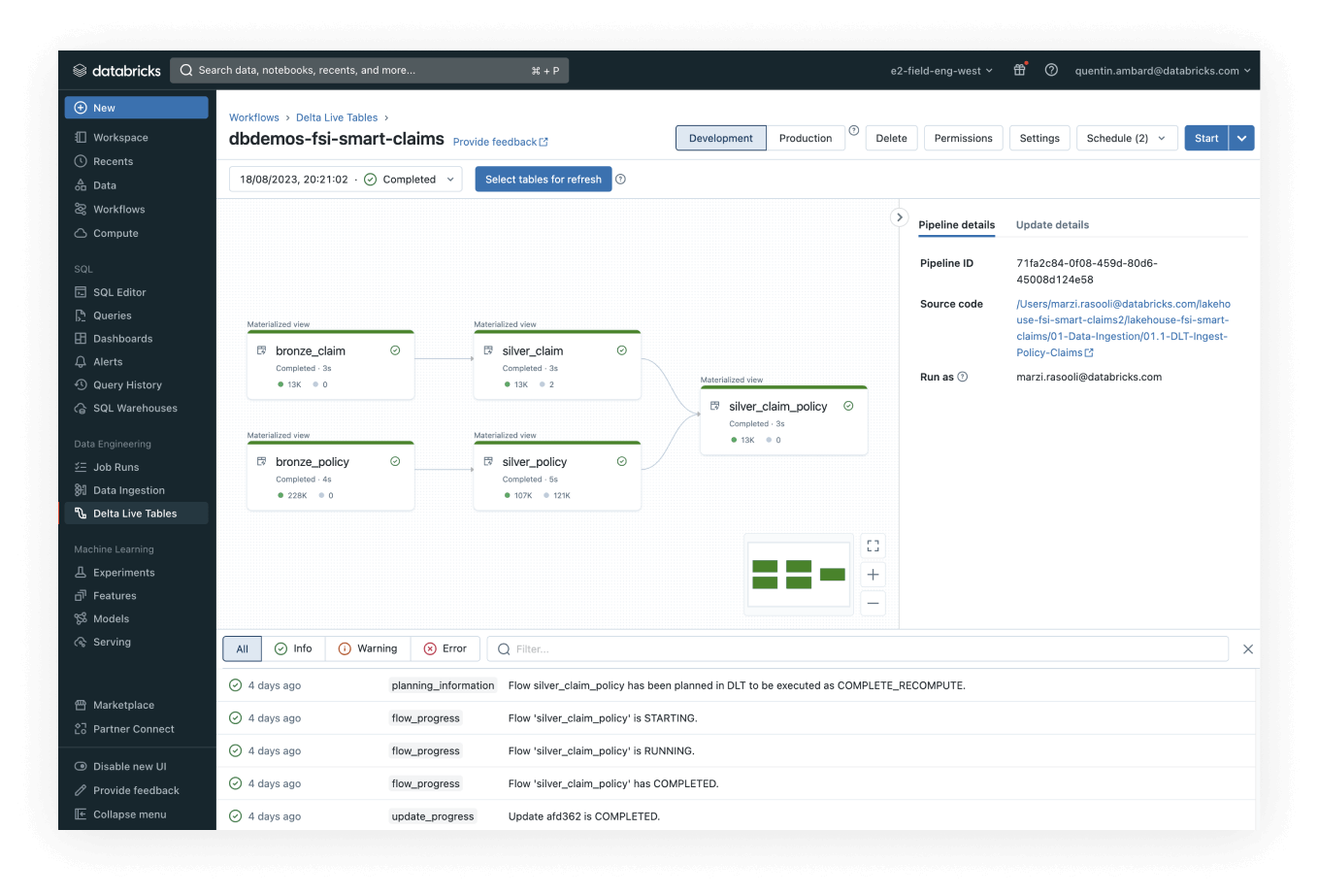

- Ingest claims and policy data, then transform and curate it using Delta Live Tables (DLT), a declarative ETL framework for building reliable, maintainable and stable data processing pipelines

- Add external information such as telematics insights

- Build a machine learning model to predict claim severity using the MLflow framework

- Use Databricks SQL and its SQL warehouses to visualize a summary of claims, along with the actionable insights extracted from the claims information and accident images

- Orchestrate all these steps with Databricks Workflows
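
As a rough illustration of the curate-and-enrich flow these steps describe, the sketch below joins claim, policy and telematics records in plain Python and flags claims for an investigator. It does not use DLT, MLflow or any Databricks API. The field names, the sample records and the `flag_for_review` rule are all hypothetical; the rule is a toy threshold standing in for the severity model the demo trains.

```python
# Hypothetical sample records; the demo's real schemas may differ.
claims = [
    {"claim_id": "C1", "policy_id": "P1", "claim_amount": 4200.0},
    {"claim_id": "C2", "policy_id": "P2", "claim_amount": 900.0},
    {"claim_id": "C3", "policy_id": "P9", "claim_amount": 1500.0},  # unknown policy
]
policies = {
    "P1": {"coverage_limit": 4000.0, "vehicle_id": "V1"},
    "P2": {"coverage_limit": 5000.0, "vehicle_id": "V2"},
}
telematics = {
    "V1": {"avg_speed_kmh": 95.0},
    "V2": {"avg_speed_kmh": 40.0},
}


def curate(claims, policies, telematics):
    """Join each claim to its policy and telematics; drop unmatched claims."""
    curated = []
    for claim in claims:
        policy = policies.get(claim["policy_id"])
        if policy is None:
            continue  # data-quality rule: a claim must reference a known policy
        signal = telematics.get(policy["vehicle_id"], {})
        curated.append({**claim, **policy, **signal})
    return curated


def flag_for_review(row):
    """Toy stand-in for a trained model: flag claims above the coverage limit."""
    return row["claim_amount"] > row["coverage_limit"]


curated = curate(claims, policies, telematics)
flagged = [row["claim_id"] for row in curated if flag_for_review(row)]
print(flagged)
```

In the demo itself, the join and the data-quality rule would live in the DLT pipeline and the flagging logic would come from the trained model; the sketch only shows how the pieces relate.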

To run the demo, get a free Databricks workspace and execute the following two commands in a Python notebook:

These assets will be installed in this Databricks demo: