eBook

Apache Spark™ and Delta Lake Under the Hood

Apache Spark™ and Delta Lake have seen immense growth over the past several years. Spark has become the de facto data processing and AI engine in enterprises today thanks to its speed, ease of use, and sophisticated analytics. It unifies data and AI by simplifying data preparation at massive scale across various sources, providing a consistent set of APIs for both data engineering and data science workloads, and integrating seamlessly with popular AI frameworks and libraries such as TensorFlow, PyTorch, R, and scikit-learn. Delta Lake brings data reliability and performance to data lakes, with capabilities like ACID transactions, schema enforcement, DML commands, and time travel.

Databricks, founded by the team that originally created Apache Spark and Delta Lake, is proud to share excerpts from the book Spark: The Definitive Guide, as well as the Delta Lake Quickstart. Enjoy this free mini eBook, courtesy of Databricks.

In this eBook, we cover:

- The past, present, and future of Apache Spark.

- Basic steps to install and run Spark yourself.

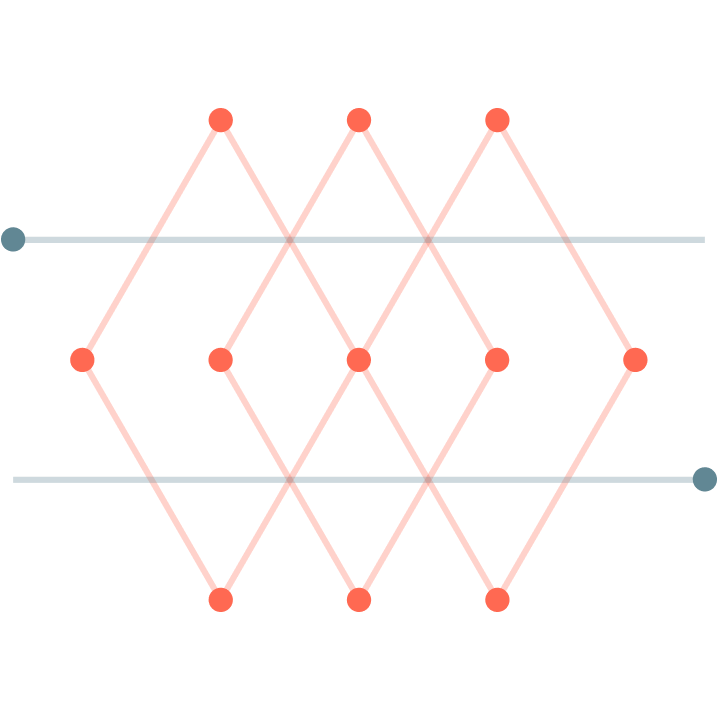

- A summary of Spark’s core architecture and concepts.

- Spark’s powerful language APIs and how you can use them.

- How to load, update, and roll back data in your data lake with Delta Lake.

Get the eBook to learn more.