Azure Databricks – Bring Your Own VNET

How to deploy Databricks clusters in your own custom VNET

by Abhinav Garg and Anna Shrestinian

The Azure Databricks Unified Analytics Platform is the result of a joint product and engineering effort between Databricks and Microsoft, and is available as a managed first-party service on the Azure public cloud. Along with one-click setup (manual or automated), managed clusters (including Delta), and collaborative workspaces, the platform integrates natively with other Azure first-party services, such as Azure Blob Storage, Azure Data Lake Store (Gen1/Gen2), Azure SQL Data Warehouse, Azure Cosmos DB, Azure Event Hubs, and Azure Data Factory, and the list keeps growing.

Additionally, the platform is built on a strong security foundation: it integrates natively with Azure Active Directory (AAD) and complies with major security certifications, such as ISO 27001, SOC 2 Type 2, and HIPAA. The service is backed by Microsoft SLAs and support.

In this blog, we’ll provide an overview of the Azure Databricks platform architecture and show how to deploy clusters in a customer-managed Azure VNET.

Platform Architecture

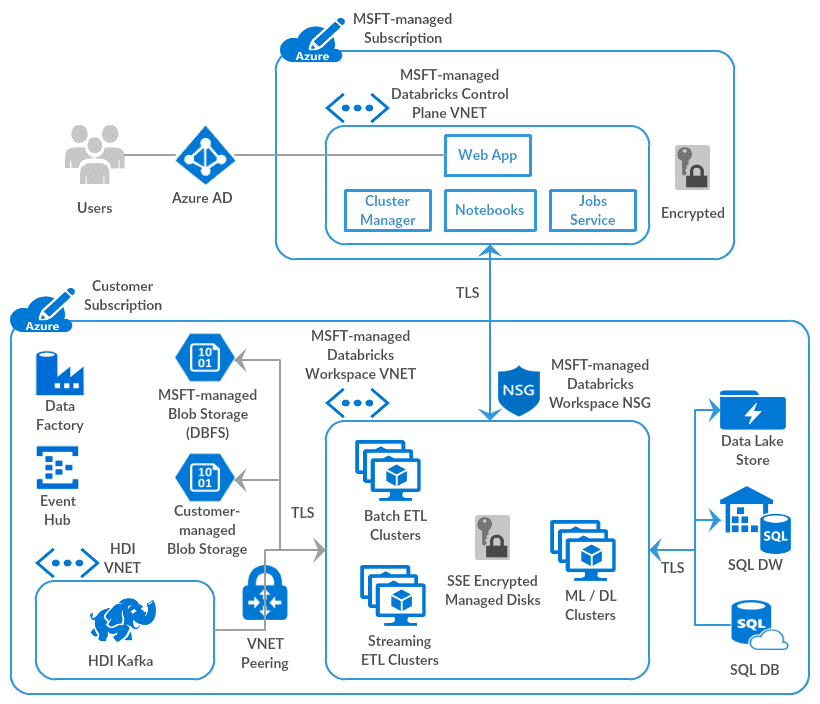

Azure Databricks is a managed application, consisting of two high-level components:

- The Control Plane – A management layer that resides in a Microsoft-managed Azure subscription and consists of services such as cluster manager, web application, jobs service, etc. Each service has its own mechanism to isolate the processing, metadata, and resources based on a workspace identifier, which is then used to execute every request.

- The Data Plane – Consists of a locked virtual network (Azure VNET) that’s created in a customer-managed Azure subscription. All clusters are created in that VNET, and any data processing is done on data residing in customer-managed sources.

In the default deployment mode (diagram above), the data-plane VNET and the Network Security Group (NSG) are managed by Microsoft, although they are provisioned in the customer’s subscription. These resources are “locked” against any changes by the customer, similar to how other Azure first-party services operate. The goal is to keep the service easy to use and to prevent unintended changes by users.

In this mode, one could peer other Azure VNETs using the Azure Databricks-specific VNET Peering feature, but connectivity to on-premises data sources via ExpressRoute or a VPN Gateway is not possible (read on for how to enable that connectivity).

Bring Your Own VNET

Even though the default deployment mode works for many, a number of enterprise customers want more control over the service’s network configuration, whether to comply with internal cloud and data governance policies, to adhere to external regulations, or to make networking customizations such as:

- Connect Azure Databricks clusters to other Azure data services securely using Azure Service Endpoints

- Connect Azure Databricks clusters to data sources deployed in private/co-located data centers (on-premises)

- Restrict outbound traffic from Azure Databricks clusters to specific Azure data services and/or external endpoints only

- Configure Azure Databricks clusters to use custom DNS

- Configure a custom CIDR range for the Azure Databricks clusters

- And more

To make the above possible, we provide a Bring Your Own VNET (also called VNET Injection) feature, which allows customers to deploy the Azure Databricks clusters (data plane) in VNETs that they manage themselves. Such workspaces could be deployed using the Azure Portal, or in an automated fashion using ARM Templates, which could be run using Azure CLI, Azure PowerShell, Azure Python SDK, etc.

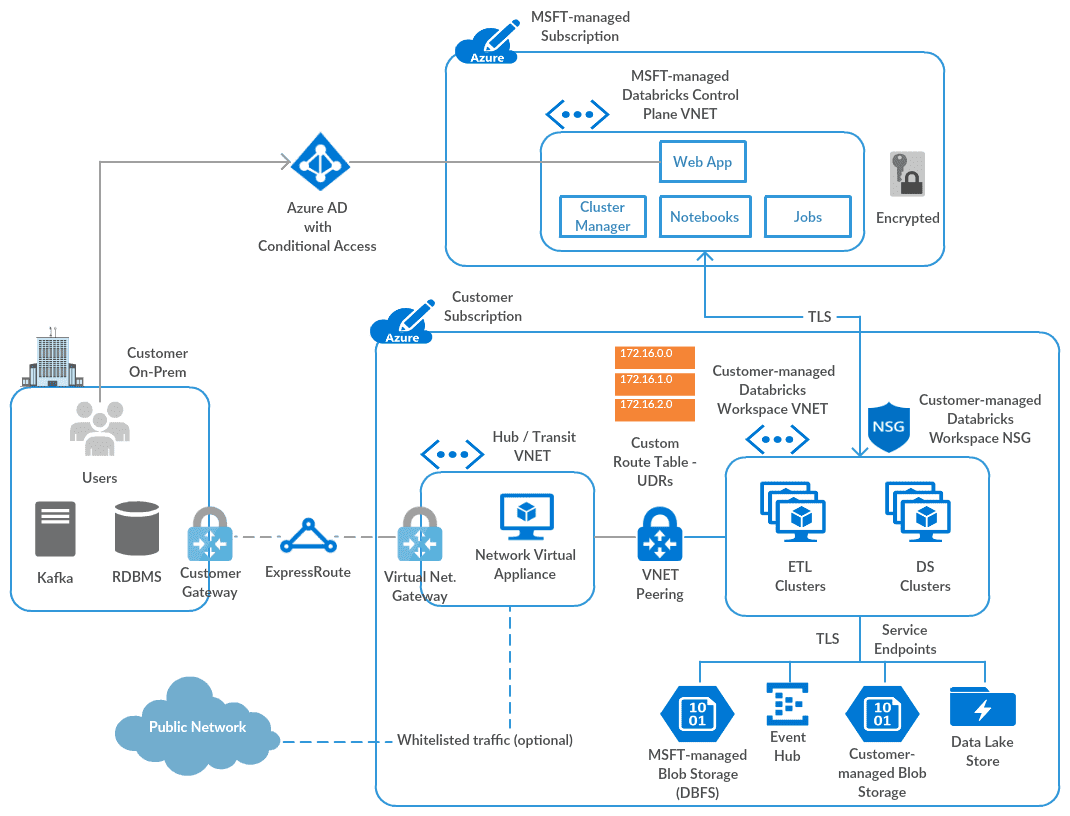
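As a rough illustration of the automated path, here is a minimal Python sketch that assembles an ARM template for a workspace deployed into an existing VNET. It is not an official template. The helper names (`workspace_resource`, `arm_template`), the resource IDs, the subnet names, and the all-zero subscription ID are hypothetical placeholders. The property names (`customVirtualNetworkId`, `customPublicSubnetName`, `customPrivateSubnetName`) and the API version are written as we understand the `Microsoft.Databricks/workspaces` resource schema, so please verify them against the current ARM reference before use.

```python
import json


def workspace_resource(name, location, managed_rg_id, vnet_id,
                       public_subnet, private_subnet):
    """Build an ARM resource definition for a workspace in a custom VNET.

    Assumption: property names and apiVersion follow the public
    Microsoft.Databricks/workspaces schema; check the current reference.
    """
    return {
        "type": "Microsoft.Databricks/workspaces",
        "apiVersion": "2018-04-01",
        "name": name,
        "location": location,
        "sku": {"name": "premium"},
        "properties": {
            # Resource group that holds the service-managed resources.
            "managedResourceGroupId": managed_rg_id,
            "parameters": {
                # The customer-managed VNET and its two Databricks subnets.
                "customVirtualNetworkId": {"value": vnet_id},
                "customPublicSubnetName": {"value": public_subnet},
                "customPrivateSubnetName": {"value": private_subnet},
            },
        },
    }


def arm_template(resource):
    """Wrap a single resource in a minimal ARM deployment template."""
    return {
        "$schema": "https://schema.management.azure.com/schemas/"
                   "2015-01-01/deploymentTemplate.json#",
        "contentVersion": "1.0.0.0",
        "resources": [resource],
    }


if __name__ == "__main__":
    # Hypothetical placeholder IDs; substitute your own values.
    sub = "/subscriptions/00000000-0000-0000-0000-000000000000"
    resource = workspace_resource(
        name="example-workspace",
        location="westus2",
        managed_rg_id=f"{sub}/resourceGroups/example-managed-rg",
        vnet_id=f"{sub}/resourceGroups/example-network-rg/providers/"
                "Microsoft.Network/virtualNetworks/example-vnet",
        public_subnet="databricks-public",
        private_subnet="databricks-private",
    )
    print(json.dumps(arm_template(resource), indent=2))
```

The printed JSON could then be saved to a file and submitted with whichever deployment tool you prefer from the list above.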

With this capability, the Databricks workspace NSG is also managed by the customer. We manage a set of inbound and outbound NSG rules using a Network Intent Policy, as those are required for secure, bidirectional communication with the control/management plane. The platform architecture with on-prem connectivity (optional) looks like this:

With the Bring Your Own VNET/VNET injection feature, one could configure:

- Connectivity to on-premises data sources (requires whitelisting of Databricks control-plane traffic using Azure UDRs)

- Routing of outbound traffic via a firewall appliance/service

- Azure Databricks subnets as a source in the firewall rules for Azure Blob Storage, Azure Data Lake Store, Azure SQL Data Warehouse, etc. (requires Azure Service Endpoints)

- and other things as discussed earlier.
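To make the first two items more concrete, here is a hedged Python sketch of the route entries one might place in an Azure route table (UDR) attached to the Databricks subnets: specific routes that send control-plane traffic straight to the Internet, plus a default route that forwards everything else to a firewall appliance. The function name `build_routes`, the route names, and all addresses are hypothetical; the control-plane prefix below comes from a documentation-only range and is not a real Databricks address. The actual control-plane addresses are region-specific and must be taken from the official documentation.

```python
import ipaddress
import json


def build_routes(control_plane_prefixes, firewall_ip):
    """Return ARM-style route entries for a Databricks subnet route table.

    Control-plane prefixes bypass the firewall (next hop: Internet); all
    other outbound traffic goes to the firewall appliance. Azure picks the
    most specific matching prefix, so the control-plane routes take
    precedence over the 0.0.0.0/0 default route.
    """
    routes = []
    for i, prefix in enumerate(control_plane_prefixes):
        network = ipaddress.ip_network(prefix)  # raises ValueError if invalid
        routes.append({
            "name": f"databricks-control-plane-{i}",
            "properties": {
                "addressPrefix": str(network),
                "nextHopType": "Internet",
            },
        })

    ipaddress.ip_address(firewall_ip)  # validate the appliance address
    routes.append({
        "name": "default-via-firewall",
        "properties": {
            "addressPrefix": "0.0.0.0/0",
            "nextHopType": "VirtualAppliance",
            "nextHopIpAddress": firewall_ip,
        },
    })
    return routes


if __name__ == "__main__":
    # Placeholder values only: 203.0.113.0/24 is reserved for documentation.
    example = build_routes(["203.0.113.10/32"], firewall_ip="10.0.0.4")
    print(json.dumps(example, indent=2))
```

Validating the prefixes up front is a small design choice that catches typos before a deployment is attempted, since a malformed prefix would otherwise only fail at deployment time.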

This allows customers to comply with various internal and external security policies and frameworks, while maintaining the PaaS nature of the service, thus providing the same ease of use with the managed platform as with default-deployment mode.

The feature is in public preview today with full production SLAs in all Azure Databricks regions. General availability is coming soon.

Try It!

- If you are not already using Azure Databricks, you can try it by following these directions.
