How to upgrade your Hive tables to Unity Catalog

by Dipankar Kushari, Liran Bareket, Sreeram Thoom and Som Natarajan

In this blog we will demonstrate with examples, how you can seamlessly upgrade your Hive metastore (HMS)* tables to Unity Catalog (UC) using different methodologies depending on the variations of HMS tables being upgraded.

*Note: Hive metastore could be your default or external metastore or even AWS Glue Data Catalog. For simplicity, we have used the term "Hive metastore" throughout this document

Before we dive into the details, let us look at the steps we would take for the upgrade -

- Assess - In this step, you will evaluate the existing HMS tables identified for upgrade so that we can determine the right approach for upgrade. This step is discussed in this blog.

- Create - In this step, you create the required UC assets such as, Metastore, Catalog, Schema, Storage Credentials, External Locations. For details refer to the documentations - AWS, Azure, GCP

- Upgrade - In this step, you will follow the guidance to upgrade the tables from HMS to UC. This step is discussed in this blog.

- Grant - In this step, you will need to provide grants on the newly upgraded UC tables to principals, so that they can access the UC tables. For detail refer to the documentations - AWS, Azure, GCP

Unity Catalog, now generally available on all three cloud platforms (AWS, Azure, and GCP), simplifies security and governance of your data with the following key features:

- Define once, secure everywhere: Unity Catalog offers a single place to administer data access policies that apply across all workspaces.

- Standards-compliant security model: Unity Catalog's security model is based on standard ANSI SQL and allows administrators to grant permissions in their existing data lake using familiar syntax, at the level of catalogs, databases (also called schemas), tables, and views.

- Built-in auditing and lineage: Unity Catalog automatically captures user-level audit logs that record access to your data. Unity Catalog also captures lineage data that tracks how data assets are created and used across all languages.

- Data discovery: Unity Catalog lets you tag and document data assets, and provides a search interface to help data consumers find data.

- System tables (Public Preview): Unity Catalog lets you easily access and query your account's operational data, including audit logs, billable usage, and lineage.

- Data Sharing: Delta Sharing is an open protocol developed by Databricks for secure data sharing with other organizations regardless of the computing platforms they use. Databricks has built Delta Sharing into its Unity Catalog data governance platform, enabling a Databricks user, called a data provider, to share data with a person or group outside of their organization, called a data recipient.

All these rich features which are available with Unity Catalog (UC) out of the box are not readily available in your Hive metastore today and would take a huge amount of your resources to build ground up. Additionally, as most (if not all) of the newer Databricks features, such as, Lakehouse Monitoring, Lakehouse Federation, LakehouseIQ, are built on, governed by and needs Unity Catalog as a prerequisite to function, delaying upgradation of your data assets to UC from HMS would limit your ability to take advantage of those newer product features.

Hence, one question that comes to mind is how can you easily upgrade tables registered in your existing Hive metastore to the Unity Catalog metastore so that you can take advantage of all the rich features Unity Catalog offers. In this blog, we will walk you through considerations, methodologies with examples for upgrading your HMS table to UC.

Upgrade Considerations and Prerequisites

In this section we review considerations for upgrade before we dive deeper into the Upgrade Methodologies in the next section.

Upgrade Considerations

Variations of Hive Metastore tables is one such consideration. Hive Metastore tables, deemed for upgrade to Unity Catalog, could have been created with mixing and matching types for each parameter shown in the table below. For example, one could have created a CSV Managed Table using DBFS root location or a Parquet External table on Amazon S3 location.This section describes the parameters based on which different variation of the tables could have been created in your

|

Parameter |

Variation |

Table Identification Guide |

|

Table Type |

Run |

|

|

Run |

||

|

Data Storage Location |

Run |

|

|

DBFS Mounted Cloud Object Storage |

Run |

|

|

Directly specifying cloud storage Location (such as S3://, abfss:// or gs://) |

Run |

|

|

Table file format and interface |

File formats such as Delta, Parquet, Avro |

Run |

|

Interface such as Hive SerDe interface |

Run |

Depending on the variations of the parameters mentioned above, the adopted upgrade methodologies could vary. Details are discussed in the Upgrade Methodologies section below.

Another point needs to be considered before you start the upgrade of HMS tables to UC in Azure Databricks:

For AZURE Cloud - Tables currently stored on Blob storage (wasb) or ADLS gen 1 (adl) needs to be upgraded to ADLS gen 2 (abfs). Otherwise it will raise an error if you try to use unsupported Azure cloud storage with Unity Catalog.

Error example: Table is not eligible for an upgrade from Hive Metastore to Unity Catalog. Reason: Unsupported file system scheme wasbs.

Upgrade Prerequisites

Before starting the upgrade process, the storage credentials and external locations should be created as shown in the steps below.

- Create Storage Credential(s) with access to the target cloud storage.

- Create External Location(s) pointing to the target cloud storage using the storage credential(s).

- The External Locations are used for creating UC External Tables, Managed Catalogs, or Managed schemas.

Upgrade Methodologies

In this section we show you all the different upgrade options in the form of a matrix. We also use diagrams to show the steps involved in upgrading.

There are two primary methods for upgrading, using SYNC (for supported scenarios) or using data replication (where SYNC is not supported).

- Using SYNC - For all the supported scenarios (as shown in the Upgrade Matrix section below) use SYNC to upgrade HMS tables to UC. Using SYNC allows you to upgrade tables without data replication

- Using Data Replication - For all unsupported scenarios (as shown in the Upgrade Matrix section below) use either Create Table As Select (CTAS) or DEEP CLONE*. This method would require data replication

*Note - Consider using deep clone for HMS Parquet and Delta tables to copy the data and upgrade tables in UC from HMS. Use Create Table As Select (CTAS) for other file formats.

The diagrams below describes the upgrade steps for each method. To understand which method to use for your upgrade use case, refer to the Upgrade Matrix section below.

Pictorial Representation of upgrade

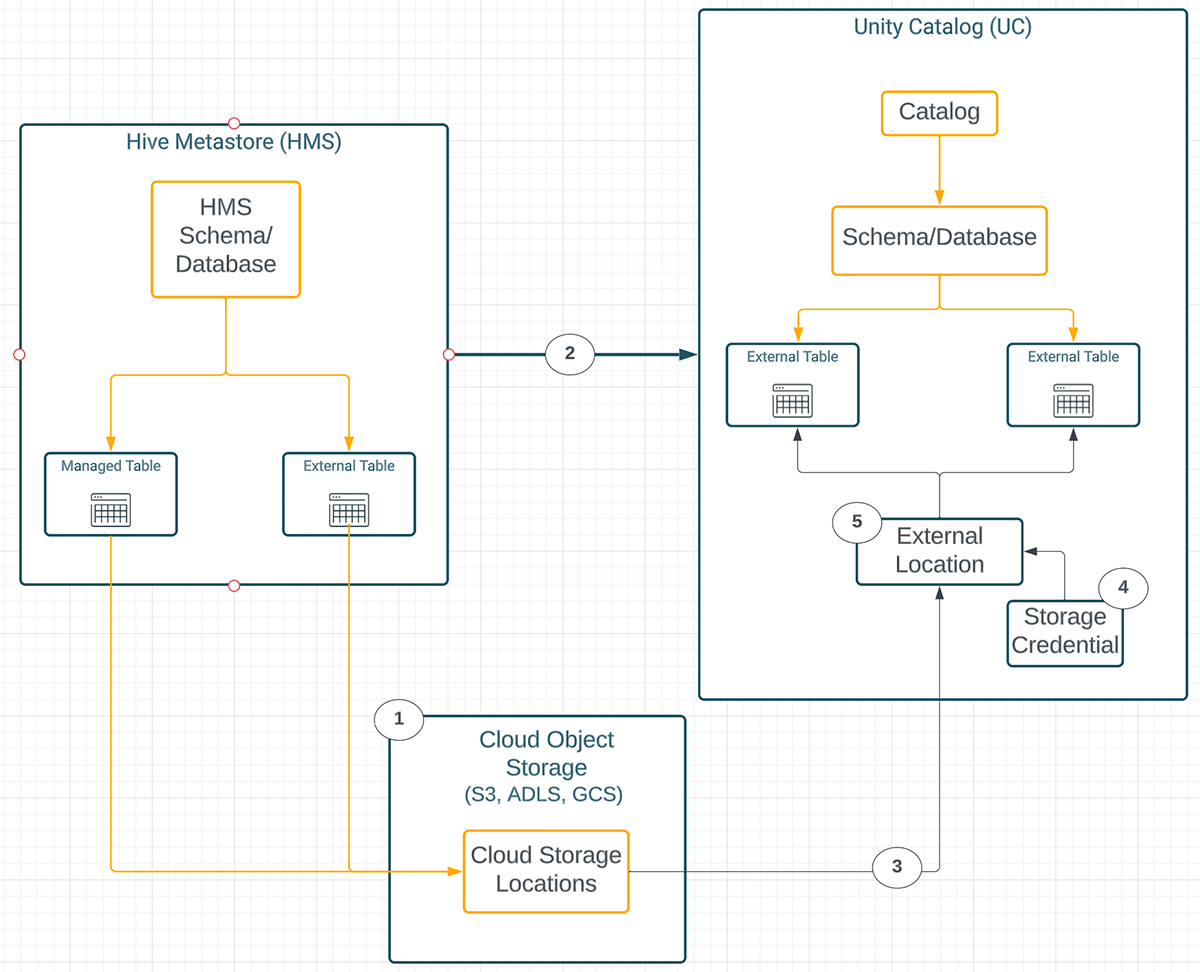

Diagram 1 - Upgrading HMS tables to UC using SYNC (without data replication)

Diagram Keys:

- HMS Managed and external tables store data as a directory of files on cloud object storage

- SYNC Command is used to upgrade table metadata from HMS to UC. Target UC tables are External irrespective of the source HMS table types.

- No Data is copied when the SYNC command is used for upgrading tables from HMS to UC. Same underlying cloud storage location (used by the source HMS table) is referred to by the target UC External table.

- A storage credential represents an authentication and authorization mechanism for accessing data stored on your cloud tenant.

- An external location is an object that combines a cloud storage path with a storage credential that authorizes access to the cloud storage path.

Note: When using SYNC command for upgrading your HMS Managed tables, whose data is stored as directories of files on cloud object storage, to UC External tables, the following spark configuration has to be set at the spark session or at the cluster config -

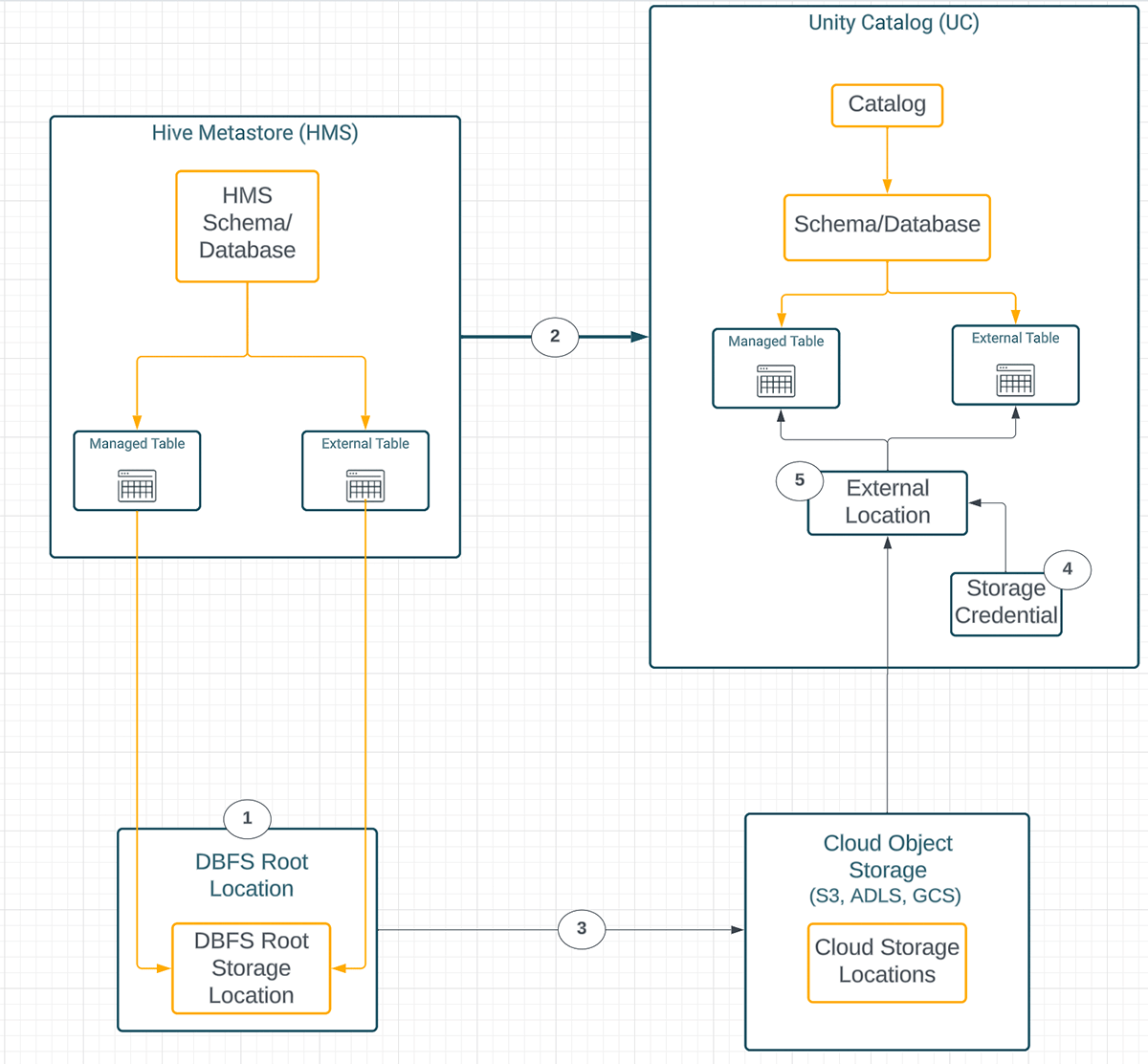

Diagram 2 - Upgrading HMS tables to UC with data replication

Diagram Keys:

- HMS Managed and external tables store data as a directory of files on DBFS Root Storage Location.

- CTAS or Deep Clone creates the UC target table metadata from the HMS table. One can choose to upgrade to an external or managed table irrespective of the HMS table type.

- CTAS or Deep Clone copies data from DBFS root storage to target cloud storage.

- A storage credential represents an authentication and authorization mechanism for accessing data stored on your cloud tenant.

- An external location is an object that combines a cloud storage path with a storage credential that authorizes access to the cloud storage path.

Upgrade Matrix

Below table showcases the different possibilities of Upgrading HMS tables to UC tables. For each scenario, we provide steps that you can follow for the upgrade.

HMS Storage Format using DBFS Root Storage

|

Ex. |

HMS Table Type |

Description of HMS Table Type |

Example of HMS Table |

Target UC TableType |

Target UC Data File Format |

Upgrade Methodology |

|---|---|---|---|---|---|---|

|

1 |

Managed |

The data files for the managed tables reside within DBFS Root (the default location for the Databricks managed HMS database). |

%sql create table if not exists hive_metastore.hmsdb_upgrade_db.people_parquet |

External Or Managed |

Delta is the preferred file format for both Managed and External Tables. External tables do support non-delta file formats.1 |

CTAS or Deep Clone |

|

2 |

External |

This means the data files for the External tables reside within DBFS Root. The table definition has the "Location" clause which makes the table external. |

%sql

|

External Or Managed |

Delta is the preferred file format for both Managed and External Tables. External tables do support non-delta file formats. 1 |

CTAS or Deep Clone |

1. Note - Preferably change it to Delta while Upgrading with CTAS.

HMS Hive SerDe table

|

Ex. |

H MS Table Type |

Description of HMS Table Type |

Example of HMS Table |

Target UC TableType |

Target UC Data File Format |

Upgrade Methodology |

|---|---|---|---|---|---|---|

|

3 |

Hive SerDe External or Managed 2 |

These are the tables created using the Hive SerDe interface. Refer to this link to learn more about hive tables on databricks. |

%sql

|

External Or Managed |

Delta is the preferred file format for both Managed and External Tables. External tables do support non-delta file formats. 3 |

CTAS or Deep Clone |

2. Note - irrespective of the underlying storage format, hive SerDe follows the same upgrade path..

3. Note - Preferably change it to Delta while you are doing the upgrade using CTAS.

HMS Storage Format using DBFS Mounted Storage

|

Ex. |

HMS Table Type |

Description of HMS Table Type |

Example of HMS Table |

Target UC TableType |

Target UC Data File Format |

Upgrade Methodology |

|---|---|---|---|---|---|---|

|

4 |

Managed |

This is when the parent database has its location set to external paths, e.g., a mounted path from the object store. The table is created without a location clause and table data is saved underneath that default database path. |

%sql |

External |

As that of the HMS source data file format |

|

|

5 |

Managed |

Managed |

Delta |

CTAS or Deep Clone |

||

|

6 |

External |

The table is created with a location clause and a path specifying a mounted path from a cloud object store. |

%sql |

External |

As that of the HMS source data file format |

|

|

7 |

External |

Managed |

Delta |

CTAS or Deep Clone |

4. Note - Ensure that the HMS table is dropped individually after conversion to an external table. If the HMS database/schema was defined with a location and if the database is dropped with the cascade option, then the underlying data will be lost and the upgraded UC tables will lose the data..

HMS Storage Format using Cloud Object Storage

|

Ex. |

HMS Table Type |

Description of HMS Table Type |

Example of HMS Table |

Target UC TableType |

Target UC Data File Format |

Upgrade Methodology |

|---|---|---|---|---|---|---|

|

8 |

Managed |

The parent database has its location set to external paths, e.g., a cloud object store. The table is created without a location clause and table data is saved underneath that default database path. |

%sql create database if not exists hive_metastore.hmsdb_upgrade_db location "s3://databricks-dkushari/hmsdb_upgrade_db/"; |

Extern al |

As of the source data file format |

|

|

9 |

Managed |

Managed |

Delta |

CTAS or Deep Clone |

||

|

10 |

External |

The table is created with a location clause and a path specifying a cloud object store. |

%sql |

External |

As of the source data file format |

|

|

11 |

External |

Managed |

Delta |

CTAS or Deep Clone |

Examples of upgrade

In this section, we are providing a Databricks Notebook with examples for each scenario discussed above.

Conclusion

In this blog, we have shown how you can upgrade your Hive metastore tables to Unity Catalog metastore. Please refer to the Notebook to try different upgrade options. You can also refer to the Demo Center to get started with automating the upgrade process. To automate upgrading Hive Metastore tables to Unity Catalog we recommend you use this Databricks Lab repository.

Upgrade your tables to Unity Catalog today and benefit from unified governance features. After Upgrading to UC, you can drop Hive metastore schemas and tables if you no longer need them. Dropping an external table does not modify the data files on your cloud tenant. Take precautions (as described in this blog) while dropping managed tables or schemas with managed tables.

Appendix

Get the latest posts in your inbox

Subscribe to our blog and get the latest posts delivered to your inbox.