Common Sense Product Recommendations using Large Language Models

by Avinash Sooriyarchchi, Sam Sawyer, Colton Peltier and Bryan Smith

Check out our LLM Solution Accelerators for Retail for more details and to download the notebooks.

Product recommendations are a core feature of the modern customer experience. When users return to a site with which they have previously interacted, they expect to be greeted by recommendations related to those prior interactions that help them pick up where they left off. When users engage with a specific item, they expect similar, relevant alternatives to be suggested to help them find just the right item to meet their needs. And as items are placed in a cart, users expect additional products to be suggested that complete and enhance their overall purchasing experience. When done right, these product recommendations not only facilitate the shopping journey but leave the customer feeling recognized and understood by the retail outlet.

While there are many different approaches to generating product recommendations, most recommendation engines in use today rely upon historical patterns of interaction between products and customers, learned through the application of sophisticated techniques applied to large collections of retailer-specific data. These engines are surprisingly robust at reinforcing patterns learned from successful customer engagements, but sometimes we need to break from these historical patterns in order to deliver a different experience.

Consider the scenario where a new product has been introduced and only a limited number of interactions with it exist in our data. Recommenders that depend on knowledge learned from numerous customer engagements may fail to suggest the product until enough data has built up to support a recommendation.

Or consider another scenario where a single product draws an inordinate amount of attention. In this scenario, the recommender runs the risk of falling into the trap of always suggesting this one item due to its overwhelming popularity to the detriment of other viable products in the portfolio.

To avoid these and other similar challenges, retailers might incorporate a tactic that employs widely-recognized patterns of product association based on common knowledge. Much like a helpful sales associate, this type of recommender could examine the items a customer seems to have an interest in and suggest additional items that seem to align with the path or paths those product combinations may indicate.

Using a Large Language Model to Make Recommendations

Consider the scenario where a customer shops for winter scarves, beanies and mittens. Clearly, this customer is gearing up for a cold weather outing. Let’s say the retailer has recently introduced heavy wool socks and winter boots into their product portfolio. Where other recommenders might not yet pick up on the association of these items with those the customer is browsing because of a lack of interactions in the historical data, common knowledge links these items together.

This kind of knowledge is often captured by large language models (LLMs), trained on large volumes of general text. In that text, mittens and boots might be directly linked by individuals putting on both items before venturing outdoors and associated with concepts like “cold”, “snow” and “winter” that strengthen the relationship and draw in other related items.

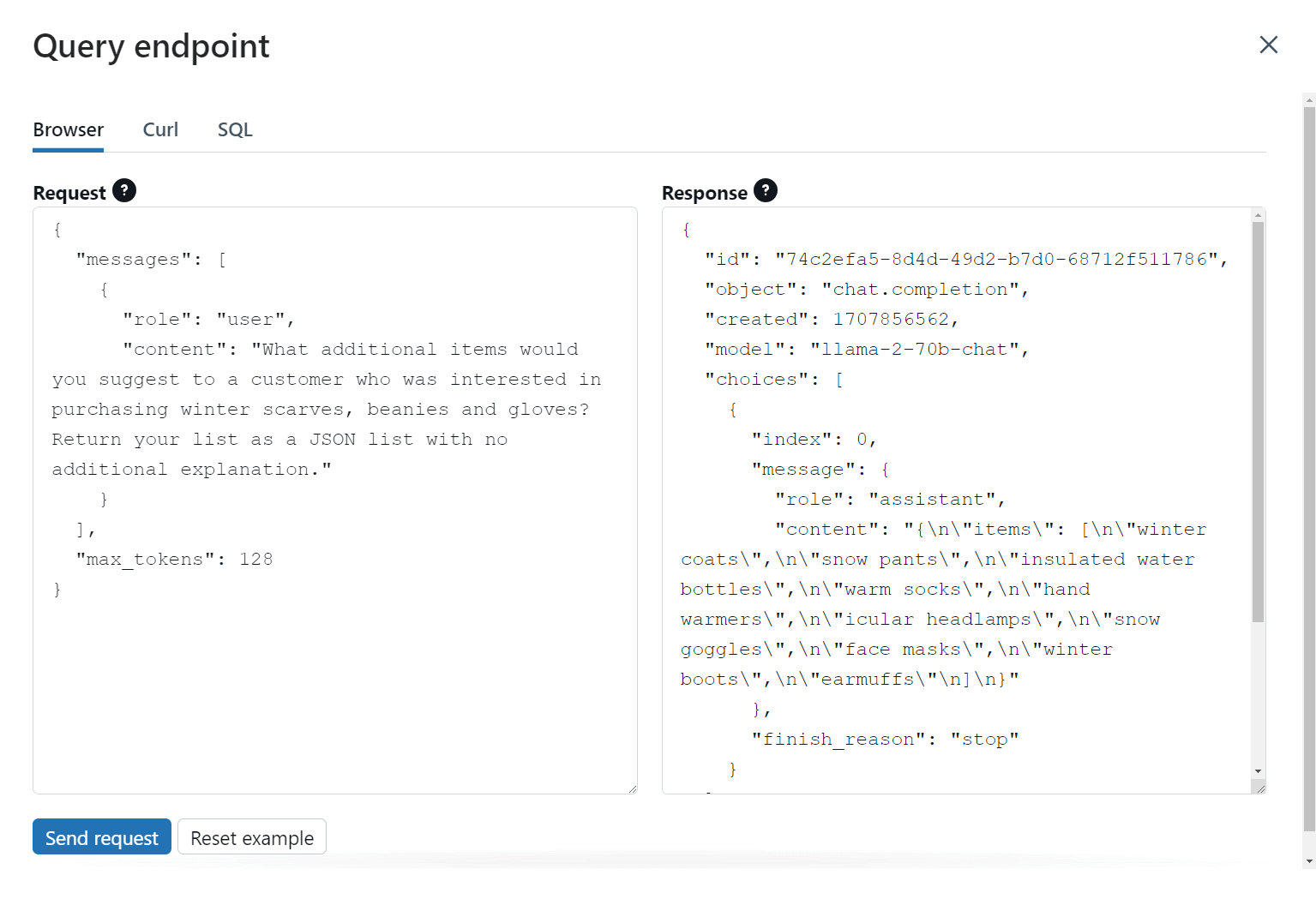

When the LLM is then asked what other items might be associated with a scarf, beanie and mittens, all of this knowledge, captured in billions of internal parameters, is used to suggest a prioritized list of additional items that are likely of interest. (Figure 1)

The beauty of this approach is that we are not limited to asking the LLM to consider just the items in the cart in isolation. We might recognize that a customer shopping for these winter items in south Texas may have a certain set of preferences that differ from a customer shopping these same items in northern Minnesota and incorporate that geographic information into the LLM’s prompt. We might also incorporate information about promotional campaigns or events to encourage the LLM to suggest items associated with those efforts. Again, much like a store associate, the LLM can balance a variety of inputs to arrive at a meaningful but still relevant set of recommendations.
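To make the idea of enriching the prompt concrete, here is a minimal sketch of how cart items, a shopper's location and active promotions might be assembled into one prompt. The function name, parameters and prompt wording are illustrative assumptions rather than the accelerator's code, and the sketch stops short of calling any particular LLM API.

```python
from typing import Optional, Sequence


def build_recommendation_prompt(
    cart_items: Sequence[str],
    location: Optional[str] = None,
    promotions: Sequence[str] = (),
    max_suggestions: int = 5,
) -> str:
    """Assemble a plain-text prompt asking an LLM for related product types.

    Hypothetical helper: the wording and parameters are assumptions made
    for illustration only.
    """
    lines = [
        "You are a helpful retail sales associate.",
        f"A customer is interested in: {', '.join(cart_items)}.",
    ]
    # Optional context is added only when it is available.
    if location:
        lines.append(f"The customer is shopping from {location}.")
    if promotions:
        lines.append(
            f"Current promotions to favor when relevant: {', '.join(promotions)}."
        )
    lines.append(
        f"Suggest up to {max_suggestions} additional general product types, "
        "most relevant first, one per line."
    )
    return "\n".join(lines)


prompt = build_recommendation_prompt(
    ["winter scarf", "beanie", "mittens"],
    location="northern Minnesota",
    promotions=["winter boots launch"],
)
print(prompt)
```

Keeping the optional context as separate parameters means the same cart can be sent with or without geographic or promotional hints, which makes it easy to compare how each input shifts the suggestions.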

Connecting the Recommendations with Available Products

But how do we relate the general product suggestions provided by the LLM back to the specific items in our product catalog? LLMs trained on publicly available datasets don’t typically have knowledge of the specific items in a retailer’s product portfolio, and training such a model with retailer-specific information is both time-consuming and cost-prohibitive.

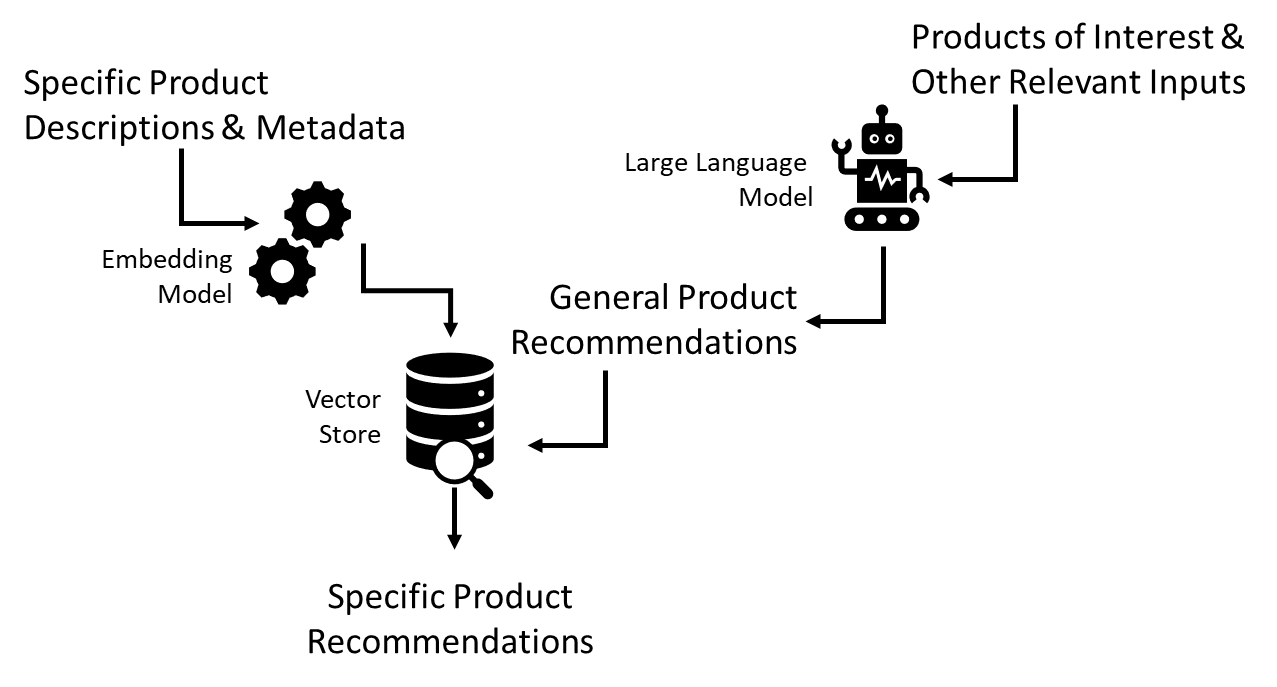

The solution to this problem is relatively simple. Using a lightweight embedding model, such as one of the many open source models freely available online, we can translate the descriptive information and other metadata for each of our products into what are known as embeddings. (Figure 2)

The concept of an embedding gets a little technical, but in a nutshell, it’s a numerical representation of a piece of text that captures how the text maps to a set of recognized concepts and relationships found within a given language. Two conceptually similar items would have very similar numerical representations when passed through an appropriate embedding model. Consider the general suggestion of winter boots and the specific Acme Troopers, described as allowing a wearer to tromp through snowy city streets or along mountain paths in the comfort of waterproof canvas and leather uppers built to withstand winter’s worst. If we calculate the mathematical difference (distance) between the embeddings associated with each item, we’d find relatively little separation between them, indicating that these items are closely related.
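As a rough illustration of what "distance" means here, the sketch below computes cosine distance, one common choice among several, between small hand-written vectors. The four-dimensional vectors are made up purely for illustration; real embeddings come from an embedding model and typically have hundreds of dimensions.

```python
import math
from typing import Sequence


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Return 1 - cosine similarity; values near 0 mean the vectors point the same way."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_a * norm_b)


# Made-up vectors for illustration only (not output from any real model).
winter_boots = [0.9, 0.8, 0.1, 0.0]
acme_troopers = [0.8, 0.9, 0.2, 0.1]
beach_sandals = [0.1, 0.0, 0.9, 0.8]

near = cosine_distance(winter_boots, acme_troopers)
far = cosine_distance(winter_boots, beach_sandals)
print(f"winter boots vs Acme Troopers: {near:.3f}")
print(f"winter boots vs beach sandals: {far:.3f}")
```

With these toy values, the general "winter boots" vector sits much closer to the Acme Troopers vector than to the sandals vector, which is the property the search step relies on.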

To put this concept into action, all we’d need to do is convert all of our specific product descriptions and metadata into embeddings and store these in a searchable index, what is often referred to as a vector store. As the LLM makes general product recommendations, we would then translate each of these into embeddings of their own and search the vector store for the most closely related items, providing us specific items in our portfolio to place in front of our customer. (Figure 3)
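The flow described above can be sketched end to end with a tiny in-memory index. Everything here is a simplification made for illustration: `toy_embed` is a word-count stand-in for a real embedding model, `InMemoryVectorStore` is a hypothetical class rather than any product's API, and the sock and sandal products are invented alongside the Acme Troopers example.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def toy_embed(text: str) -> Counter:
    """Stand-in for a real embedding model: counts of lowercase words."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class InMemoryVectorStore:
    """Hypothetical minimal vector store: embed on insert, brute-force search."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, Counter]] = []

    def add(self, product_name: str, description: str) -> None:
        self._items.append((product_name, toy_embed(f"{product_name} {description}")))

    def search(self, query: str, k: int = 1) -> List[Tuple[str, float]]:
        query_vec = toy_embed(query)
        scored = [(name, cosine_similarity(query_vec, vec)) for name, vec in self._items]
        return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Index the catalog once (product names other than Acme Troopers are invented).
store = InMemoryVectorStore()
store.add("Acme Troopers", "waterproof winter boots with canvas and leather uppers")
store.add("Hypothetical Wool Socks", "heavy wool socks for cold weather")
store.add("Hypothetical Sandals", "lightweight beach sandals for summer")

# General suggestions, as an LLM might return them, mapped to catalog items.
for suggestion in ["winter boots", "wool socks"]:
    print(suggestion, "->", store.search(suggestion, k=1))
```

Because the stand-in embedding only matches shared words, it would miss a paraphrase such as "snow footwear". A real embedding model is what lets the search match on meaning rather than wording, and a production vector store would replace the brute-force scan with an approximate nearest-neighbor index.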

Bringing the Solution Together with Databricks

The recommender pattern presented here can be a great addition to the suite of recommenders used by organizations in scenarios where general knowledge of product associations can be leveraged to make useful suggestions to customers. To get the solution off the ground, organizations must have the ability to access a large language model as well as a lightweight embedding model and bring together the functionality of both of these with their own, proprietary information. Once this is done, the organization needs the ability to turn all of these assets into a solution which can easily be integrated and scaled across the range of customer-facing interfaces where these recommendations are needed.

Through the Databricks Data Intelligence Platform, organizations can address each of these challenges through a single, consistent, unified environment that makes implementation and deployment easy and cost effective while retaining data privacy. With Databricks’ new Vector Search capability, developers can tap into an integrated vector store with surrounding workflows that ensure the embeddings housed within it are up to date. Through the new Foundation Model APIs, developers can tap into a wide range of open source and proprietary large language models with minimal setup. And through enhanced Model Serving capabilities, the end-to-end recommender workflow can be packaged for deployment behind an open and secure endpoint that enables integration across the widest range of modern applications.

But don’t just take our word for it. See it for yourself. In our newest solution accelerator, we have built an LLM-based product recommender implementing the pattern shown here and demonstrating how these capabilities can be brought together to go from concept to operationalized deployment. All the code is freely available, and we invite you to explore this solution in your environment as part of our commitment to helping organizations maximize the potential of their data.

Download the notebooks
