Deploying Third-Party Models Securely with the Databricks Data Intelligence Platform and HiddenLayer Model Scanner

by Arun Pamulapati, David Wells, Neil Archibald and Hiep Dang

Introduction

The ability for organizations to adopt machine learning, AI, and large language models (LLMs) has accelerated in recent years thanks to the popularization of model zoos – public repositories like Hugging Face and TensorFlow Hub that are populated with pre-trained models/LLMs with cutting-edge proficiencies in image recognition, natural language processing, in-house chatbots, assistants, and more.

Cybersecurity Risks of Third-Party Models

While convenient, model zoos give malicious actors an opportunity to abuse the open nature of public repositories. For example, recent research by our partners at HiddenLayer showed how public machine learning models can be weaponized with ransomware and how attackers can take over Hugging Face services to hijack models submitted to the platform. These scenarios create two new risks: trojaned models and model supply chain attacks.

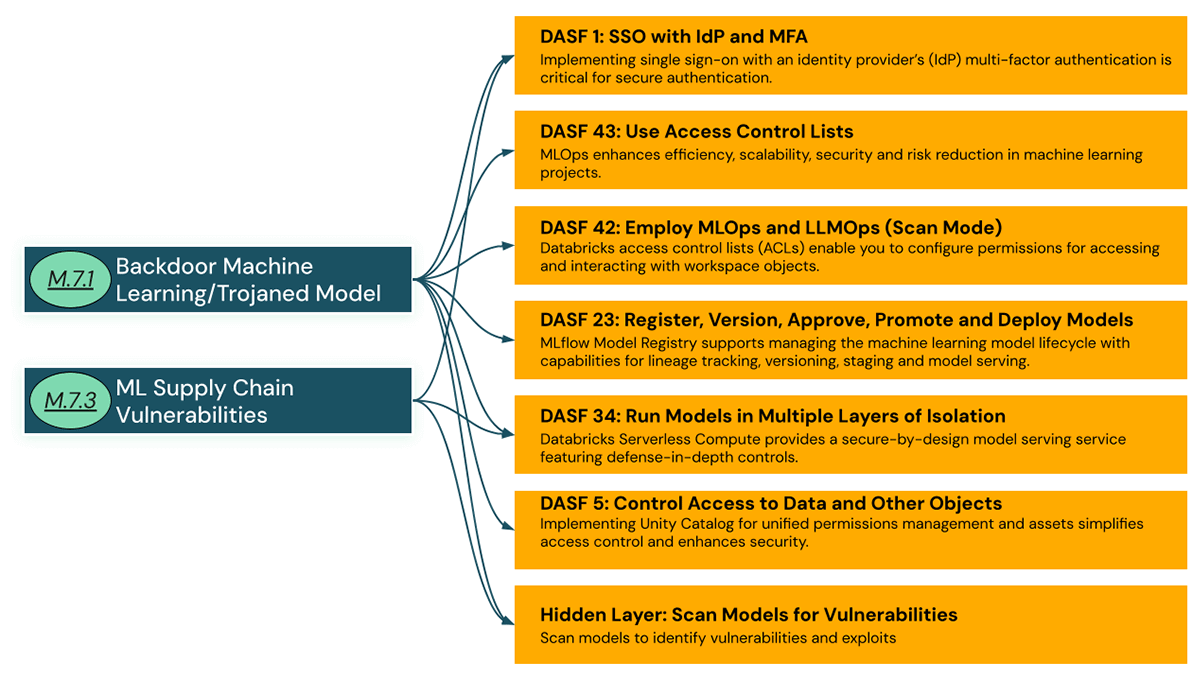

Industry recognition of these vulnerabilities is increasing [1]. Databricks recently published the Databricks AI Security Framework (DASF), which documents the risks associated with enterprise ML and AI programs, such as Model 7.1: Backdoor Machine Learning / Trojaned model and Model 7.3: ML Supply chain vulnerabilities, along with the mitigating controls that Databricks customers can use to secure those programs. Note that Databricks performs AI red teaming on all of our hosted foundation models, as well as on models used by our internal AI systems. We also scan model and weight files for malware, including pickle import checks and ClamAV scans. See Databricks' Approach to Responsible AI for more details on how we are building trust in intelligent applications by following responsible practices in the development and use of AI.

In this blog, we will explore the comprehensive risk mitigation controls available in the Databricks Data Intelligence Platform to protect organizations from the above attacks on third-party models and how you can extend them further by using HiddenLayer for model scanning.

Mitigations

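The Databricks Data Intelligence Platform adopts a comprehensive strategy to mitigate security risks in AI and ML systems. Here are the high-level mitigation controls we recommend when bringing a third-party model into Databricks:

- DASF 1: SSO with IdP and MFA

- DASF 43: Use access control lists

- DASF 42: Employ MLOps and LLMOps (Scan Models)

- DASF 23: Register, version, approve, promote and deploy models

- DASF 34: Run models in multiple layers of isolation

- DASF 5: Control access to data and other objects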

Let's examine how these controls can be deployed and how they make it safer for organizations to run third-party models.

DASF 1: SSO with IdP and MFA

Strongly authenticating users and restricting model deployment privileges helps secure access to data and AI platforms, prevents unauthorized access to machine learning systems, and ensures that only authenticated users can download third-party models. To do this, Databricks recommends that you:

- Adopt single sign-on (SSO) and multi-factor authentication (MFA) (AWS, Azure, GCP).

- Synchronize users and groups with your SAML 2.0 IdP via SCIM.

- Control authentication from verified IP addresses using IP access lists (AWS, Azure, GCP).

- Use a cloud private connectivity service (AWS, Azure, GCP) so that communication between users and the Databricks control plane does not traverse the public internet.
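As a hedged illustration of the IP access list control above, the sketch below validates a set of CIDR ranges and builds a request body with the `label`, `list_type`, and `ip_addresses` fields used by the Databricks IP access lists REST API (`POST /api/2.0/ip-access-lists`). The label and address ranges are hypothetical (they come from documentation-reserved ranges), and the body should be checked against the API reference for your cloud before it is sent.

```python
import ipaddress
import json


def build_ip_access_list(label, cidrs, list_type="ALLOW"):
    """Validate CIDR blocks and build the request body for an IP access list."""
    if list_type not in ("ALLOW", "BLOCK"):
        raise ValueError(f"unsupported list_type: {list_type}")
    validated = []
    for cidr in cidrs:
        # strict=False accepts a single host such as 198.51.100.7 as a /32 network.
        network = ipaddress.ip_network(cidr, strict=False)
        if network.prefixlen == 0:
            raise ValueError("refusing to list the entire address space")
        validated.append(str(network))
    return {"label": label, "list_type": list_type, "ip_addresses": validated}


# Hypothetical corporate VPN ranges; replace with your verified egress addresses.
payload = build_ip_access_list("corp-vpn", ["203.0.113.0/24", "198.51.100.7"])
print(json.dumps(payload, indent=2))
```

Validating the ranges before submitting them guards against a typo such as `0.0.0.0/0`, which would silently defeat the control.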

DASF 43: Use access control lists

Use Databricks Unity Catalog to implement access control lists (ACLs) on workspace objects, adhering to the principle of least privilege. ACLs are essential for managing permissions on workspace objects, including folders, notebooks, models, clusters, and jobs. Unity Catalog is a centralized governance tool that enables organizations to maintain consistent standards across the MLOps lifecycle and gives teams control over their workspaces. It simplifies ACL management in ML environments by removing the need to set permissions separately in each of the tools that different teams use. Use these ACLs to limit who can bring in, deploy, and run third-party models in your organization.

For those new to Databricks seeking guidance on aligning workspace-level permissions with typical user roles, see the Proposal for Getting Started With Databricks Groups and Permissions.
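To make the least-privilege idea concrete, here is a minimal sketch that generates the Unity Catalog `GRANT` statements a single group would need in order to register models in one schema. The catalog, schema, and group names are hypothetical, and the set of privileges is an assumption about a typical setup. Confirm it against the Unity Catalog privileges documentation before running the statements.

```python
import re

_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def model_onboarding_grants(catalog, schema, group):
    """Return the GRANT statements that let `group` register models in one schema."""
    for name in (catalog, schema):
        if not _IDENTIFIER.match(name):
            raise ValueError(f"unexpected identifier: {name!r}")
    if "`" in group:
        raise ValueError("group name must not contain backticks")
    principal = f"`{group}`"
    target = f"{catalog}.{schema}"
    return [
        f"GRANT USE CATALOG ON CATALOG {catalog} TO {principal}",
        f"GRANT USE SCHEMA ON SCHEMA {target} TO {principal}",
        f"GRANT CREATE MODEL ON SCHEMA {target} TO {principal}",
    ]


# Hypothetical names: only the model-importers group may add models to this schema.
statements = model_onboarding_grants("ml_sandbox", "third_party", "model-importers")
for statement in statements:
    print(statement)
```

Scoping the grants to one dedicated schema keeps third-party models separate from production assets until they have been scanned and approved.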

DASF 42: Employ MLOps and LLMOps (Scan Models)

Model zoos offer limited security measures for repository content, which makes them potential vectors for threat actors to distribute attacks. Deploying third-party models therefore requires thorough validation and security scanning. Tools like Modelscan and the Fickling library are open-source solutions for assessing the integrity of machine learning models, but they lack production-ready services. A more robust option is the HiddenLayer Model Scanner, a cybersecurity tool designed to uncover hidden threats within machine learning models, including malware and vulnerabilities. The scanner integrates with Databricks, allowing models to be scanned in the registry and throughout the ML development lifecycle.

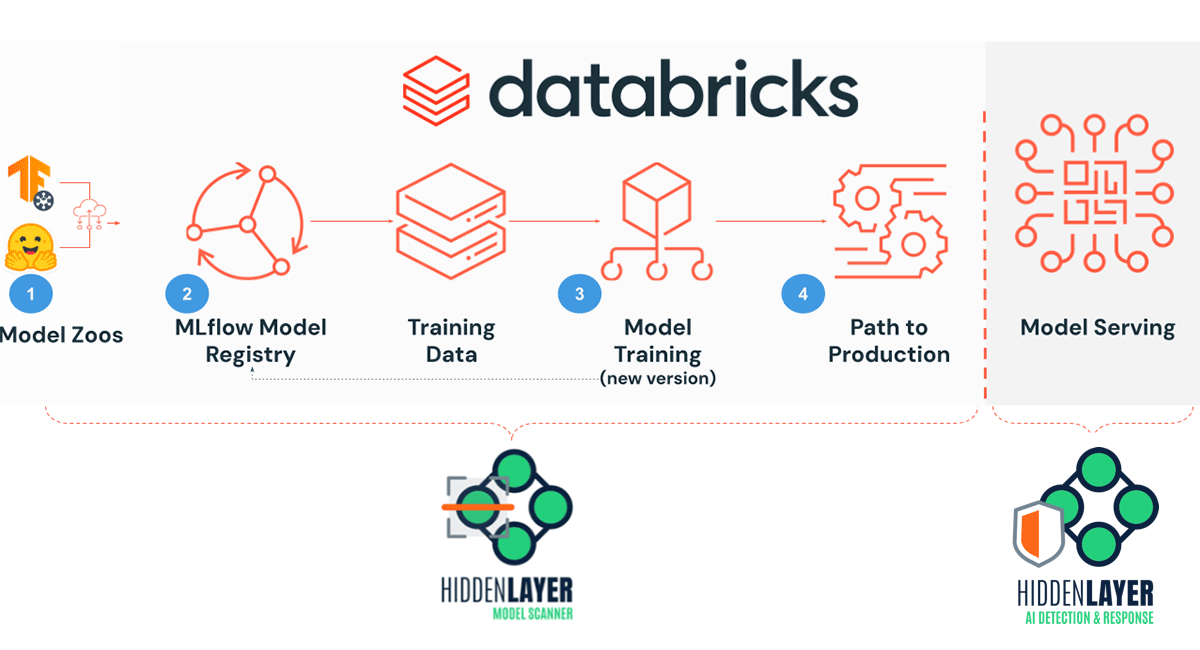

HiddenLayer's Model Scanner can be used at multiple stages of the ML Operations lifecycle to ensure security:

- Scan third-party models upon download to prevent malware and backdoor threats.

- Conduct scans on all models within the Databricks registry to identify latent security risks.

- Regularly scan new model versions to detect and mitigate vulnerabilities early in development.

- Enforce model scanning before transitioning to production to confirm their safety.
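To give a feel for what one class of model scanning involves, the sketch below lists the imports that a pickle-serialized file would trigger, without ever loading it. It is a simplified heuristic written with Python's standard `pickletools` module. It is not how HiddenLayer, Modelscan, or Fickling work internally, and the list of suspicious modules is an illustrative assumption, not a complete denylist.

```python
import pickle
import pickletools

# Illustrative, incomplete set of modules that rarely belong in model weights.
SUSPICIOUS_MODULES = {"os", "posix", "nt", "subprocess", "builtins", "__builtin__", "sys", "socket"}


def pickle_imports(data):
    """Return (module, name) pairs a pickle would import, without unpickling it."""
    imports = []
    strings = []  # string arguments seen so far, used to resolve STACK_GLOBAL
    for opcode, arg, _pos in pickletools.genops(data):
        if opcode.name in ("GLOBAL", "INST"):
            module, name = arg.split(" ", 1)
            imports.append((module, name))
        elif opcode.name == "STACK_GLOBAL":
            # Heuristic: module and name are normally the two most recent strings.
            if len(strings) >= 2:
                imports.append((strings[-2], strings[-1]))
        elif isinstance(arg, str):
            strings.append(arg)
    return imports


def flag_suspicious(data):
    """Keep only the imports whose top-level module is on the suspicious list."""
    return [(m, n) for m, n in pickle_imports(data) if m.split(".")[0] in SUSPICIOUS_MODULES]


class Payload:
    """Stand-in for a trojaned object; eval of '1 + 1' replaces real attacker code."""

    def __reduce__(self):
        return (eval, ("1 + 1",))


blob = pickle.dumps(Payload())  # serializing does not execute the payload
print(flag_suspicious(blob))
```

A static check like this only covers pickle-based formats and can be evaded by obfuscation, which is one reason a dedicated scanner is the more robust option.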

Download and Scan HuggingFace models with HiddenLayer Model Scanner

HiddenLayer provides a Databricks notebook for its AISec Platform that runs in your Databricks environment (DBR 11.3 LTS ML and higher). Before bringing a third-party model into your Databricks Data Intelligence Platform, you can run the notebook manually or integrate it into the CI/CD process of your MLOps routine. HiddenLayer determines whether the model is safe or unsafe, provides detection details and context such as severity and hashes, and maps detections to MITRE ATLAS tactics and techniques such as AML.T0010.003 - ML Supply Chain Compromise: Model, the risk discussed in the introduction. If a model carries inherent cybersecurity risk or contains malicious code, you can reject it from further consideration in your model pipeline.

All detections can be viewed in the HiddenLayer AISec Platform console. The overview lists each detection, and the dashboard shows an aggregated view of the detections. To view detections for a specific model, navigate to the model card and choose the detection.
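The download-then-scan gate described above could be wired into a pipeline along the following lines. This is a sketch under stated assumptions: `scan` is a hypothetical callable standing in for whatever scanner you invoke (it is not the HiddenLayer API), and the function names are illustrative. The file hashing uses only the standard library and gives you a manifest to compare against the hashes a scanner reports.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large weight files never sit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def hash_model_dir(model_dir):
    """Map each file's relative path to its SHA-256 digest."""
    root = Path(model_dir)
    return {
        path.relative_to(root).as_posix(): sha256_of(path)
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def admit_model(model_dir, scan):
    """Record file hashes, then reject the model unless `scan` reports it safe."""
    manifest = hash_model_dir(model_dir)
    if not scan(model_dir):  # hypothetical scanner hook: returns True when safe
        raise RuntimeError(f"model in {model_dir} failed the security scan")
    return manifest


# Demo with a throwaway directory and a stand-in scanner that approves everything.
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "weights.bin").write_bytes(b"not real weights")
    manifest = admit_model(tmp, scan=lambda _dir: True)
    print(manifest)
```

Raising an exception on an unsafe verdict makes a CI/CD job fail closed, so an unscanned or rejected model never reaches the registration step.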

DASF 23: Register, version, approve, promote and deploy models

With Models in Unity Catalog, a hosted version of the MLflow Model Registry in Unity Catalog, you can manage the full lifecycle of an ML model while using Unity Catalog's ability to share assets across Databricks workspaces and trace lineage across both data and models. Data scientists can register the third-party model with the MLflow Model Registry in Unity Catalog. For information about controlling access to models registered in Unity Catalog, see Unity Catalog privileges and securable objects.

Once a team member has approved the model, a deployment workflow can be triggered to deploy it. This provides discoverability, approvals, auditing, and security for third-party models in your organization, while the Unity Catalog permission model keeps sensitive data locked away and reduces the risk of data exfiltration.
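The registration step in this section can be sketched as follows. `mlflow.set_registry_uri("databricks-uc")` and `mlflow.register_model` are standard MLflow calls, but the catalog, schema, and model names are hypothetical, and the snippet assumes the scanned model has already been logged in an MLflow run on Databricks.

```python
def uc_model_name(catalog, schema, model):
    """Build the three-level name Unity Catalog uses for registered models."""
    parts = (catalog, schema, model)
    for part in parts:
        if not part or "." in part or "/" in part:
            raise ValueError(f"invalid name component: {part!r}")
    return ".".join(parts)


def register_in_unity_catalog(model_uri, full_name):
    """Register an already-logged model under a Unity Catalog name."""
    import mlflow  # imported here so the name helper works without MLflow installed

    mlflow.set_registry_uri("databricks-uc")  # target the Unity Catalog registry
    return mlflow.register_model(model_uri, full_name)


# Hypothetical names for a scanned third-party model.
name = uc_model_name("ml_sandbox", "third_party", "sentiment_classifier")
print(name)
# On Databricks, after logging the scanned model in an MLflow run:
# register_in_unity_catalog("runs:/<run_id>/model", name)
```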

HiddenLayer Model Scanner integrates with Databricks to scan your entire MLflow Model Registry

HiddenLayer also provides a notebook that loads and scans all the models in your MLflow Model Registry to ensure they are safe to continue with training and development. You can also set up this notebook as a Databricks Workflows job to continuously monitor the models in your model registry.
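The HiddenLayer notebook itself is not reproduced here. As a rough sketch of the enumeration step that any registry-wide scan needs, the function below walks every registered model and version using two `MlflowClient` methods, `search_registered_models` and `search_model_versions`. The in-memory client and model names are stand-ins so the example runs anywhere. Pagination of large registries is deliberately left out, and model names containing a single quote would need escaping in the filter string.

```python
from types import SimpleNamespace


def collect_scan_targets(client):
    """List (name, version, source) for every model version the client returns."""
    targets = []
    for registered in client.search_registered_models():
        for version in client.search_model_versions(f"name='{registered.name}'"):
            targets.append((version.name, version.version, version.source))
    return targets


class FakeRegistryClient:
    """In-memory stand-in exposing the two MlflowClient methods used above."""

    def __init__(self, versions):
        self._versions = versions

    def search_registered_models(self):
        names = sorted({v.name for v in self._versions})
        return [SimpleNamespace(name=n) for n in names]

    def search_model_versions(self, filter_string):
        wanted = filter_string.split("'")[1]
        return [v for v in self._versions if v.name == wanted]


fake = FakeRegistryClient([
    SimpleNamespace(name="churn", version="1", source="dbfs:/models/churn/1"),
    SimpleNamespace(name="churn", version="2", source="dbfs:/models/churn/2"),
    SimpleNamespace(name="fraud", version="1", source="dbfs:/models/fraud/1"),
])
targets = collect_scan_targets(fake)
print(targets)
```

In a scheduled job you would pass `mlflow.tracking.MlflowClient()` instead of the fake client and hand each `source` location to your scanner.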

Scan models when a new model is registered

The backbone of the continuous integration, continuous deployment (CI/CD) process is the automated building, testing, and deployment of code. A webhook, or trigger, causes code to run in response to an event. Model registry webhooks facilitate CI/CD by providing a push mechanism to run a test or deployment pipeline and send notifications through the platform of your choice. They can be triggered by events such as the creation of new model versions, the addition of new comments, and the transition of model version stages. For machine learning jobs, this mechanism can be used to scan a model as soon as it arrives in the model registry. To do this, specify "events": ["REGISTERED_MODEL_CREATED"] in the webhook definition so that it fires when a new registered model is created.

There are two types of MLflow Model Registry webhooks:

- Webhooks with job triggers: trigger a job in a Databricks workspace.

  - You can use this type to scan a model that was just registered with the MLflow Model Registry, or to scan a model version when it is promoted to the next stage.

- Webhooks with HTTP endpoints: send triggers to any HTTP endpoint.

  - You can use this type to notify the human in the loop of the model scan status.
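As an illustration of the job-trigger type, the sketch below builds a request body for the Databricks registry webhooks REST API (`POST /api/2.0/mlflow/registry-webhooks/create`), using its `events`, `status`, `job_spec`, and `model_name` fields. The job ID, workspace URL, and token are placeholders, and the check that `REGISTERED_MODEL_CREATED` is only used on registry-wide webhooks reflects my reading of the documentation, so verify both against the current API reference.

```python
import json

# Events assumed to be valid only for registry-wide webhooks (no model_name).
REGISTRY_WIDE_ONLY = {"REGISTERED_MODEL_CREATED"}


def job_webhook_payload(job_id, workspace_url, access_token, events, model_name=None):
    """Build the body for a webhook that triggers a scanning job on registry events."""
    events = list(events)
    if not events:
        raise ValueError("at least one event is required")
    if model_name and REGISTRY_WIDE_ONLY.intersection(events):
        raise ValueError("REGISTERED_MODEL_CREATED requires a registry-wide webhook")
    payload = {
        "events": events,
        "status": "ACTIVE",
        "job_spec": {
            "job_id": str(job_id),
            "workspace_url": workspace_url,
            "access_token": access_token,
        },
    }
    if model_name:
        payload["model_name"] = model_name
    return payload


# Placeholder values; in practice read the token from a secret scope, never from code.
payload = job_webhook_payload(
    job_id=123,
    workspace_url="https://example.cloud.databricks.com",
    access_token="<redacted>",
    events=["REGISTERED_MODEL_CREATED"],
)
print(json.dumps(payload, indent=2))
```

The job referenced by `job_id` would be the notebook job that runs the model scan.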

Scan models when their stage changes

Similar to the process described above, you can also scan models as they move between stages. Specify "events": ["MODEL_VERSION_CREATED", "MODEL_VERSION_TRANSITIONED_STAGE", "MODEL_VERSION_TRANSITIONED_TO_PRODUCTION"] in the webhook definition to trigger a scan when a new model version is created for the associated model, when a model version's stage changes, or when a model version is transitioned to production.
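On the receiving side, the triggered job has to decide which model version to scan. The sketch below assumes the webhook delivers a JSON message with `event`, `model_name`, and `version` fields. Those field names are an assumption based on the documented webhook payload examples and should be confirmed for your workspace, and the sample message is made up.

```python
import json

SCAN_EVENTS = {
    "MODEL_VERSION_CREATED",
    "MODEL_VERSION_TRANSITIONED_STAGE",
    "MODEL_VERSION_TRANSITIONED_TO_PRODUCTION",
}


def scan_request_from_event(event_message):
    """Return (model_name, version) if the event should trigger a scan, else None."""
    event = json.loads(event_message)
    if event.get("event") not in SCAN_EVENTS:
        return None
    return event["model_name"], event["version"]


# Made-up message in the assumed shape of a stage-transition event.
sample = json.dumps({
    "event": "MODEL_VERSION_TRANSITIONED_STAGE",
    "model_name": "churn",
    "version": "3",
    "to_stage": "Production",
})
print(scan_request_from_event(sample))
```

Filtering on the event name inside the job keeps the scan logic safe even if the webhook is later reconfigured to send additional event types.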

Review the webhook events documentation for other events that may better suit your requirements. Note: Webhooks are not available when you use Models in Unity Catalog. For an alternative, see "Can I use stage transition requests or trigger webhooks on events?" in the documentation.

DASF 34: Run models in multiple layers of isolation

Model serving has traditional and model-specific risks, such as Model Inversion, that practitioners must account for. Databricks Model Serving offers built-in security and a production-ready serverless framework for deploying real-time ML models as APIs. This solution simplifies integration with applications or websites while minimizing operational costs and complexity.

To further protect against adversarial ML attacks, such as model tampering or theft, it's important to deploy real-time monitoring systems like HiddenLayer Machine Learning Detection & Response (MLDR), which scrutinizes the inputs and outputs of machine learning algorithms for unusual activity.

In the event of a compromise, whether through traditional malware or adversarial ML techniques, using Databricks Model Serving with HiddenLayer MLDR limits the impact because the affected model is:

- Isolated to dedicated compute resources, which are securely wiped after use.

- Network-segmented to restrict access only to authorized resources.

- Governed by the principle of least privilege, effectively containing any potential threat within the isolated environment.

DASF 5: Control access to data and other objects

Most production models do not run as isolated systems; they require access to feature stores and data sets to run effectively. Even within isolated environments, it's essential to meticulously manage data and resource permissions to prevent unintended data access by models. Leveraging Unity Catalog's privileges and securable objects is vital to safely integrating LLMs with corporate databases and documents. This is especially relevant for creating Retrieval-Augmented Generation (RAG) scenarios, where LLMs are tailored to specific domains and use cases—such as summarizing rows from a database or text from PDF documents.

Such integrations expose sensitive enterprise data to a new attack surface. If that data is not properly secured, or if the model's identity is overprivileged, unauthorized data could be fed into models. This risk extends to tabular data models that use feature store tables for inference. To mitigate these risks, ensure that data and enterprise assets are correctly permissioned and secured using Unity Catalog. Additionally, maintain network separation between your models and data sources to reduce the chance of a malicious model exfiltrating sensitive enterprise information.
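One simple way to reason about overprivileged access is to compare what a model's identity has been granted with what it actually needs. The sketch below does this with plain sets. The table names and privileges are hypothetical, and in practice the granted list would come from your Unity Catalog grants, not from a hard-coded list.

```python
def excess_privileges(required, granted):
    """Return grants the model's identity holds but does not need."""
    return sorted(set(granted) - set(required))


# Hypothetical (securable, privilege) pairs for a RAG serving endpoint.
required = [("main.docs.policies", "SELECT")]
granted = [
    ("main.docs.policies", "SELECT"),
    ("main.hr.salaries", "SELECT"),
    ("main.docs.policies", "MODIFY"),
]
extra = excess_privileges(required, granted)
print(extra)
```

Any pair that appears in the result is a candidate for revocation before the model goes to production.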

Conclusion

We began with the risks posed by third-party models and the potential for adversarial misuse through model zoos. We then described how to mitigate these risks on the Databricks Data Intelligence Platform using SSO with MFA, ACLs, model scanning, and the secure Databricks Model Serving infrastructure. To get started, read the Databricks AI Security Framework (DASF) whitepaper, which provides an actionable framework for managing AI security.

We strive for accuracy and to provide the latest information, but we welcome your feedback on this evolving topic. If you are interested in AI Security workshops, contact [email protected]. For more on Databricks' security practices, visit our Security and Trust Center.

To download the notebooks mentioned in this blog and learn more about the HiddenLayer AISec Platform, Model Scanner, and MLDR, please visit https://hiddenlayer.com/book-a-demo/.

1 OWASP Top 10 for LLM Applications: LLM05: Supply Chain Vulnerabilities and MITRE ATLAS™ ML Supply Chain Compromise: Model
