Security & Trust Center

Your data security is our top priority

Data Protection With Customer-Managed Keys

At Databricks, your data security is our number one priority. We know that data is one of your most valuable assets and must always be protected. That’s why we commit to encrypting customer content at rest within our control plane using cryptographically secure techniques.

At the same time, we understand that for many customers the ability to protect data with a customer-managed key (CMK) is not a nice-to-have; it’s a non-negotiable requirement.

The benefits of using customer-managed keys

Using customer-managed keys provides the following benefits:

- More control over your data – Because you manage the key that’s needed to decrypt your data, you have overall control over how and when it can be used. If you delete or revoke access to your key, it isn’t possible for Databricks (or anyone else) to decrypt that data.
- Greater reassurance in the event of a compromise – Like all of the best security teams in the world, we hope for the best but plan for the worst. In the event of a security compromise, you can simply revoke access to your CMK and with it our ongoing access to your data.
- Enforce your own rotation policies – If you use a platform-managed key (PMK), the platform owner rotates the key according to its own compliance policy. With a CMK, you can rotate the key according to your own compliance policy.
- Monitor access – As well as greater control, you also have visibility into how and when your key is being used. You can use cloud-native monitoring solutions to track the use of your CMK and detect any unauthorized attempts to access your data.
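As a rough illustration of the monitoring benefit, the sketch below scans audit records for use of a key and flags calls that were denied or that came from a principal you did not expect. It assumes AWS CloudTrail-style records (the `eventSource`, `eventName`, `userIdentity.arn`, `resources[].ARN` and `errorCode` fields); the function name, the sample events and every ARN are hypothetical placeholders, and a real deployment would more likely rely on the cloud provider's own alerting.

```python
from typing import Any, Dict, Iterable, List, Set

Event = Dict[str, Any]


def unexpected_key_use(events: Iterable[Event], key_arn: str,
                       allowed_principals: Set[str]) -> List[Event]:
    """Return KMS events on `key_arn` that were denied or came from an unlisted principal."""
    findings = []
    for event in events:
        if event.get("eventSource") != "kms.amazonaws.com":
            continue  # not a KMS API call
        touched = {res.get("ARN") for res in event.get("resources", [])}
        if key_arn not in touched:
            continue  # the call used a different key
        principal = event.get("userIdentity", {}).get("arn")
        denied = "errorCode" in event  # assumption: failed calls carry an errorCode
        if denied or principal not in allowed_principals:
            findings.append(event)
    return findings


# Hypothetical sample records; every ARN below is a placeholder.
KEY_ARN = "arn:aws:kms:us-east-1:111122223333:key/example-key-id"
PLATFORM_ROLE = "arn:aws:iam::111122223333:role/example-platform-role"
SAMPLE_EVENTS = [
    {"eventSource": "kms.amazonaws.com", "eventName": "Decrypt",
     "userIdentity": {"arn": PLATFORM_ROLE}, "resources": [{"ARN": KEY_ARN}]},
    {"eventSource": "kms.amazonaws.com", "eventName": "Decrypt",
     "userIdentity": {"arn": "arn:aws:iam::111122223333:user/example-unknown"},
     "resources": [{"ARN": KEY_ARN}], "errorCode": "AccessDenied"},
    {"eventSource": "s3.amazonaws.com", "eventName": "GetObject",
     "userIdentity": {"arn": PLATFORM_ROLE}, "resources": []},
]

suspicious = unexpected_key_use(SAMPLE_EVENTS, KEY_ARN, {PLATFORM_ROLE})
for event in suspicious:
    print(event["eventName"], event["userIdentity"]["arn"], event.get("errorCode"))
```

Only the denied call from the unlisted principal is reported here; the successful call from the expected role and the unrelated storage event are ignored.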

How it works

Databricks supports the use of CMKs managed by the following providers:

- AWS Key Management Service (KMS) for Databricks on AWS
- Microsoft Azure Key Vault for Azure Databricks

When you choose to leverage our CMK features, Databricks uses your CMK as part of the keychain to encrypt the following:

- Your code – the notebooks and SQL queries that your users develop on the platform
- Your data – the data that you process and the results of the queries that your users run on the platform
- Your models – the machine learning models and associated artifacts that you train or deploy on the platform
- Your credentials – the credentials that your users store as secrets within the platform

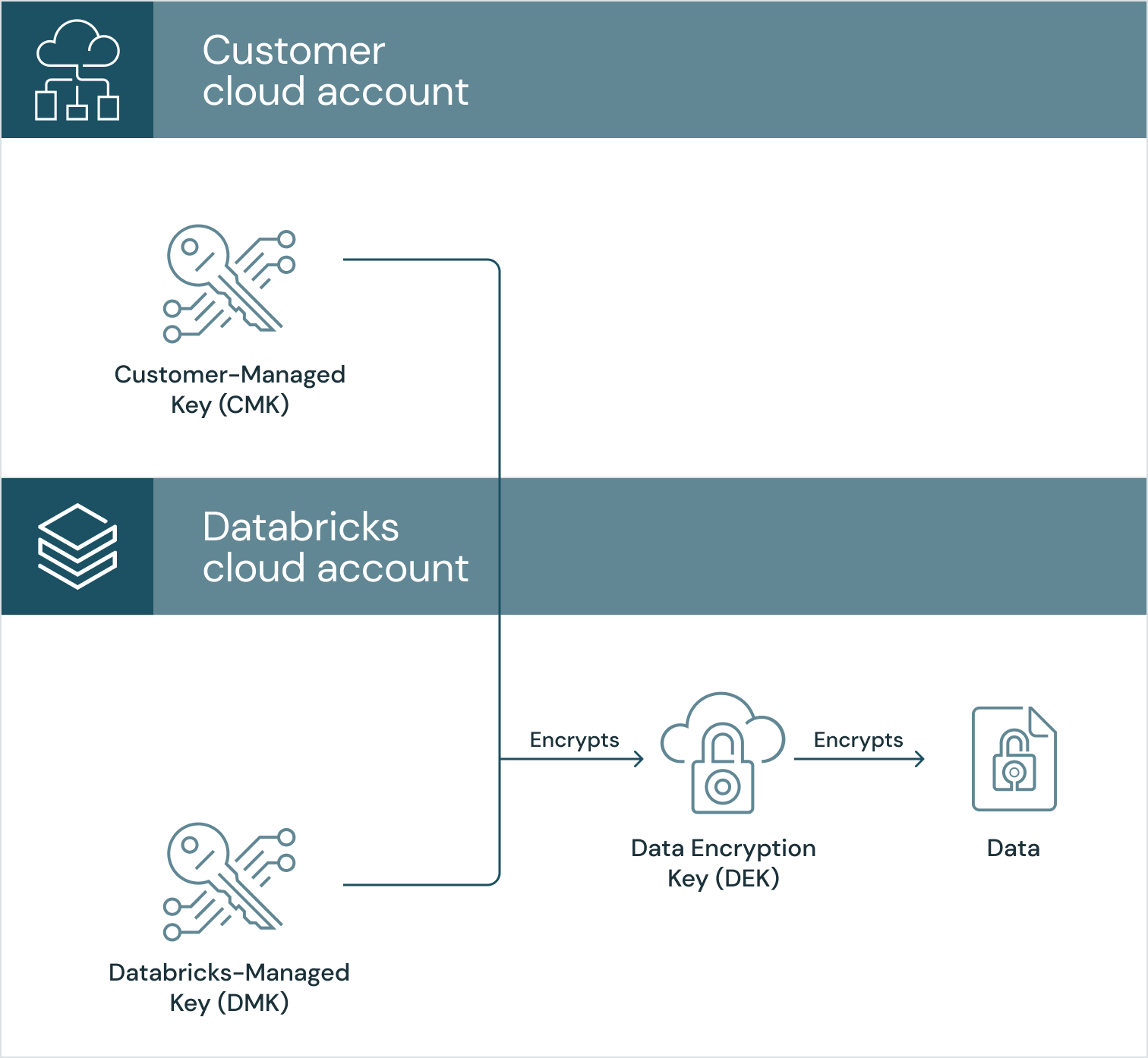

Data in the Control Plane

For data that is stored in the control plane, we use a technique called envelope encryption to encrypt the data encryption key (DEK) that’s used to encrypt your data. This is a well-regarded technique, often recommended in cloud provider best practices (AWS, Azure, GCP). We securely generate the AES-256 DEK via trusted libraries such as javax.crypto. Once the DEK is generated, it is encrypted with your customer-managed key, which is stored in the cloud key management service for your account. The encrypted DEK is then re-encrypted with a Databricks-managed key, which is stored in the cloud key management service for our account.
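The layering described above can be sketched in a few lines. This is a minimal illustration of the key hierarchy only, not the platform's implementation: to stay runnable with the Python standard library, the `wrap` and `unwrap` helpers use a toy keystream-plus-HMAC construction that stands in for AES-256 and must not be used to protect real data. All names are hypothetical, and in a real deployment the customer-managed and platform-managed keys never leave their key management services, so each wrap or unwrap would be a remote call.

```python
import hashlib
import hmac
import secrets

NONCE_LEN = 16
TAG_LEN = 32


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Derive `length` bytes from key and nonce (toy stand-in, NOT AES)."""
    return hashlib.shake_256(key + nonce).digest(length)


def wrap(key: bytes, plaintext: bytes) -> bytes:
    """Encrypt `plaintext` under `key`; returns nonce || ciphertext || tag."""
    nonce = secrets.token_bytes(NONCE_LEN)
    stream = _keystream(key, nonce, len(plaintext))
    ciphertext = bytes(a ^ b for a, b in zip(plaintext, stream))
    tag = hmac.new(key, nonce + ciphertext, hashlib.sha256).digest()
    return nonce + ciphertext + tag


def unwrap(key: bytes, blob: bytes) -> bytes:
    """Reverse `wrap`; raises ValueError on a wrong key or a modified blob."""
    nonce, ciphertext, tag = blob[:NONCE_LEN], blob[NONCE_LEN:-TAG_LEN], blob[-TAG_LEN:]
    expected = hmac.new(key, nonce + ciphertext, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("wrong key or tampered blob")
    stream = _keystream(key, nonce, len(ciphertext))
    return bytes(a ^ b for a, b in zip(ciphertext, stream))


# Stand-ins for keys that would really live inside each account's KMS.
customer_key = secrets.token_bytes(32)   # customer-managed key (CMK)
platform_key = secrets.token_bytes(32)   # platform-managed key

# Write path: generate a 256-bit DEK, encrypt the data with it, then wrap the
# DEK with the CMK first and the platform-managed key second.
dek = secrets.token_bytes(32)
encrypted_data = wrap(dek, b"notebook contents")
stored_dek = wrap(platform_key, wrap(customer_key, dek))

# Read path: peel the layers in reverse order; both keys are required.
recovered_dek = unwrap(customer_key, unwrap(platform_key, stored_dek))
assert unwrap(recovered_dek, encrypted_data) == b"notebook contents"
```

Because the inner layer can only be removed with the customer-managed key, withdrawing access to that key leaves the stored DEK, and therefore the data, unreadable even to a holder of the platform-managed key.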

The Databricks managed services need regular access to your CMK to unwrap the DEK and therefore decrypt the data. So that we don’t overwhelm the cloud key management service, and to allow for cloud provider service issues, we cache the DEK with a timeout period, after which we clear the cache. If you delete or revoke access to your key, we won’t be able to access your data once the cached DEK has been cleared.
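The caching behaviour can be pictured with a small time-to-live cache. This is a sketch under stated assumptions rather than the service's actual code: `DekCache` and `FakeKms` are hypothetical names, the 300-second timeout is an arbitrary illustrative value (the real timeout is not stated here), and the key service is simulated in memory.

```python
import time
from typing import Callable, Optional


class DekCache:
    """Holds an unwrapped DEK for at most `ttl_seconds` before asking the KMS again."""

    def __init__(self, unwrap_dek: Callable[[], bytes], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._unwrap_dek = unwrap_dek
        self._ttl = ttl_seconds
        self._clock = clock
        self._dek: Optional[bytes] = None
        self._expires_at = 0.0

    def get(self) -> bytes:
        now = self._clock()
        if self._dek is None or now >= self._expires_at:
            self._dek = None                 # clear the expired entry first
            self._dek = self._unwrap_dek()   # raises if key access was revoked
            self._expires_at = now + self._ttl
        return self._dek


class FakeKms:
    """In-memory stand-in for a cloud key management service (hypothetical)."""

    def __init__(self) -> None:
        self.revoked = False
        self.calls = 0

    def unwrap(self) -> bytes:
        self.calls += 1
        if self.revoked:
            raise PermissionError("access to the customer-managed key was revoked")
        return b"\x00" * 32  # placeholder DEK bytes


now = [0.0]  # controllable clock so the example does not need to sleep
kms = FakeKms()
cache = DekCache(kms.unwrap, ttl_seconds=300.0, clock=lambda: now[0])
cache.get()
cache.get()
print(kms.calls)  # two reads, but only one call to the key service
```

If `kms.revoked` is set to `True`, `cache.get()` keeps returning the cached DEK until the clock reaches the expiry time, and then raises `PermissionError`, which mirrors how revocation takes effect once the cache is cleared.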

Please see our documentation on AWS and Azure for step-by-step instructions on how to enable customer-managed keys for managed services.

Data in the Data Plane

With Databricks, almost all of your data stays in your account under your control. For the reasons outlined above, you might still want to encrypt that data with a CMK, and Databricks supports this for data at rest in storage and within the data plane hosts.

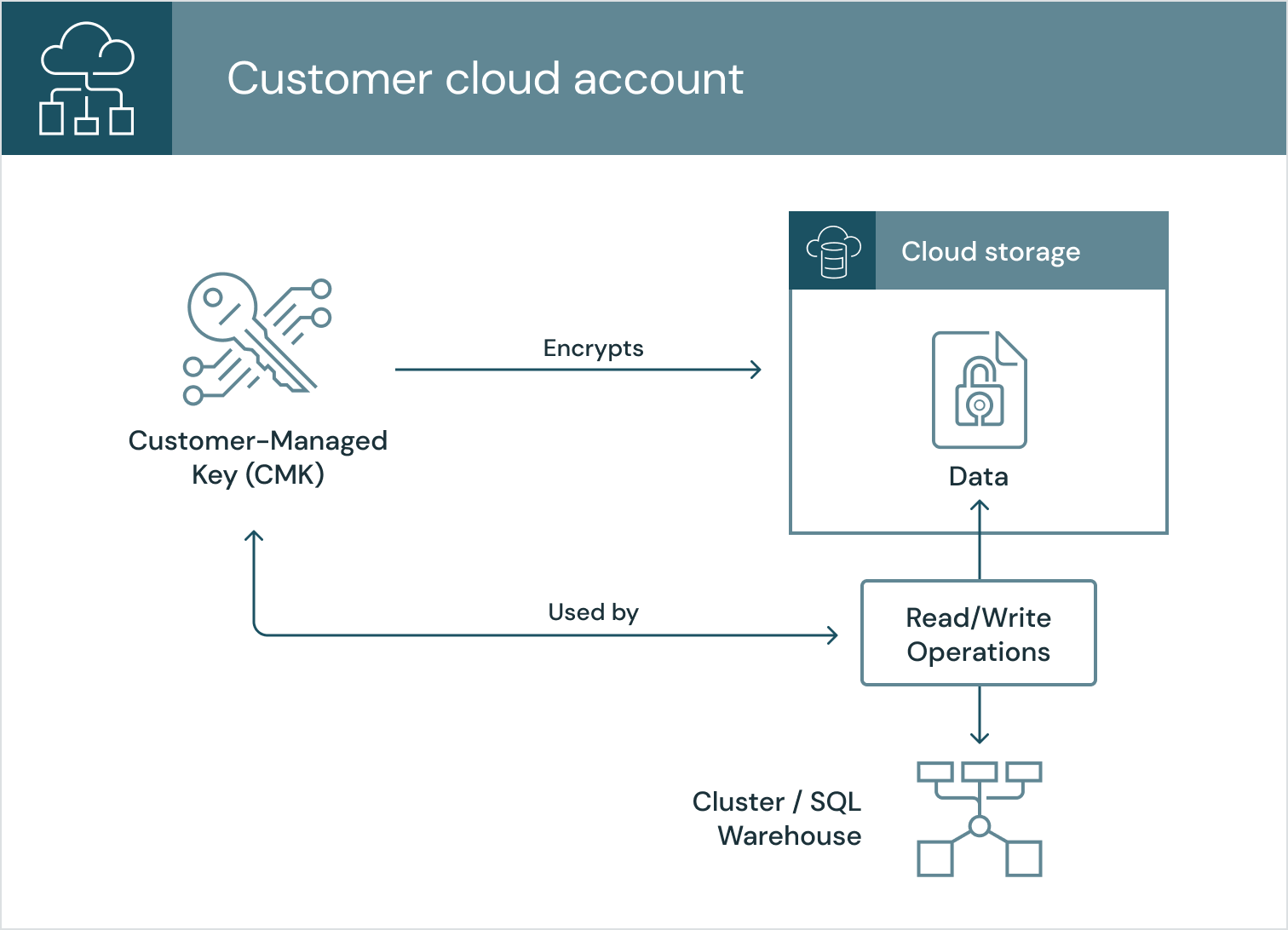

Data plane storage

You can configure Databricks to use your CMK to encrypt data at rest in your account. The same principle applies: if you delete or revoke access to your key, we can’t decrypt the data. This protection applies whether that data is in:

- The root storage account associated with a Databricks workspace
- The storage accounts managed or accessed via Unity Catalog
- The storage accounts that you use to store all of your remaining lakehouse data

When you configure CMK for these storage accounts, Databricks uses your key to encrypt data on future writes to, and decrypt data on future reads from, your storage account. Please see the documentation for how to configure CMK for:

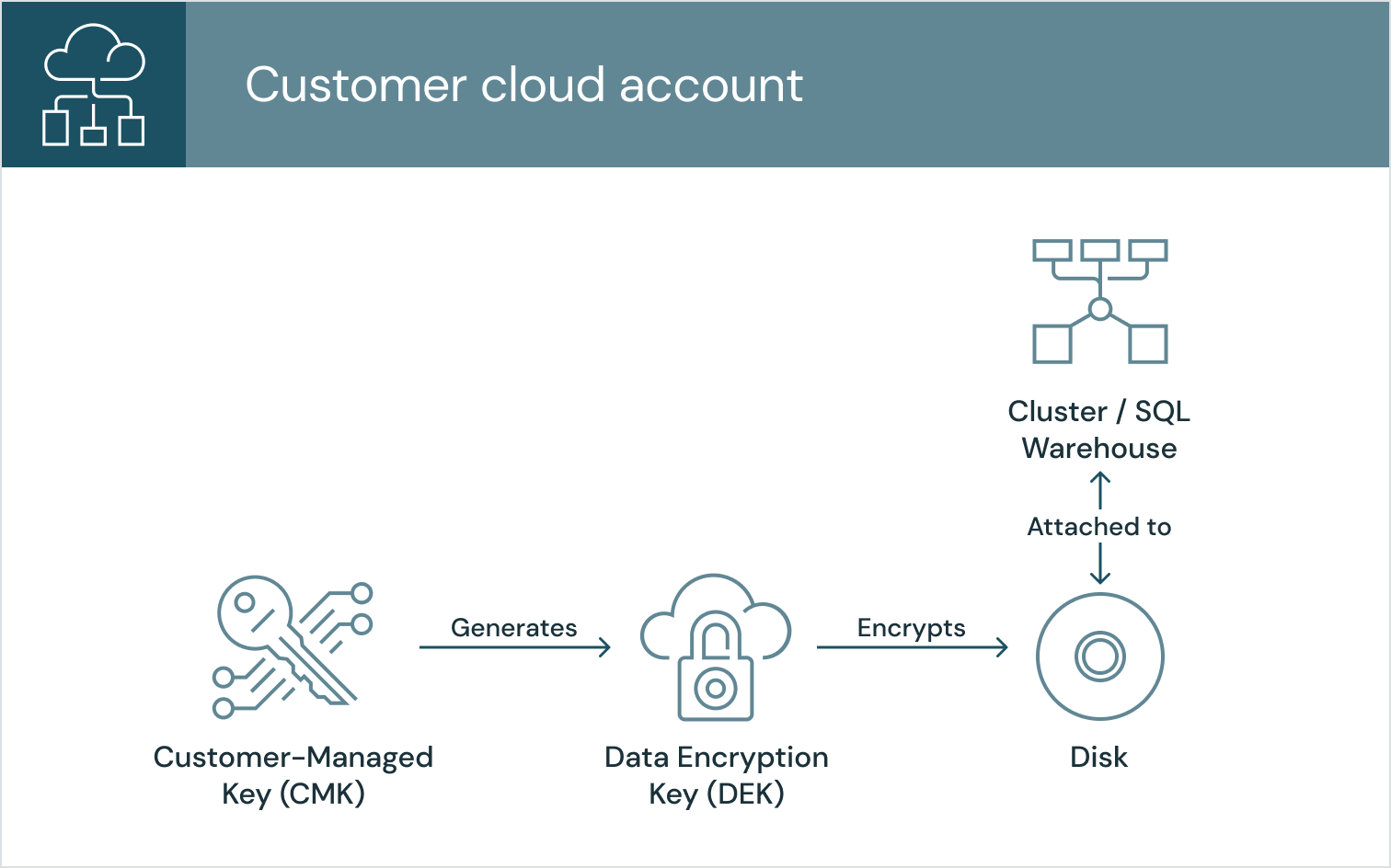

Classic data plane hosts

You can configure Databricks to use your CMK to encrypt the EBS volumes (AWS) or Managed Disks (Azure) that get attached to clusters and SQL warehouses in the classic data plane. Once this is configured, the cloud provider uses your CMK to generate a data encryption key (DEK), which is used to encrypt the disk.